AI Product Management as Governance Design

As AI products move from promising demos to critical infrastructure, the role of the product manager changes in a meaningful way. You are no longer just responsible for defining features and shipping roadmap items. You are responsible for shaping how the system behaves in the real world.

That shift matters because AI products are not ordinary software products. They can generate outputs, make recommendations, trigger actions, and influence decisions in ways that are probabilistic rather than deterministic. That means product management has to account not only for what the system does, but also for when it should defer, how it should escalate, and how its behavior will be monitored after launch.

Why AI product management is different

Traditional product management asks a familiar question: what should we build?

AI product management still asks that question, but it adds several more:

- What should the system do on its own?

- When should it defer to a human?

- What happens when it is wrong?

- How do we know it is still behaving correctly after launch?

That makes the PM’s job broader, not different in kind. The product manager still defines the problem, the user, and the value. But in AI, they also have to define the boundaries of autonomy, the boundaries of risk, and the boundaries of trust.

```mermaid
graph LR
    A[Business Goal] --> B[User Workflow]
    B --> C[Model Behavior]
    C --> D[Governance Controls]
    D --> E[Production Monitoring]
    style A fill:#FBF7F0,stroke:#1B1917,stroke-width:2px
    style E fill:#FBF7F0,stroke:#1B1917,stroke-width:2px
```

Why governance belongs in product

In traditional software, governance is often treated as a downstream review function. Legal or compliance steps in late, checks the box, and signs off. That model breaks down in AI.

If a model hallucinates, leaks data, or produces biased outputs, that is not just a governance issue. It is a product failure. The product itself is behaving in a way that creates risk. That means governance cannot sit outside the product. It has to be designed into it.

The best AI product managers understand that governance is not separate from product design. It is part of the product’s operating logic.

Audit note: An AI product manager's accountability is measured by how tightly the model's behavior is bounded under edge conditions.

The governance designer mindset

An AI product manager should think like a governance designer because they sit at the intersection of three domains:

Technical feasibility

What can the system actually do, and where are its failure modes?

User experience

How does the system behave when it is uncertain, and how does it hand off to a human when needed?

Business risk

What is the cost of being wrong, and what controls are needed to keep the product safe and trustworthy?

That combination is what makes the AI PM role different from a conventional PM role. You are not only deciding what gets built. You are deciding how the product behaves under uncertainty.

```mermaid
flowchart LR
    Start([Task Initiated]) --> Model[AI Generates Response]
    Model --> Threshold{"Confidence > Threshold?"}
    Threshold -- Yes --> Auto[Automatic Response]
    Threshold -- No --> Trigger[Human Review Trigger]
    Trigger --> Review[Escalation Path: Expert Review]
    Review --> Close([Final Output])
    Auto --> Close
    style Threshold fill:#f7f9f8,stroke:#1B1917
```
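The escalation flow above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the names `ModelResponse`, `RoutedOutput`, and `route_response`, and the threshold value of 0.85, are assumptions made for the example, and the sketch assumes the model exposes a calibrated confidence score.

```python
from dataclasses import dataclass

# Hypothetical threshold; in practice it would be chosen from evaluation data.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class ModelResponse:
    text: str
    confidence: float  # assumed to be a calibrated score in [0, 1]


@dataclass
class RoutedOutput:
    text: str
    route: str  # "automatic" or "human_review"


def route_response(response: ModelResponse,
                   threshold: float = CONFIDENCE_THRESHOLD) -> RoutedOutput:
    """Pass confident responses through; escalate everything else."""
    if response.confidence > threshold:
        return RoutedOutput(text=response.text, route="automatic")
    # At or below the threshold: hand off to an expert reviewer.
    return RoutedOutput(text=response.text, route="human_review")
```

The product decision lives in the threshold and in what happens on the review branch, which is why both belong in the product spec rather than only in the model code.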

The product roadmap needs governance

If governance is going to work in AI, it has to show up in the roadmap. That means the roadmap should not just include feature delivery. It should also include:

- behavioral guardrails

- escalation logic

- model evaluation thresholds

- human override paths

- production monitoring

Those are not secondary concerns. They are core product requirements.

An AI product that performs well in a demo but fails in production is not a successful product. It is an incomplete one.
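One way to make model evaluation thresholds a concrete roadmap item is a release gate that blocks launch when any metric misses its limit. The sketch below is illustrative only: the metric names, the limits, and the function `evaluate_release_gate` are hypothetical and would be replaced by whatever your team actually measures.

```python
# Hypothetical launch thresholds; metric names and limits are illustrative.
LAUNCH_THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "hallucination_rate": ("max", 0.02),
    "escalation_recall": ("min", 0.95),
}


def evaluate_release_gate(metrics: dict, thresholds: dict = LAUNCH_THRESHOLDS) -> list:
    """Return a list of failure messages; an empty list means the gate passes."""
    failures = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            # A metric nobody measured is treated as a failure, not a pass.
            failures.append(f"{name}: missing metric")
        elif kind == "min" and value < limit:
            failures.append(f"{name}: {value} is below the minimum {limit}")
        elif kind == "max" and value > limit:
            failures.append(f"{name}: {value} is above the maximum {limit}")
    return failures
```

Treating a missing metric as a failure is a deliberate choice in this sketch: it keeps an unmeasured behavior from silently passing the gate.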

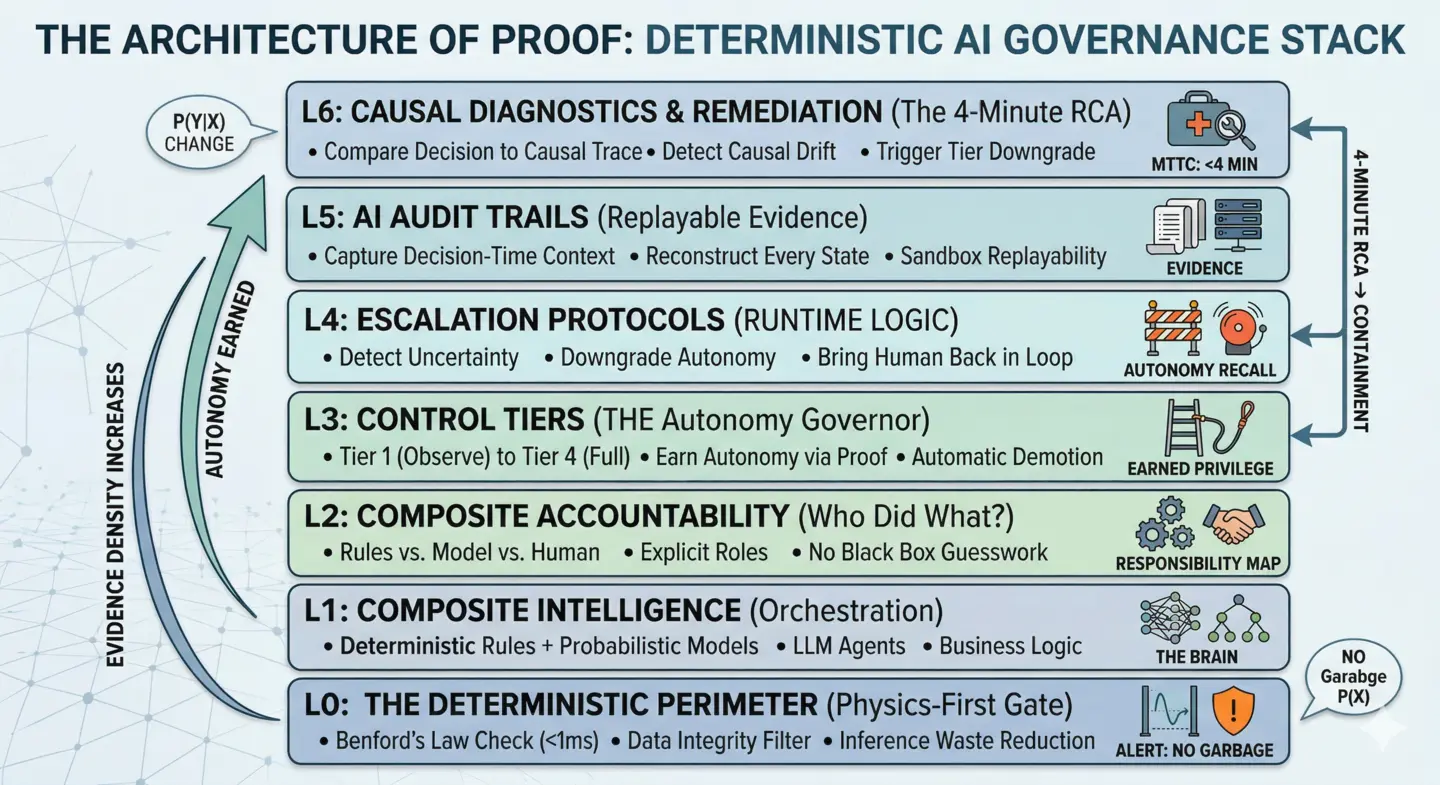

Three layers of operational governance

A useful way to think about this is through three layers:

Policy

This is the intent layer. It defines the ethical boundaries, risk appetite, and system constraints. For example, a medical AI might be prohibited from making dosage recommendations unless its confidence meets a defined threshold.

Controls

This is the implementation layer. It translates policy into product behavior, code, and system rules. Controls might include data filtering, escalation rules, or limits on what the model can output.

Production operations

This is the evidence layer. It captures what the system is actually doing in practice through monitoring, logging, incident response, and post-launch review.

These three layers only work if they are connected. Policy has to shape controls. Controls have to shape production behavior. Production evidence has to feed back into product decisions.

```mermaid
graph LR
    P[Policy] --> C[Controls]
    C --> B[Production Behavior]
    B --> M[Monitoring/Evidence]
    M --> F[Product Feedback]
    F --> P
```
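To show how the three layers connect, here is a small sketch built on the dosage example. Everything in it is an assumption made for illustration: the `Policy` class, the policy ID `POL-DOSAGE-001`, the 0.95 threshold, and the `apply_control` function are hypothetical, and a real system would write evidence to durable storage rather than an in-memory list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Policy layer: a design-time rule with a stable identifier."""
    policy_id: str
    restricted_topic: str
    min_confidence: float


# Hypothetical policy modeled on the dosage example; values are illustrative.
DOSAGE_POLICY = Policy("POL-DOSAGE-001", "dosage_recommendation", 0.95)


def apply_control(topic: str, confidence: float, policy: Policy,
                  evidence_log: list) -> str:
    """Controls layer: turn the policy into an allow/escalate decision."""
    restricted = topic == policy.restricted_topic
    if restricted and confidence < policy.min_confidence:
        decision = "escalate_to_clinician"
    else:
        decision = "allow"
    # Evidence layer: record what happened and which policy drove it.
    evidence_log.append({
        "policy_id": policy.policy_id,
        "topic": topic,
        "confidence": confidence,
        "decision": decision,
    })
    return decision
```

Because each log entry carries the policy ID, production evidence can be grouped by policy and fed back into the next policy review.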

What good AI product managers do differently

Strong AI product managers do not think of launch as the end of the work. They think of launch as the beginning of measurement. They ask questions like:

- Is the product behaving the way we intended?

- Are users trusting the system for the right reasons?

- Are we seeing drift, failure, or unexpected override patterns?

- Do we know what happened when the system was wrong?

That is what it means to manage AI products responsibly. It is not enough to ship something that works in testing. You have to build something that can be trusted in production.
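Unexpected override patterns are one of the easier signals to measure. The sketch below tracks how often humans override the system over a rolling window and flags when the rate crosses a limit. The class name `OverrideMonitor`, the window size, and the alert rate are hypothetical choices for illustration, not recommended values.

```python
from collections import deque


class OverrideMonitor:
    """Track the share of recent AI outputs that a human overrode."""

    def __init__(self, window_size: int = 100, alert_rate: float = 0.2):
        # deque with maxlen drops the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window_size)
        self.alert_rate = alert_rate

    def record(self, overridden: bool) -> None:
        self.outcomes.append(overridden)

    def override_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Only alert on a full window so a few early overrides do not trigger it.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.override_rate() > self.alert_rate
```

A rising override rate does not say why users disagree with the system, but it tells the team where to start looking.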

```mermaid
stateDiagram-v2
    direction LR
    [*] --> Define
    Define --> Build
    Build --> Test
    Test --> Launch
    Launch --> Monitor
    Monitor --> Adjust
    Adjust --> Monitor
    Adjust --> Build : Major Refinement
```

The real test

The real test of an AI product manager is simple: If the system makes a mistake tomorrow, can you explain exactly what happened, prove why it happened, and stop it from happening again?

If the answer is no, the product is not yet governable. If the answer is yes, then you have built something more durable than a feature. You have built a system that can operate safely in the real world.

```mermaid
graph LR
    PM[PM: Behavior & Trade-offs]
    ENG[Engineering: Implementation]
    RISK[Risk/Legal: Constraints]
    OPS[Ops: Monitoring & Response]
    PM --- ENG
    PM --- RISK
    PM --- OPS
    ENG --- OPS
```

| Area | Traditional PM | AI PM |
|---|---|---|
| Scope | Features and roadmap | Features, behavior, and escalation |
| Launch | Release to users | Release plus monitoring and safety checks |
| Quality | User satisfaction | Accuracy, trust, drift, and override behavior |
| Risk | Product bugs | Product bugs plus model failure and misuse |
| Governance | Optional / late-stage | Built into the product from the start |

How does this scale?

Scaling AI means moving from fragile prototypes to reliable, largely autonomous operations. That is only possible when governance is treated as a core design pillar.

Final Proof Point: Every autonomous decision in your system must have a causal trace back to a design-time policy. Without this, your product is a liability, not an asset.
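A simple audit can check whether that trace exists. The sketch below scans decision records and returns the ones that do not point to a known policy. The `DecisionRecord` fields, the policy IDs, and the function `find_untraceable` are hypothetical names used only for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    action: str
    policy_id: Optional[str]  # None means no policy was recorded


def find_untraceable(records: list, known_policy_ids: set) -> list:
    """Return IDs of decisions that do not trace back to a known policy."""
    return [
        record.decision_id
        for record in records
        if record.policy_id not in known_policy_ids
    ]


# Illustrative data; the IDs are made up for the example.
KNOWN_POLICIES = {"POL-001", "POL-002"}
RECORDS = [
    DecisionRecord("d1", "auto_reply", "POL-001"),
    DecisionRecord("d2", "auto_refund", None),
    DecisionRecord("d3", "auto_reply", "POL-999"),
]
```

Run against the sample data, `find_untraceable(RECORDS, KNOWN_POLICIES)` returns `["d2", "d3"]`: one decision with no policy recorded and one pointing at a policy that is not in the registry.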

