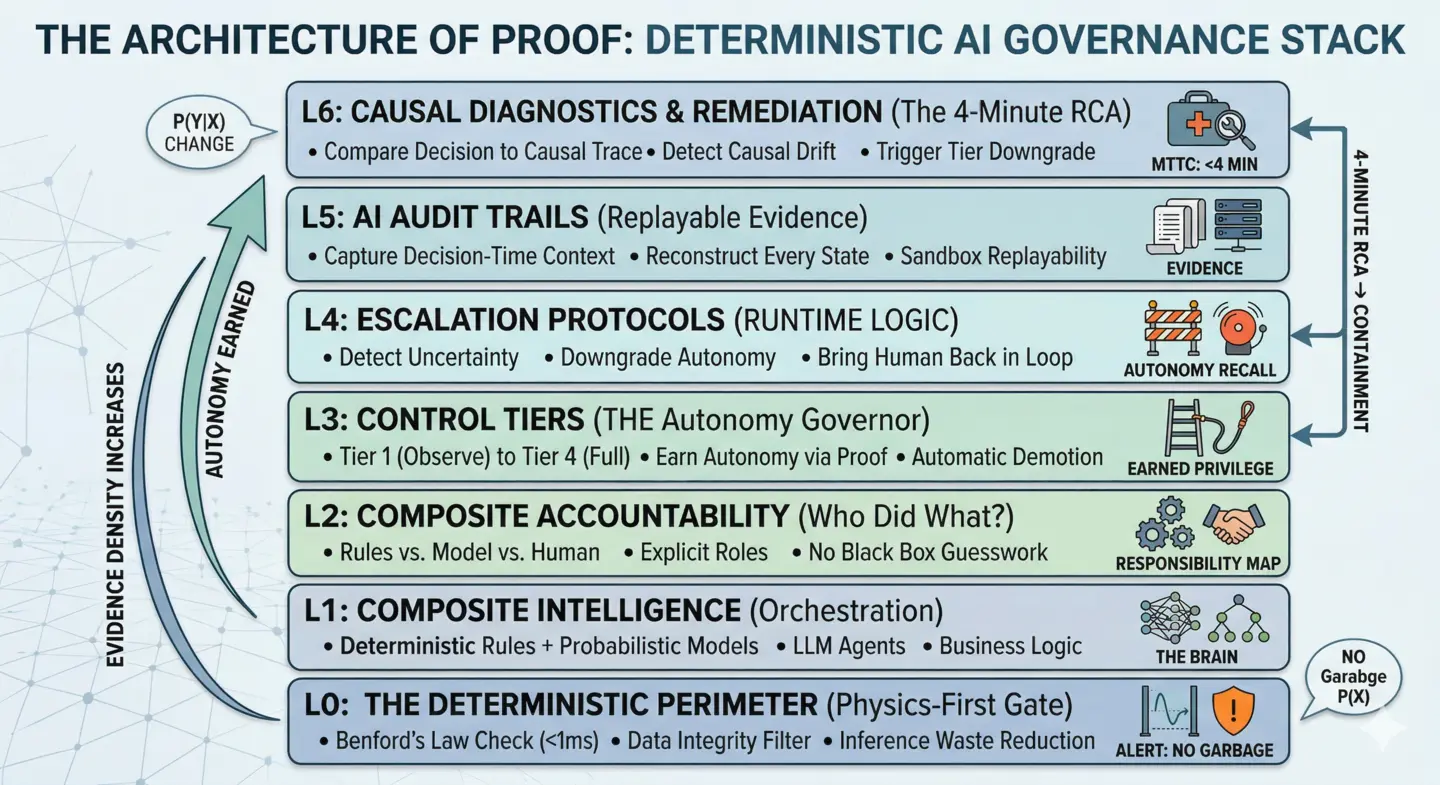

The Architecture of Proof AI Governance Framework

Welcome to the Architecture of Proof, a comprehensive AI governance lifecycle designed for the age of probabilistic systems.

This framework moves beyond "AI safety" as a vague concept and into AI control as a systems architecture. It is designed for high-stakes domains—finance, healthcare, cybersecurity, and industrial automation—where "close enough" isn't an option.

The Evolution: 4-Stage AI Maturity Model

The Architecture of Proof isn't just a stack; it’s a journey from Probabilistic Uncertainty to Deterministic Evidence. Most organizations are stuck in Stage 1 or 2. Moving between stages requires Evidence-Based Promotion.

Accuracy-focused, black-box, no audit trail. Typical of sandbox environments where speed outpaces evidence.

Can reconstruct exactly what happened using basic logging and Replayability.

Uses Control Tiers and automated escalation to govern the AI's "leash length."

The Components: The 7-Layer Architecture Stack

To move up the maturity curve, you must operationalize the core architectural layers.

Layer 0: The Deterministic Perimeter

Physics-First Pre-Inference Validation

Before a single probabilistic model is invoked, the Architecture of Proof demands a "Physics Check." Deterministic tests such as Benford’s Law flag data whose digit distribution looks synthetic or tampered with, so it can be filtered out at the perimeter (<1ms). This reduces Inference Waste and helps ensure your models only process high-fidelity, natural data.
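As a rough illustration of such a perimeter check, the sketch below compares the leading digits of a batch of values against Benford's Law with a chi-square statistic. The function names and the 5% significance threshold are assumptions made for this example, not part of the framework. Benford's Law only suits data that spans several orders of magnitude, and a failed check is a signal to investigate, not proof of tampering.

```python
import math
from collections import Counter

# Expected first-digit probabilities under Benford's Law: P(d) = log10(1 + 1/d)
BENFORD = {d: math.log10(1 + 1 / d) for d in range(1, 10)}

# Chi-square critical value for 8 degrees of freedom at alpha = 0.05.
# Choosing this threshold is an assumption of the sketch.
CHI2_CRIT_8DF_05 = 15.507


def first_digit(value: float) -> int:
    """Return the leading non-zero digit of a non-zero number."""
    # Scientific notation always starts with the leading digit, e.g. '3.45e-03'.
    return int(f"{abs(value):.15e}"[0])


def benford_chi_square(values) -> float:
    """Chi-square distance between observed first digits and Benford's Law."""
    digits = [first_digit(v) for v in values if v != 0]
    n = len(digits)
    if n == 0:
        raise ValueError("need at least one non-zero value")
    counts = Counter(digits)
    return sum((counts.get(d, 0) - n * p) ** 2 / (n * p) for d, p in BENFORD.items())


def passes_perimeter(values) -> bool:
    """True if the batch is statistically consistent with Benford's Law."""
    return benford_chi_square(values) <= CHI2_CRIT_8DF_05
```

A batch that fails `passes_perimeter` would be held back before any model is invoked; in practice the batch needs enough values for the chi-square approximation to be meaningful.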

Layer 1: Composite Intelligence

Orchestrating Rules, Models, and Humans

This is the core architectural pattern of the framework: choreographing deterministic rules, statistical models, and human judgment into a single high-fidelity system.
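One way to picture this pattern is a decision function that tries deterministic rules first, then a statistical model, and hands anything uncertain to a human queue. The sketch below is illustrative only: the `Decision` type, the `hard_limit` and `confidence_floor` parameters, and the transaction scenario are all assumptions, not definitions from the framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    outcome: str     # "approve", "reject", or "needs_review"
    decided_by: str  # "rule", "model", or "human_queue"
    reason: str


def decide(
    amount: float,
    score_fn: Callable[[float], float],  # hypothetical model: P(transaction is legitimate)
    hard_limit: float = 10_000.0,
    confidence_floor: float = 0.9,
) -> Decision:
    # 1. Deterministic rules run first and cannot be overridden by the model.
    if amount <= 0:
        return Decision("reject", "rule", "non-positive amount")
    if amount > hard_limit:
        return Decision("needs_review", "human_queue", "amount above hard limit")

    # 2. The statistical model decides only when it is confident either way.
    p = score_fn(amount)
    if p >= confidence_floor:
        return Decision("approve", "model", f"score {p:.2f}")
    if p <= 1 - confidence_floor:
        return Decision("reject", "model", f"score {p:.2f}")

    # 3. Everything in between goes to human judgment.
    return Decision("needs_review", "human_queue", f"uncertain score {p:.2f}")
```

Recording `decided_by` on every decision is what later makes it possible to hold each actor accountable separately.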

Layer 2: Composite Accountability

Proving Each Part Did Its Job

Since intelligence is composite, accountability must be too. This layer defines how to set contracts and metrics for every actor in your AI system to ensure defensible decisions.

Layer 3: Control Tiers

Designing AI Autonomy Levels

Standardized levels of AI autonomy, organized into 4 Control Tiers. Define exactly when your AI is allowed to act, when it must ask for help, and when it must stop to maintain human control.
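A minimal sketch of how four tiers might be encoded follows. The tier names, the returned action strings, and the `min_confidence` parameter are hypothetical placeholders chosen for this example; the framework's own tier definitions are not reproduced here.

```python
from enum import IntEnum


class ControlTier(IntEnum):
    # Hypothetical names: a higher value means a longer "leash".
    HALTED = 0           # AI must stop
    HUMAN_APPROVAL = 1   # AI proposes, a human approves every action
    HUMAN_OVERSIGHT = 2  # AI acts, a human is notified and can intervene
    AUTONOMOUS = 3       # AI acts on its own within its limits


def allowed_action(tier: ControlTier, confidence: float, min_confidence: float = 0.9) -> str:
    """Map the current tier and model confidence to what the AI may do."""
    if tier is ControlTier.HALTED:
        return "stop"
    # Low confidence forces a request for help regardless of tier.
    if tier is ControlTier.HUMAN_APPROVAL or confidence < min_confidence:
        return "ask_human"
    if tier is ControlTier.HUMAN_OVERSIGHT:
        return "act_and_notify"
    return "act"


def downgrade(tier: ControlTier) -> ControlTier:
    """Shorten the leash by one tier, never going below HALTED."""
    return ControlTier(max(tier - 1, ControlTier.HALTED))
```

Using an ordered enum keeps promotion and downgrade explicit, so a change in autonomy is a single recorded state transition instead of a scattered set of flags.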

Layer 4: Escalation Protocols

How AI Systems Ask for Help

The runtime safety layer. These protocols turn anomalies into structured, safe responses, ensuring your AI knows how to escalate to humans before it breaks.
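To make "structured, safe responses" concrete, the sketch below turns two kinds of anomaly (an input outside its validated range, and low model confidence) into an escalation record that a human can act on. The `Escalation` fields, the severity labels, and the action names are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class Escalation:
    severity: str  # hypothetical labels: "medium" or "high"
    action: str    # what the system does while waiting for a human
    reason: str
    evidence: Dict[str, float] = field(default_factory=dict)


def evaluate(
    features: Dict[str, float],
    bounds: Dict[str, Tuple[float, float]],  # validated (min, max) per feature
    confidence: float,
    confidence_floor: float = 0.8,
) -> Optional[Escalation]:
    """Return an Escalation if the request is anomalous, else None."""
    out_of_range = {
        name: value
        for name, value in features.items()
        if name in bounds and not (bounds[name][0] <= value <= bounds[name][1])
    }
    if out_of_range:
        return Escalation(
            "high", "halt_and_page_on_call", "input outside validated range", out_of_range
        )
    if confidence < confidence_floor:
        return Escalation(
            "medium", "route_to_reviewer", "model confidence below floor",
            {"confidence": confidence},
        )
    return None
```

Attaching the offending values as `evidence` means the reviewer sees why the system asked for help without having to reconstruct the request.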

Layer 5: Audit Trails for AI

Building Replayable Systems

The foundation of proof. Capture decision-time context to enable exact Replayability. Turn your system from a Black Box into a Glass Box.
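The following sketch shows one possible shape for a replayable audit record: it stores the model version, inputs, parameters, and output captured at decision time, plus a SHA-256 digest so tampering can be detected. The record layout and function names are assumptions, and exact replay as shown only works when the model function is deterministic for a given version and input.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Hypothetical model signature: (inputs, params) -> numeric output
ModelFn = Callable[[Dict[str, Any], Dict[str, Any]], float]


def _digest(model_version: str, inputs: dict, params: dict, output: float) -> str:
    """Hash the decision-time context in a canonical (sorted-key) JSON form."""
    payload = json.dumps(
        {"model_version": model_version, "inputs": inputs, "params": params, "output": output},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class AuditRecord:
    model_version: str
    inputs: dict
    params: dict
    output: float
    digest: str


def record_decision(model_fn: ModelFn, model_version: str, inputs: dict, params: dict) -> AuditRecord:
    """Run the model and capture everything needed to replay the decision."""
    output = model_fn(inputs, params)
    return AuditRecord(
        model_version, dict(inputs), dict(params), output,
        _digest(model_version, inputs, params, output),
    )


def replay(record: AuditRecord, model_fn: ModelFn) -> bool:
    """True if the record is intact and re-running the model reproduces the output."""
    if _digest(record.model_version, record.inputs, record.params, record.output) != record.digest:
        return False  # the record was altered after it was written
    return model_fn(record.inputs, record.params) == record.output
```

In a real system the record would also need to pin non-deterministic context such as random seeds, retrieved documents, or feature-store snapshots, since any of these can break exact replay.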

Layer 6: Causal Diagnostics & Remediation

The Logic of Recovery

The objective here is to move from "knowing what happened" to "fixing why it happened" during the AI Incident Golden Hour.

- The Objective: Diagnose and remediate errors rapidly using your Audit Trails.

- Key Tool: Counterfactual Testing ("What if the input had been X?").

- Governance Outcome: 4-Minute Root Cause Analysis (RCA).

- Metric: Mean Time to Containment (MTTC).
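Counterfactual Testing can be sketched as re-running a recorded decision with selected inputs changed and checking whether the outcome flips. The helper below is a minimal illustration; `counterfactual`, its return fields, and the loan-style example are assumptions, and it presumes the decision function is deterministic.

```python
from typing import Any, Callable, Dict


def counterfactual(
    decide_fn: Callable[[Dict[str, Any]], Any],
    recorded_inputs: Dict[str, Any],
    changes: Dict[str, Any],
) -> Dict[str, Any]:
    """Re-run a recorded decision with some inputs replaced ("what if X?")."""
    baseline = decide_fn(dict(recorded_inputs))
    altered = decide_fn({**recorded_inputs, **changes})
    return {
        "baseline": baseline,
        "counterfactual": altered,
        "flipped": baseline != altered,   # did the change alter the outcome?
        "changed_fields": sorted(changes),
    }


# Usage with a hypothetical rule: approve if income covers three payments.
def approve(inputs: Dict[str, Any]) -> str:
    return "approve" if inputs["income"] >= 3 * inputs["payment"] else "reject"


result = counterfactual(approve, {"income": 3000, "payment": 1200}, {"income": 4000})
```

If changing a single field flips the outcome, that field is a strong candidate for the root cause, which is what narrows the diagnosis quickly.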

By comparing real-time decision data against historical Causal Traces, the system can identify Causal Drift (where the "rules of the world" have shifted) and trigger an automated Tier Downgrade before a collapse in model accuracy impacts the business.
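As a simplified stand-in for this comparison, the sketch below estimates the relationship between one input and the outcome with a least-squares slope, once on historical data and once on recent data, and lowers the tier by one step when the two differ by more than a tolerance. This is an assumed proxy for illustration only: the framework's Causal Traces are not specified here, a slope comparison is a correlational check and not a causal analysis, and the `tolerance` value and integer tier encoding are hypothetical.

```python
from typing import Sequence


def slope(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Ordinary least-squares slope of y on x."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx


def relationship_shifted(hist_x, hist_y, recent_x, recent_y, tolerance: float = 0.5) -> bool:
    """True if the input-outcome relationship moved by more than `tolerance`."""
    return abs(slope(recent_x, recent_y) - slope(hist_x, hist_y)) > tolerance


def next_tier(current_tier: int, drifted: bool) -> int:
    """Downgrade one tier on drift; 0 is assumed to be the most restrictive tier."""
    return max(current_tier - 1, 0) if drifted else current_tier
```

The design point is that the downgrade is triggered by a change in the relationship itself, which can show up before accuracy metrics (which need ground-truth labels) have had time to degrade.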

Why implement this Architecture?

By structuring your AI systems through these components, you transition from Black Box operations to Glass Box governance. You gain:

- Traceability: Every decision can be replayed and justified.

- Reliability: Physics and rules provide a safety net that models cannot bypass.

- Accountability: Clear roles for humans and machines prevent "the AI did it" excuses.

This is the standard for building verifiable AI systems.
