AI Escalation Protocols: How AI Systems Ask for Help | AI Incident Response

This post is part of the Autonomy and Escalation pillar.

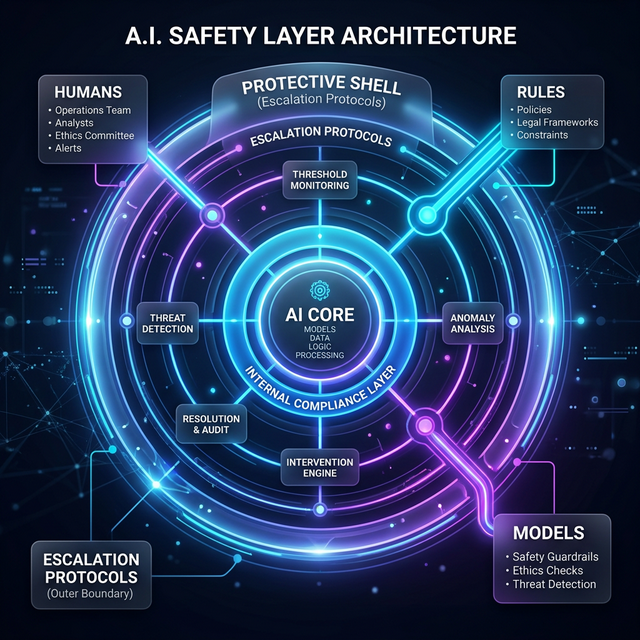

This post defines a framework for AI escalation protocols: the runtime logic that detects trouble, downgrades autonomy, and brings humans back into the loop before damage occurs. It relies on the same logs and control tiers that underpin your audit trails.

The product isn't the model. The product is the choreography—and that choreography must include a plan for when things go wrong.

Safety Through Escalation

In the Control Tiers model, we assigned AI different levels of autonomy. Escalation protocols are the runtime behavior that sits on top of those tiers.

Without explicit protocols, every anomaly becomes an ad-hoc fire drill. With them, the system behaves more like a responsible colleague: it raises its hand when it’s out of its depth.

How do AI systems ask for help?

For any AI-driven workflow, you should be able to answer:

- What are we watching? (Triggers)

- What do we do first? (Automatic response)

- Who do we tell? (Escalation path)

- What’s in the packet? (Context)

The 4 Questions of Escalation

| Question | Focus | Goal |
|---|---|---|
| What are we watching? | Triggers | Real-time anomaly detection |
| What do we do first? | Auto-Response | Immediate risk mitigation (Safe Mode) |
| Who do we tell? | Routing | Mapping anomalies to clear owners |
| What’s in the packet? | Context | Enabling efficient human intervention |

1. What are we watching? (Defining Triggers)

Start by making a short list of triggers that should cause the system to pause, downgrade, or escalate.

- Data quality triggers: Spikes in missing fields or broken invariants.

- Model behavior triggers: Performance drops or calibration drift.

- Rule violation triggers: Sudden conflicts between rules and model outputs.
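As a rough illustration, the three trigger categories above could be declared as data and checked against live metrics. This is a minimal sketch, not a reference implementation: the metric names (`missing_field_rate`, `calibration_error`, `rule_model_conflict_rate`) and every threshold are hypothetical placeholders that you would replace with values from your own workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trigger:
    name: str         # hypothetical metric name
    category: str     # "data_quality", "model_behavior", or "rule_violation"
    threshold: float  # illustrative value only; tune per workflow

    def fired(self, observed: float) -> bool:
        # A trigger fires when the observed metric exceeds its threshold.
        return observed > self.threshold


# A deliberately short list, one per category from the text (assumed values).
TRIGGERS = [
    Trigger("missing_field_rate", "data_quality", 0.05),
    Trigger("calibration_error", "model_behavior", 0.10),
    Trigger("rule_model_conflict_rate", "rule_violation", 0.02),
]


def evaluate(metrics: dict[str, float]) -> list[Trigger]:
    """Return every trigger whose metric is present and over threshold."""
    return [t for t in TRIGGERS if t.name in metrics and t.fired(metrics[t.name])]


fired = evaluate({"missing_field_rate": 0.12, "calibration_error": 0.04})
print([t.name for t in fired])
```

Keeping triggers as data rather than scattered `if` statements makes the "short list" reviewable by the people who own the workflow.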

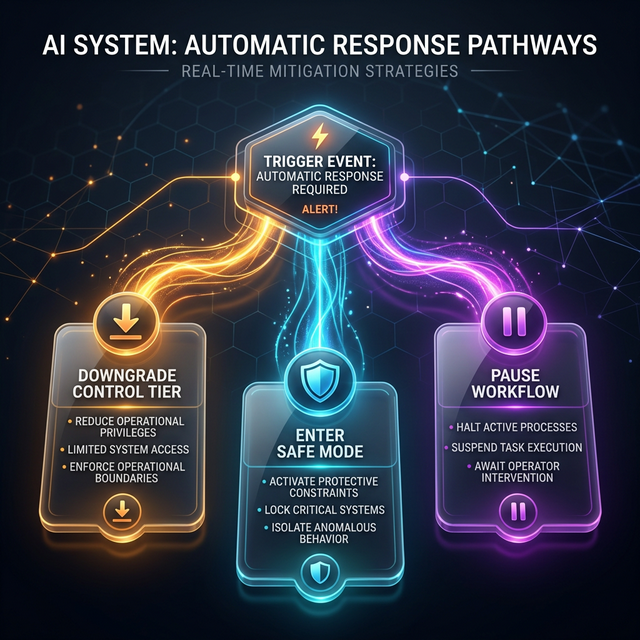

2. What do we do first? (Automatic Responses)

When a trigger fires, the system should have pre-decided moves. The key: the system doesn’t wait for a committee meeting to behave more safely.

Typical patterns include:

- Downgrade the Control Tier: Switch to “recommend only.”

- Enter “Safe Mode”: Stricter limits and more conservative triage.

- Pause Workflow: Stop active processes until human review.
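One way to make these moves "pre-decided" is a lookup from trigger category to target tier, applied so that the system can only step down on its own. The sketch below assumes a hypothetical four-level tier ordering and a hypothetical category-to-tier mapping; neither comes from the Control Tiers model itself, so adjust both to match your own tiers.

```python
from enum import IntEnum


class ControlTier(IntEnum):
    # Hypothetical ordering: a lower value means less autonomy.
    PAUSED = 0
    RECOMMEND_ONLY = 1
    SAFE_MODE = 2
    FULL_AUTONOMY = 3


# Pre-decided first move per trigger category (illustrative assumption).
FIRST_MOVE = {
    "data_quality": ControlTier.PAUSED,
    "model_behavior": ControlTier.RECOMMEND_ONLY,
    "rule_violation": ControlTier.SAFE_MODE,
}


def respond(current: ControlTier, category: str) -> ControlTier:
    """Return the tier to run at after a trigger in `category` fires."""
    # Unknown categories fall back to the most conservative option.
    target = FIRST_MOVE.get(category, ControlTier.PAUSED)
    # Never raise autonomy automatically; only a human restores a tier.
    return min(current, target)


print(respond(ControlTier.FULL_AUTONOMY, "model_behavior").name)
```

Taking the minimum of the current and target tier means repeated triggers can stack downgrades but never undo one.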

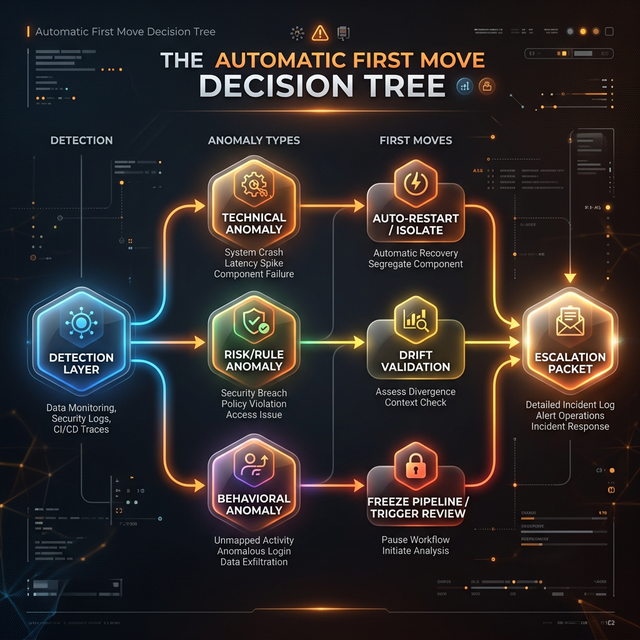

Decision Tree Analysis

For a more precise mapping of anomaly types to actions, we use a structured decision tree.

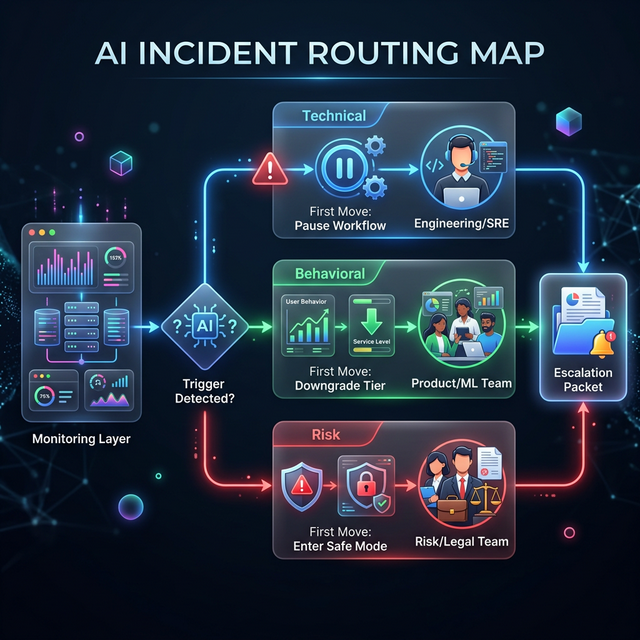

3. Who do we tell? (AI Incident Routing)

Escalation is a routing problem. To avoid "CC everyone and hope," the system must map specific anomaly types to clear owners.

- Technical Anomalies → Engineering / SRE.

- Behavioral Anomalies → Product / ML Team.

- Risk & Compliance → Risk / Legal Team.
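The mapping above can be expressed as a small routing table with exactly one primary owner per anomaly type. This is a sketch under assumptions: the queue names and the fallback owner are hypothetical, and a real system would route to whatever paging or ticketing targets you already use.

```python
# Anomaly type -> single primary owner (queue names are hypothetical).
ROUTES = {
    "technical": "engineering-sre",
    "behavioral": "product-ml",
    "risk_compliance": "risk-legal",
}

# Assumption: unclassified anomalies go to one named owner, not to everyone.
DEFAULT_OWNER = "engineering-sre"


def route(anomaly_type: str) -> str:
    """Return the one team that owns this anomaly type."""
    return ROUTES.get(anomaly_type, DEFAULT_OWNER)


print(route("behavioral"))
```

Returning a single owner, even for unknown types, is what keeps routing from degrading into "CC everyone and hope."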

4. What’s in the packet? (Escalation Payload)

When the system asks for help, it should send a packet of context, not a vague alert. You’re aiming for something a human can open and say, “I know where to start.”
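A packet like this might be modeled as a small structured record that serializes to JSON. The field names below are assumptions chosen for illustration (the source does not prescribe a schema); the idea is simply that the packet says what fired, what the system already did, who owns it, and where the relevant audit log entries are.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class EscalationPacket:
    # All field names are hypothetical; adapt them to your own schema.
    workflow: str
    trigger: str
    observed_value: float
    threshold: float
    action_taken: str  # the automatic first move already applied
    owner: str         # the single team this was routed to
    audit_log_refs: list[str] = field(default_factory=list)
    raised_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


packet = EscalationPacket(
    workflow="claims-triage",  # example workflow name, not from the source
    trigger="missing_field_rate",
    observed_value=0.12,
    threshold=0.05,
    action_taken="downgraded to recommend-only",
    owner="engineering-sre",
    audit_log_refs=["log-entry-001"],
)
print(packet.to_json())
```

Including the observed value next to the threshold lets the reader judge severity without opening a dashboard first.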

How do escalation protocols connect to your AI architecture?

Escalation protocols are the connective tissue between the other layers of the Architecture of Proof:

- Composite Intelligence: Escalation defines when control shifts between models, rules, and humans.

- Composite Accountability: Escalations become test cases—did rules see the issue, did models behave per contract, did humans respond as expected?

- Control Tiers: Escalation is how you move between tiers at runtime. This structured request for help is the key to maintaining controlled autonomy in high-stakes environments.

How to get started with escalation protocols

For each AI-influenced workflow, ask:

- What 3–5 triggers would make you want to slow down or stop automation?

- What is the automatic first move when a trigger fires?

- Who is the primary human this should go to?

- What must be in the packet for them to be effective?

- How will you test this flow before an incident?

Your system should be able to answer each of these questions, and it should know how to ask for help.

Related in this series

- Designing for AI Autonomy Levels: See how escalation protocols enable transitions between control tiers.

- How to design AI Audit Trails: Learn how audit logs provide the necessary context in an escalation packet.
