Start Here: A Practical Guide to AI Governance Frameworks | Architecture of Proof

If you landed here from a search, a LinkedIn post, or a recommendation, this page will orient you in under five minutes.

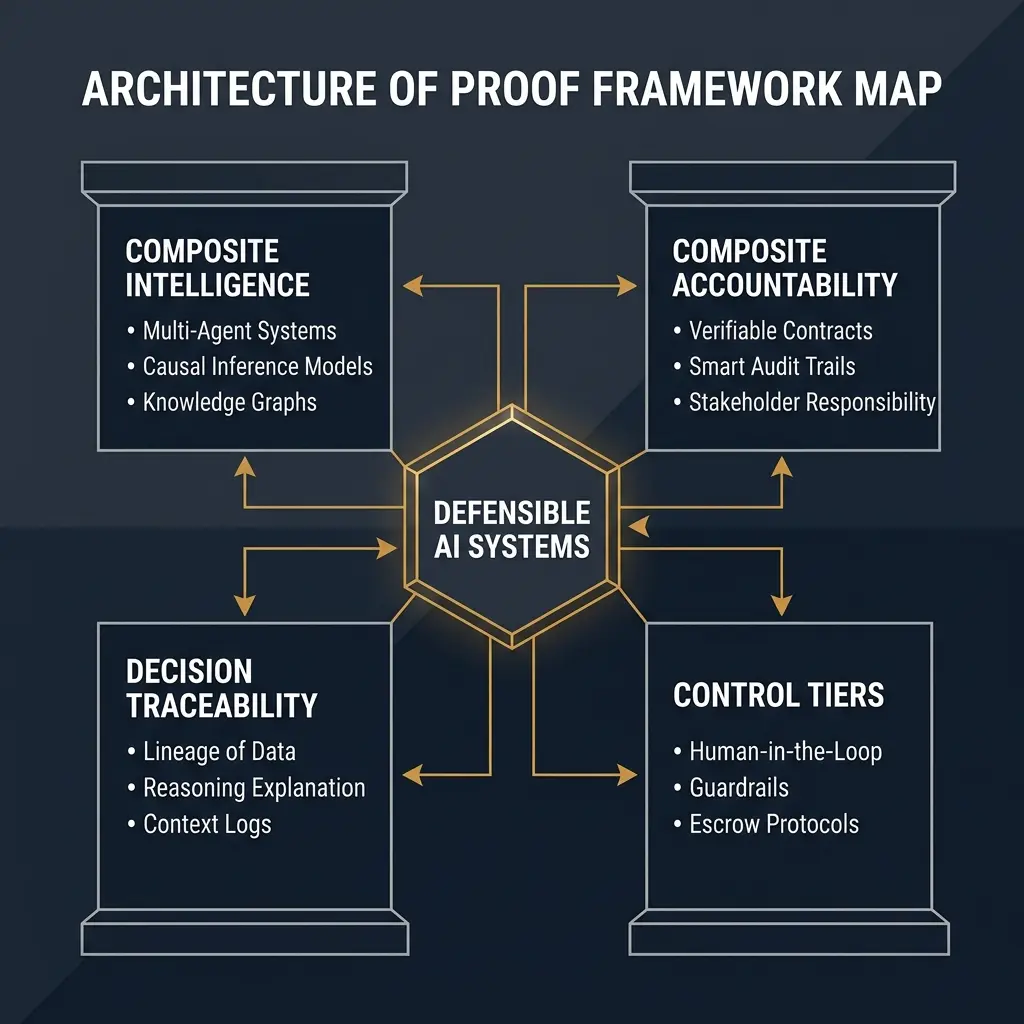

The Architecture of Proof is a framework for building AI systems that can be explained, audited, and safely placed into production. Not just systems that score well on benchmarks.

Most AI governance content falls into one of two failure modes: too abstract to act on, or too tactical to generalize. This site aims to sit between them: concrete enough to implement, general enough to reuse across systems.

Scale Safely

How do I reduce liability, lower inference costs, and earn the right to scale autonomy?

Build Proof

How do I implement Causal Traces, Benford Perimeters, and Replayable Audit Trails?

What this site is about

Modern AI systems — especially in regulated industries — face a problem that model accuracy doesn't solve.

A model can be accurate and still be ungovernable. It can perform well in testing and fail silently in production. It can optimize a metric while violating a policy that nobody encoded. And when it fails, the most common response is: "We're not sure why that happened."

That is not governance. It is hope with a dashboard.

The Architecture of Proof is built on a different premise: a system is only trustworthy if it can prove what it did, why it did it, and that it did it within the rules you set. Every component — rules, models, and humans — must have a verifiable contract. Every decision must leave a reconstructable trace.

The site covers the frameworks, patterns, and tools for building systems that meet that standard.

Who this is for

This site is built for people who are operating AI systems, not just evaluating them.

- Technical leads who need to design AI architectures that survive regulatory scrutiny.

- AI product teams building autonomous or semi-autonomous workflows in lending, fraud, claims, healthcare, or operations.

- Risk and governance functions who need to write policies that actually connect to system behavior.

- Engineering managers who need to explain to senior leadership why an AI decision was made — and prove it.

If you are a researcher building new models, this site is probably not for you. If you are deploying models into a system that affects real people and real outcomes, read on.

The core vocabulary

Five terms appear throughout this site. It is worth knowing them before reading anything else.

Composite Intelligence: An AI system where rules, models, and humans each play a defined role in a single decision flow, rather than a single model doing everything.

Composite Accountability: The principle that in a composite system, every actor must have a verifiable contract, and the system must be able to prove whether each part kept its contract.

Control Tiers: Predefined levels of autonomy (Tier 0: Observe → Tier 3: Human Only), each with explicit permissions, preconditions, and escape routes.

Decision Traceability: The ability to reconstruct a decision after the fact, including which inputs were used, which rules fired, which model scored, and who intervened.

Causal Trace: A log that stores the reasoning path of a decision separately from raw data, so you can diagnose failures without re-running the whole system.
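To make the last two terms concrete, here is a minimal Python sketch of a trace record. It is an illustration under stated assumptions, not this site's implementation: the names `CausalTrace`, `TraceStep`, and `digest`, the actor labels, and the sample lending values are all hypothetical. The reasoning path is kept apart from the raw data by storing only a SHA-256 fingerprint of the inputs.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any


def digest(raw_inputs: dict[str, Any]) -> str:
    """Stable SHA-256 fingerprint of the raw inputs (keys sorted for determinism)."""
    payload = json.dumps(raw_inputs, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class TraceStep:
    actor: str    # hypothetical actor kinds: "rule", "model", or "human"
    name: str     # which rule fired, which model scored, or who intervened
    outcome: str  # what that actor concluded
    reason: str   # why, in a form a reviewer can read


@dataclass
class CausalTrace:
    decision_id: str
    input_digest: str  # refers to the raw data without copying it into the trace
    steps: list[TraceStep] = field(default_factory=list)

    def record(self, actor: str, name: str, outcome: str, reason: str) -> None:
        """Append one step of the reasoning path, in the order it happened."""
        self.steps.append(TraceStep(actor, name, outcome, reason))

    def reconstruct(self) -> list[str]:
        """Render the reasoning path without re-running rules or models."""
        return [
            f"{i}. [{s.actor}] {s.name} -> {s.outcome} ({s.reason})"
            for i, s in enumerate(self.steps, start=1)
        ]


# Illustrative values only; none of these come from a real system.
raw = {"applicant_id": "A-1001", "income": 52000, "requested": 15000}
trace = CausalTrace(decision_id="D-1", input_digest=digest(raw))
trace.record("rule", "min_income", "pass", "income 52000 >= 30000")
trace.record("model", "risk_score_v1", "0.82", "above review threshold 0.80")
trace.record("human", "analyst_17", "approve", "manual review of flagged score")

for line in trace.reconstruct():
    print(line)
```

Storing a digest rather than the inputs themselves is one possible design choice: the trace stays small and readable, and the fingerprint can later be compared against the archived raw record to confirm the two belong together.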

The main framework in plain English

The Architecture of Proof is organized around four questions every production AI system must be able to answer:

- Who did what? — Composite Intelligence: rules, models, and humans have defined roles.

- Can you prove it? — Composite Accountability: each role has a measurable contract.

- How far did the system go on its own? — Control Tiers: autonomy is assigned, not assumed.

- What happened and why? — Decision Traceability: decisions leave a reconstructable record.

Everything else on this site is an elaboration of one of these four questions.
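As a rough illustration, the four questions can be read as four queries over a single decision record. The Python sketch below is hypothetical throughout: `Decision`, `Action`, `CONTRACTS`, and `answer_four_questions` are invented for this example, and only Tier 0 (Observe) and Tier 3 (Human Only) are named in the text above, so the two middle tiers are unnamed placeholders.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable


class ControlTier(IntEnum):
    OBSERVE = 0     # named in the text
    TIER_1 = 1      # placeholder: the text does not name this tier
    TIER_2 = 2      # placeholder: the text does not name this tier
    HUMAN_ONLY = 3  # named in the text


@dataclass(frozen=True)
class Action:
    actor: str   # assumed actor kinds: "rule", "model", or "human"
    name: str
    output: str


@dataclass(frozen=True)
class Decision:
    decision_id: str
    tier: ControlTier
    actions: tuple[Action, ...]


def score_in_range(action: Action) -> bool:
    """Assumed model contract: the output parses as a score in [0, 1]."""
    try:
        return 0.0 <= float(action.output) <= 1.0
    except ValueError:
        return False


# One measurable contract per actor kind (illustrative predicates only).
CONTRACTS: dict[str, Callable[[Action], bool]] = {
    "rule": lambda a: a.output in {"pass", "fail"},
    "model": score_in_range,
    "human": lambda a: a.output in {"approve", "reject"},
}


def answer_four_questions(decision: Decision, contracts: dict) -> dict:
    """Answer the four questions from the record alone."""
    labels = [f"{a.actor}:{a.name}" for a in decision.actions]
    # An actor kind with no contract counts as unproven rather than passing.
    kept = [contracts.get(a.actor, lambda _: False)(a) for a in decision.actions]
    return {
        "who_did_what": labels,
        "can_you_prove_it": dict(zip(labels, kept)),
        "how_far_on_its_own": decision.tier.name,
        "what_happened_and_why": [f"{a.name} -> {a.output}" for a in decision.actions],
    }


decision = Decision(
    decision_id="D-2",
    tier=ControlTier.TIER_2,
    actions=(
        Action("rule", "min_income", "pass"),
        Action("model", "risk_score_v1", "1.4"),  # out of range: breaks its contract
        Action("human", "analyst_17", "approve"),
    ),
)

for question, answer in answer_four_questions(decision, CONTRACTS).items():
    print(question, "=>", answer)
```

In this sketch the model's out-of-range score shows up as a broken contract under "can_you_prove_it", while the rule and the human reviewer both pass theirs.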

90 seconds → Know your stage.

Most teams are stuck in Stage 1 forensic debt. Start here to gap-fill.

Recommended reading order

If you are new to this site, read in this order.

1. The Thesis (Why Architecture of Proof?): What problem this framework is solving and why conventional AI governance fails to address it.

2. The Foundation (Composite AI Architectures): How rules, models, and humans work together in a single decision flow.

3. Accountability (Composite Accountability): How you make each part of that system provably responsible for its role.

4. Autonomy (Control Tiers): How you design AI autonomy levels on purpose, with explicit escape routes.

5. Traceability (AI Audit Trails): How you capture enough evidence to reconstruct any decision after the fact.

6. Failure Response (AI Escalation Protocols): How systems ask for help, downgrade, and hand off to humans cleanly.

7. Maturity (The AI Maturity Model): How to assess where your system is on the path from statistical pilot to causal traceability.

The five pillars

This site is organized around five core subjects. Each has a dedicated pillar page with definitions, models, failure modes, and links to detailed posts.

- AI Accountability Architecture — Designing systems where every component can prove its role.

- Decision Traceability — Building the evidence chain that makes decisions reconstructable.

- Autonomy and Escalation — Defining how far AI systems go and when they stop themselves.

- Governance Operating Model — Translating governance policy into operational system behavior.

- Regulated AI Implementation — Applying this framework in lending, fraud, healthcare, and claims.

$2.7M Year 1 Impact

Archaeology vs. Diagnostics.

21% Inference Savings

Killing garbage requests.

95% MTTC Reduction

4 weeks to 4 minutes.

What to do next

Read in the order above, or jump to the pillar that matches your most urgent problem.

If you want a single practical starting point: download the Readiness Checklist — a one-page diagnostic for assessing whether your current AI system meets the Architecture of Proof standard.

If you have a specific question, the Glossary defines every term used on this site with precision.

Related reading

- The AI Governance Framework: The full framework overview in one place.

- The Glossary: Precise definitions for every term used on this site.
