Composite AI Architectures: Orchestrating Rules, Models, and Humans

This post is part of the AI Accountability Architecture pillar.

Learn how to build composite AI architectures that combine the strengths of deterministic rules, machine learning models, and human domain expertise.

The product isn't the model. The product is the choreography.

What are the three building blocks of composite intelligence?

Composite intelligence is built on three distinct actors: deterministic rules for compliance, statistical models for pattern recognition, and human experts for high-stakes judgment.

When you look at real AI systems in production, you see the same three actors show up over and over.

-

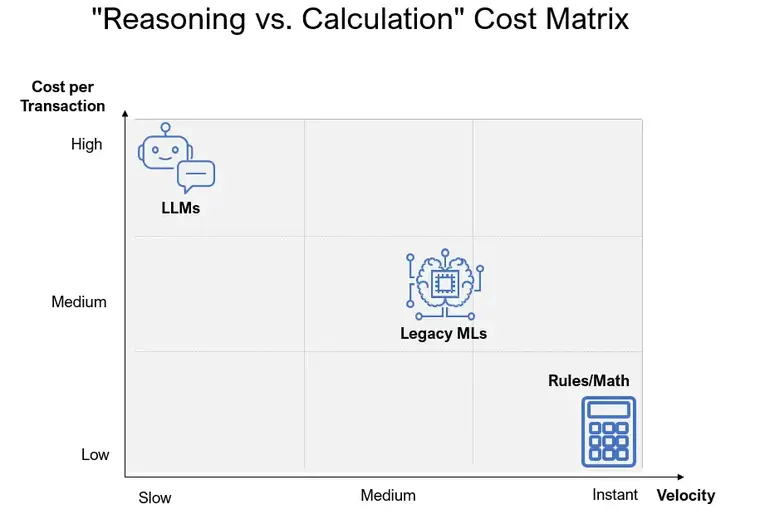

Rules (deterministic logic) Rules encode things that must always be true. They cover regulations, eligibility, SLAs, thresholds, and hard "never do X" policies. They're great at clarity and compliance and terrible at nuance or adaptation. From a PM lens, rules answer: What must this system never violate, no matter what the model thinks?

- Models (statistical learners): Models are the pattern engines. They score risk, classify behavior, rank options, summarize messy inputs, and generate text. They're great at finding signal in noise, and bad at guarantees or explaining themselves in business terms. The PM question here is: Where do we need probabilities and patterns instead of if/else logic?

- Humans (domain judgment): Humans handle edge cases, ethical trade-offs, messy context, and accountability. They're great at making sense of rare situations and terrible at doing the same thing 10,000 times a day. The PM question: Where do we still want a person to "sign" the decision and take responsibility?

Building Blocks Comparison

| Building Block | Nature | Strength | PM Question |
|---|---|---|---|
| Rules | Deterministic | Compliance & Safety | What must never be violated? |
| Models | Statistical | Pattern recognition | Where do we need probabilities? |
| Humans | Judgmental | Accountability & Context | Who should sign this decision? |

Composite systems aren't about picking a winner between these three. They're about assigning the right work to the right actor.

How do the orchestration patterns work?

Orchestration patterns define the flow of authority between rules, models, and humans, determining which actor filters, proposes, or approves a decision.

Instead of drawing one box labeled "AI," it's more useful to sketch the flow between rules, models, and humans. A few patterns show up a lot.

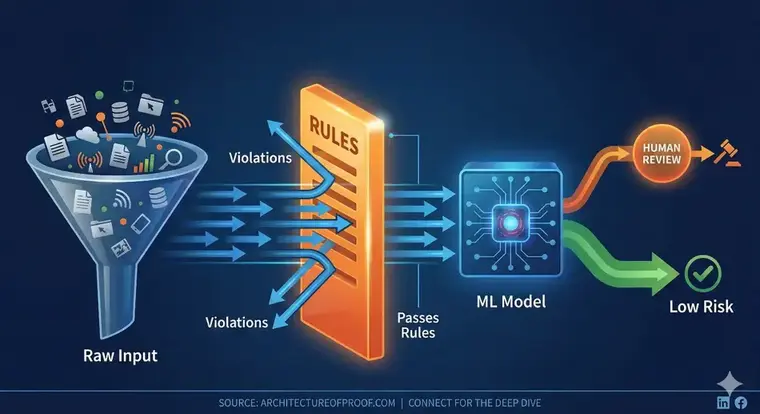

Pattern 1: Rules → Model → Human (conservative flow)

- Rules run first, enforcing hard eligibility and compliance.

- Models work on the "gray area" that passes the rules, scoring risk or predicting outcomes.

- Humans see only the riskiest or most ambiguous subset.

Think of this as: filter with rules, rank with models, escalate to humans.
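
The conservative flow can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `route` function, the dictionary-shaped case record, the stand-in rule and scoring functions, and the 0.7 review threshold are all assumptions made for the example.

```python
from typing import Callable

Case = dict  # hypothetical case record, e.g. {"country": "DE", "amount": 1200}


def route(
    case: Case,
    passes_rules: Callable[[Case], bool],
    score_risk: Callable[[Case], float],
    review_threshold: float = 0.7,  # assumed cut-off for escalation
) -> str:
    """Filter with rules, rank with a model, escalate to humans."""
    # 1. Rules run first; a hard failure never reaches the model.
    if not passes_rules(case):
        return "rejected_by_rules"
    # 2. The model scores only the cases that passed the rules.
    risk = score_risk(case)
    # 3. Only the riskiest slice is escalated to a human reviewer.
    if risk >= review_threshold:
        return "human_review"
    return "auto_approved"


# Stand-ins for a real rule set and a trained model (illustrative only).
def passes_rules(case: Case) -> bool:
    return case["country"] not in {"XX"}


def score_risk(case: Case) -> float:
    return min(case["amount"] / 10_000, 1.0)


print(route({"country": "DE", "amount": 1_200}, passes_rules, score_risk))
# prints: auto_approved
```

Passing the rule check and the scorer in as functions keeps the ordering explicit: the model is never called for a case the rules have already rejected.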

Pattern 2: Model → Rules → Human (model proposes, rules constrain)

- A model proposes an action: approve, block, route, summarize.

- Rules clip or veto the proposal if it violates policy, limits, or law.

- Humans handle exceptions or high-impact, high-risk cases.

This is "model as first draft, rules as safety net, humans as backstop."
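
One way to picture "clip or veto" is the sketch below. The `Proposal` type, the refund limit, the banned-action list, and the confidence threshold are hypothetical values chosen for illustration; a real policy layer would load them from wherever policy actually lives.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Proposal:
    action: str        # e.g. "refund", "block", "route"
    amount: float      # monetary amount attached to the action
    confidence: float  # model's self-reported confidence in [0, 1]


MAX_REFUND = 200.0                   # hypothetical policy limit
BANNED_ACTIONS = {"delete_account"}  # hypothetical "never do X" list


def apply_rules(p: Proposal) -> Tuple[Optional[Proposal], str]:
    """Veto or clip a model proposal; return (proposal, reason)."""
    if p.action in BANNED_ACTIONS:
        return None, "vetoed"
    if p.action == "refund" and p.amount > MAX_REFUND:
        return Proposal(p.action, MAX_REFUND, p.confidence), "clipped"
    return p, "unchanged"


def decide(p: Proposal, min_confidence: float = 0.8) -> str:
    """Model proposes, rules constrain, humans take the exceptions."""
    constrained, _reason = apply_rules(p)
    # Vetoed or low-confidence proposals go to a person.
    if constrained is None or constrained.confidence < min_confidence:
        return "escalate_to_human"
    return f"execute:{constrained.action}:{constrained.amount:.2f}"
```

In this sketch a clipped proposal still executes at the policy limit. Whether clipping should instead trigger a human review is a product decision the source leaves open.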

Pattern 3: Rules ↔ Model (mutual supervision)

- Rules decide when to even involve a model (e.g., "if the case falls in this band, ask the model").

- Over time, repeated model behavior that seems stable gets promoted into explicit rules.

Here, rules and models are peers: rules keep the model in a lane; the model suggests new lanes.
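
Both directions of this relationship can be sketched roughly as follows. The gray band, the segment names, and the promotion thresholds (`min_cases`, `min_agreement`) are assumptions for illustration; the source does not say how "stable" should be measured.

```python
from collections import Counter, defaultdict

GRAY_BAND = (300.0, 1_000.0)  # hypothetical band where the model is consulted


def needs_model(amount: float) -> bool:
    """Rule: only amounts inside the gray band are sent to the model."""
    low, high = GRAY_BAND
    return low <= amount < high


def promotable_rules(history, min_cases=50, min_agreement=0.98):
    """Find segments where the model's decision is stable enough to hard-code.

    `history` is an iterable of (segment, model_decision) pairs.
    Returns {segment: decision} candidates for new explicit rules.
    """
    by_segment = defaultdict(Counter)
    for segment, decision in history:
        by_segment[segment][decision] += 1

    candidates = {}
    for segment, counts in by_segment.items():
        total = sum(counts.values())
        decision, hits = counts.most_common(1)[0]
        if total >= min_cases and hits / total >= min_agreement:
            candidates[segment] = decision
    return candidates
```

The function only proposes candidates. Whether a candidate becomes a rule would presumably still be a policy decision made by a person.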

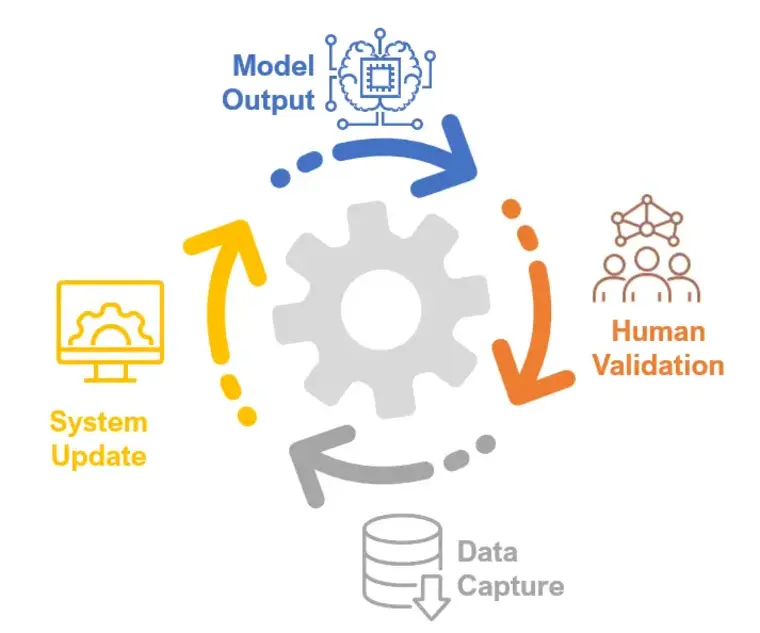

Pattern 4: Human-in-the-loop checkpoints

- At deliberate points in the flow, a human must confirm, override, or label the model's output.

- Those overrides and labels aren't just "fixes"; they are training signals and policy updates.

Well-designed systems treat humans as teachers and editors, not as unpaid safety nets.
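
A checkpoint that treats reviews as data, not just fixes, might look like the sketch below. The `Review` and `Checkpoint` classes and their fields are hypothetical; the point is that every confirmation or override is stored in a form that can later feed training or a policy review.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Review:
    case_id: str
    model_output: str
    human_output: str
    reason: Optional[str] = None  # justification for an override, if any

    @property
    def overridden(self) -> bool:
        return self.human_output != self.model_output


@dataclass
class Checkpoint:
    log: List[Review] = field(default_factory=list)

    def record(self, case_id: str, model_output: str,
               human_output: str, reason: Optional[str] = None) -> str:
        """Store the review; the human's output is what ships."""
        self.log.append(Review(case_id, model_output, human_output, reason))
        return human_output

    def training_labels(self) -> List[Tuple[str, str]]:
        """Every review, confirmed or overridden, is a labeled example."""
        return [(r.case_id, r.human_output) for r in self.log]

    def override_rate(self) -> float:
        """Share of reviews where the human changed the model's output."""
        if not self.log:
            return 0.0
        return sum(r.overridden for r in self.log) / len(self.log)
```

A rising `override_rate` is one possible signal that the model or the surrounding rules need attention.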

Five Examples of Composite AI Systems in Product Management

To make this concrete, here are examples of how product teams already orchestrate rules, models, and humans in real products.

1. Customer support copilots in CRMs

- Models: Understand intent, summarize threads, and draft responses.

- Rules: Enforce SLAs, refund policies, legal language, and entitlement by tier.

- Humans: Agents choose from suggestions, edit replies, and escalate sensitive cases.

A typical flow:

- The model reads a multi-email thread and drafts a reply.

- Rules check whether the proposed action (like a refund or credit) is allowed for this customer and region.

- If it passes, the agent sees the draft, tweaks it, and sends; if it doesn't, the agent gets a constrained template or an escalation path.

The value isn't "put an LLM in the inbox." It's a composite flow that reduces handle time without breaking policy or tone.

2. Fraud detection and transaction monitoring

- Models: Score each transaction or account for fraud risk, detect anomalies, and cluster suspicious behavior.

- Rules: Apply sanctions checks, velocity limits, device and location rules, and "always block / always allow" patterns.

- Humans: Fraud analysts investigate alerts, mark false positives, and tune strategies.

A typical flow:

- Simple rules auto-block clearly bad behavior (stolen cards on watchlists, obvious mule patterns).

- The model scores everything else; medium-risk transactions might get step-up authentication, while high-risk ones go into an analyst queue.

- Analysts clear or confirm cases; their decisions feed back into both rules (new hard patterns) and model training (better risk scores).

Here, composite intelligence keeps customer experience tolerable while still adapting to evolving fraud.

3. Lending and credit decisioning

- Models: Estimate probability of default, income stability, and cashflow health.

- Rules: Encode credit policy, regulatory constraints, product eligibility, pricing rules, and exposure caps.

- Humans: Underwriters handle complex businesses, exceptions, and policy interpretation.

A typical flow for a small-business loan:

- Rules validate basics: identity checks, required documents, banned geos/industries, hard KYC/KYB constraints.

- Models score the application: risk, affordability, maybe fraud likelihood.

- A decision engine maps score + policy into "auto-approve," "approve with conditions," or "decline."

- Certain bands—like borderline risk or high-exposure deals—always go to an underwriter, who can override with justification.

- Outcomes (repayment, delinquency, overrides) feed back to update both models and policy.

The "AI feature" here is not just the score; it's the orchestration that lets you automate low-risk cases safely while concentrating human judgment where it matters most.
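
The decision-engine step of this flow could be sketched as below. All thresholds and field names are invented for illustration and do not come from any real credit policy; `default_probability` stands in for whatever the model actually outputs.

```python
from dataclasses import dataclass


@dataclass
class Application:
    docs_complete: bool
    banned_industry: bool
    default_probability: float  # stand-in for the model's estimate
    exposure: float             # requested amount


# Hypothetical policy values, for illustration only.
AUTO_APPROVE_PD = 0.03
DECLINE_PD = 0.20
BORDERLINE_PD = (0.08, 0.20)
EXPOSURE_REFERRAL = 250_000.0


def decide(app: Application) -> str:
    # Rules validate the basics before any score is used.
    if not app.docs_complete or app.banned_industry:
        return "decline"
    # Borderline-risk and high-exposure bands always go to an underwriter.
    low, high = BORDERLINE_PD
    if app.exposure >= EXPOSURE_REFERRAL or low <= app.default_probability < high:
        return "refer_to_underwriter"
    # Score plus policy map to the remaining outcomes.
    if app.default_probability >= DECLINE_PD:
        return "decline"
    if app.default_probability <= AUTO_APPROVE_PD:
        return "auto_approve"
    return "approve_with_conditions"
```

The referral check sits ahead of the score-based outcomes so that, in this sketch, no score alone can bypass the underwriter for deals in the always-review bands.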

4. In-product recommendation systems

- Models: Drive segmentation, ranking, personalization, and demand forecasting.

- Rules: Enforce inventory limits, contract terms, regulatory constraints, geography, and business priorities.

- Humans: PMs and marketers set objectives, define guardrails, and approve campaigns.

A typical flow:

- Models predict what each user is most likely to click, buy, or succeed with.

- Rules filter out items that can't be shown (out of stock, not licensed in the region, violates customer's contract, fails a compliance check).

- The system serves a ranked, filtered list in the product.

- Humans review experiment results, adjust the optimization objective (e.g., optimize for margin vs. conversion), and add new constraints based on strategy.

Composite design ensures recommendations are not only "smart," but also feasible, compliant, and aligned with business goals.
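
As a rough sketch of "rank with a model, filter with rules": the catalog structure, the two rule checks (stock and region), and the scores below are made-up stand-ins for a real ranking model and a real rule set.

```python
def recommend(scores, catalog, region, k=3):
    """Rank items by model score, then drop any that rules forbid."""

    def allowed(item_id):
        item = catalog[item_id]
        return item["in_stock"] and region in item["regions"]

    # Highest predicted score first.
    ranked = sorted(scores, key=scores.get, reverse=True)
    # Rules filter the ranked list; the top k survivors are served.
    return [item_id for item_id in ranked if allowed(item_id)][:k]


# Hypothetical catalog and model scores.
catalog = {
    "a": {"in_stock": True, "regions": {"US", "EU"}},
    "b": {"in_stock": False, "regions": {"US"}},
    "c": {"in_stock": True, "regions": {"US"}},
    "d": {"in_stock": True, "regions": {"EU"}},
}
scores = {"a": 0.4, "b": 0.9, "c": 0.7, "d": 0.8}

print(recommend(scores, catalog, region="US"))  # ['c', 'a']
```

The highest-scoring item, `b`, never appears because it is out of stock: the model's preference does not override a hard constraint.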

5. Decision-intelligence dashboards and ops control towers

- Models: Forecast demand, detect anomalies, and optimize staffing, routing, or pricing.

- Rules: Encode safety limits, labor laws, contractual obligations, and operational constraints.

- Humans: Planners and operators choose among model-generated scenarios, override plans, and promote recurring decisions into new policy.

A typical flow:

- Models forecast the next few days of demand or risk.

- An optimizer proposes several staffing or routing plans under those forecasts.

- Rules throw out any plan that violates constraints (e.g., max overtime, SLA obligations, capacity).

- Operators see 2–3 viable options, make tradeoffs, and pick one—or adjust and save a custom variant.

- Over time, frequently chosen patterns become standardized playbooks or new rules.

The composite system here turns raw predictions into concrete, safe options humans can actually implement.
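
The "rules throw out any plan that violates constraints" step might be sketched like this. The `Plan` fields, the overtime limit, and the choice to shortlist by cost are assumptions for the example; a real control tower would encode many more constraints.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Plan:
    name: str
    overtime_hours: float
    capacity: int
    cost: float


def viable_plans(plans: List[Plan], forecast_demand: int,
                 max_overtime: float = 10.0, shortlist: int = 3) -> List[Plan]:
    """Drop plans that violate constraints; return the cheapest few."""
    feasible = [
        p for p in plans
        if p.overtime_hours <= max_overtime and p.capacity >= forecast_demand
    ]
    # Operators see a short list of options, not a single "answer".
    return sorted(feasible, key=lambda p: p.cost)[:shortlist]
```

Returning a shortlist rather than one winner leaves the final trade-off with the operator.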

How should PMs design composite systems?

Product managers design composite systems by choreographing the interaction between probabilistic AI and deterministic guardrails to ensure business outcomes are both smart and safe.

Once you start thinking in composite terms, your job as a PM shifts from "where can we add AI?" to "how do we choreograph rules, models, and humans so each is doing the work it's best at?"

For any AI-heavy flow, you can ask:

- What work must be handled by rules because it's about law, policy, or non-negotiable constraints?

- Where do we truly need models because pattern recognition or forecasting beats simple logic?

- Where do we still want humans to sign the decision, even if the model is confident?

- What are the downgrade paths when metrics go sideways—how do we move from automate → recommend → assist → manual only?

- How will we capture overrides, outcomes, and feedback so the system gets better over time instead of just accumulating exceptions?
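
The downgrade path in the list above can be made explicit as an ordered set of modes. The sketch below assumes the trigger is a human override rate with a 15% limit; both the metric and the limit are illustrative choices, since "metrics going sideways" will mean something different for each product.

```python
from enum import IntEnum


class Mode(IntEnum):
    MANUAL_ONLY = 0
    ASSIST = 1
    RECOMMEND = 2
    AUTOMATE = 3


def next_mode(current: Mode, override_rate: float,
              max_override_rate: float = 0.15) -> Mode:
    """Step down one level when humans override the system too often."""
    if override_rate > max_override_rate and current > Mode.MANUAL_ONLY:
        return Mode(current - 1)
    return current


print(next_mode(Mode.AUTOMATE, override_rate=0.30).name)  # RECOMMEND
```

This sketch only steps down. Moving back up a level is deliberately left out, on the assumption that restoring autonomy should be a reviewed decision, not an automatic one.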

AI products won't win because they picked a slightly better model or wrote a cleverer prompt. They'll win because they treat intelligence as a system—rules, models, and humans working together in one coherent flow.

For a deeper look at managing these systems, see our frameworks for Composite Accountability and AI Escalation Protocols.

Related in this series

- The 4 Tiers of AI Autonomy: Learn how to manage control within a composite system.

- Proving AI Accountability: See how to hold every actor in a composite flow responsible.
