AI Accountability Architecture: Designing Systems That Can Prove What They Did | AI Governance

Most AI systems are built to perform. Fewer are built to prove.

There is a difference. A system that performs can produce accurate outputs. A system that proves can show, after the fact, that every part of the decision-making process behaved within its intended boundaries — and identify, with precision, which part failed when something went wrong.

AI accountability architecture is the design discipline for the second category.

What AI accountability architecture means

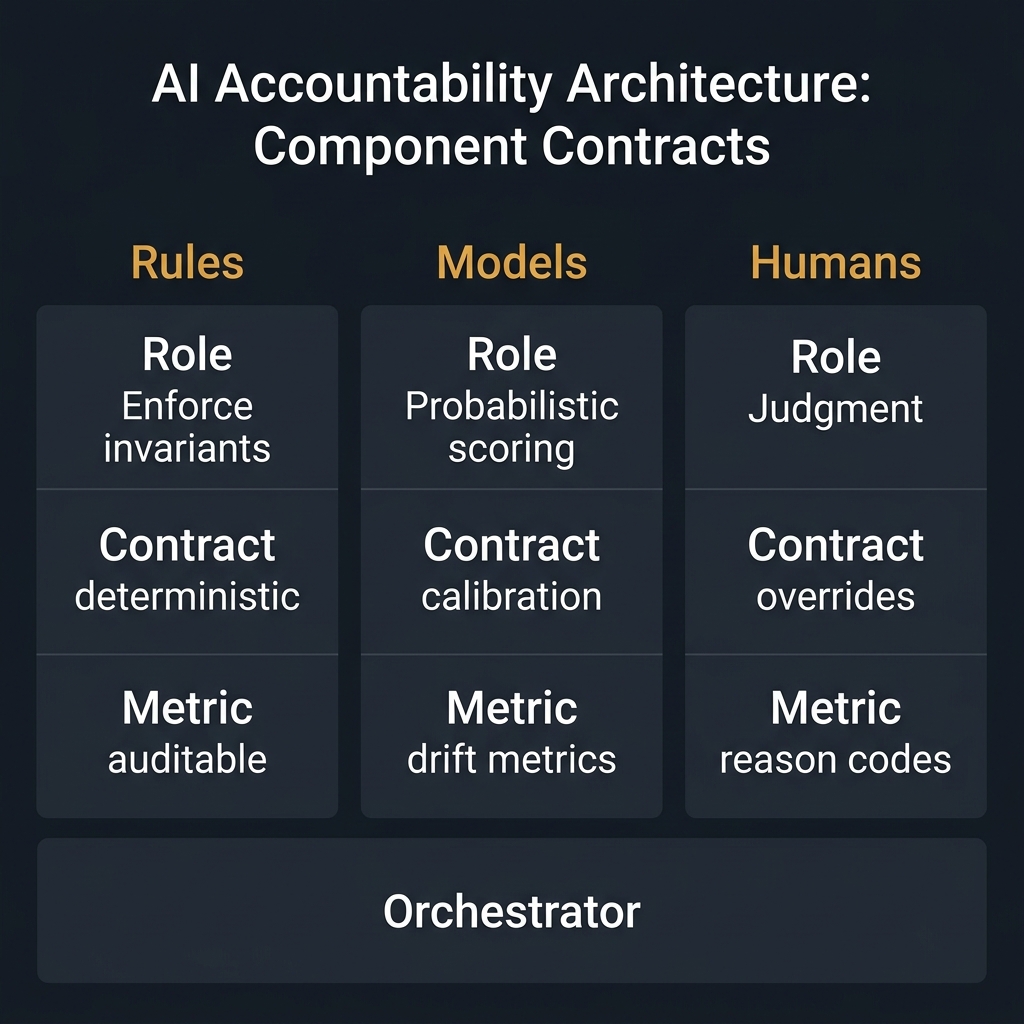

AI accountability architecture is the structural design of a system so that every component — rules, models, and humans — has an explicit role, a verifiable contract, and a measurable local metric.

It is not a monitoring dashboard. It is not a fairness audit. It is the underlying design that makes those things meaningful.

Without it, you have a system that can tell you an outcome was bad. With it, you can tell which component produced the bad outcome, why, and what needs to change.

This applies specifically to composite AI systems — systems where rules, models, and humans each play a role in a single decision flow. If your system is a single model making a single call, accountability is simpler. But most production AI systems are composite. And composite systems require composite accountability.

Why standard AI governance misses this

Most AI governance conversations focus on the wrong layer.

They address model risk (is the model accurate?), data governance (is the training data clean?), or output monitoring (are outcomes fair?). These are real concerns. They are not accountability.

Accountability requires a finer resolution. It requires being able to say:

- "The rule layer allowed this input when it should not have."

- "The model scored this case correctly given its inputs, but the inputs were wrong."

- "The human override was documented and within policy, but the threshold they were working with was misspecified."

None of that is visible from an outcome metric alone. It requires system design that makes the decision path inspectable — not just the decision.

The three structural requirements

An AI accountability architecture requires three things at every layer of the system.

1. Explicit roles

Every component must have a defined function that is distinct from the other components.

Rules enforce invariants. They define what is never allowed, what is always required, and when a human must be involved. Models provide probabilistic estimates — they rank, score, or classify within the space the rules permit. Humans handle judgment: edge cases, exceptions, and decisions that require context the system cannot encode.

If these roles overlap or blur, accountability collapses. If the model is also making eligibility decisions that should belong to rules, you cannot isolate a failure. If humans are routinely overriding the model without a structured process, you cannot separate human error from model error.

Roles must be explicit, documented, and enforced in the system design.

2. Verifiable contracts

Roles are not enough. Each component needs a contract — a testable statement of what correct behavior looks like.

For rules: "No application with fewer than 12 months in business passes to the model." That is a contract. Either the rule fired or it did not.

For models: "Given valid inputs, the model ranks higher-risk cases above lower-risk cases on held-out data at least 92% of the time." That is a contract. It can be measured.

For humans: "Reviewers who override a model recommendation must select a reason code from this list and attach supporting evidence." That is a contract. It can be audited.

Without contracts, postmortems produce opinions. With contracts, they produce findings.

3. Local metrics

Each component's contract must be connected to a metric that can be measured in production — not just in testing.

For rules: coverage (what percentage of traffic passed through critical rules), violation rate, and consistency across channels.

For models: discriminative power, calibration over time, and drift by segment.

For humans: override rate, override direction, and latency.

| Component | Contract | Local Metric |

|---|---|---|

| Rules | Catch all policy violations | Violation catch-rate, coverage |

| Models | Correct ranking and calibration | AUC, drift, calibration curves |

| Humans | Documented, policy-consistent overrides | Override rate, reason code distribution |

| Orchestrator | Correct routing decisions | Routing accuracy, SLA compliance |

When something goes wrong, the first question is not "what happened to the outcome?" It is "which metric moved, and in which component?"

The four failure modes this architecture prevents

Failure mode 1: Diffuse blame. When a system has no explicit component accountability, failures produce debates, not diagnoses. Accountability architecture ends those debates by providing a structural answer: which contract was not met, and by which part.

Failure mode 2: Silent violations. Rules and models can behave incorrectly without a visible outcome change — especially in the short term. Local metrics catch violations that aggregate metrics miss.

Failure mode 3: Governance theater. Policies that sit in documents but do not connect to system behavior. Accountability architecture requires that every policy statement has a corresponding rule, metric, or human procedure that can be measured.

Failure mode 4: Postmortem paralysis. Incidents where the team cannot reconstruct what happened because the decision path was not logged. Accountability architecture makes the decision path a first-class artifact of the system.

Common failure modes in design

Rolling a model into a rule. When a model score is used as a direct eligibility threshold (e.g., "approve if score > 700"), you have effectively made the model a rule — but without the determinism, consistency, or auditability that rules require.

Treating human overrides as exceptions. Overrides are data. If they are not logged, categorized, and analyzed, you lose the most important signal about where the system's contracts are misspecified.

Conflating accuracy with accountability. A model that is accurate is not necessarily accountable. Accuracy measures the outcome distribution. Accountability measures whether the decision process was valid, regardless of whether the outcome was good.

How to implement this

Start with the composite you already have.

For each major AI-influenced workflow:

- Write down what rules, models, and humans are each responsible for. If you cannot write it down, the roles are not explicit yet.

- Write one contract per component. A single sentence is enough to start.

- Identify the metric that would tell you, in production, whether each contract is being kept.

- Instrument the decision path: log which rules fired, which model produced which output, and what any human did.

- Build postmortem templates that ask which contract was violated — not just what went wrong.

None of this requires new infrastructure in phase one. It requires precision in design.

Related posts in this pillar

- Composite AI Architectures: How rules, models, and humans share the work of a decision.

- Composite Accountability: The detailed implementation guide for assigning contracts and metrics to each component.

- The Glass Box Manifesto: The case for moving from predictive faith to deterministic proof.

Downloadable resource

The Architecture of Proof Readiness Checklist — A one-page diagnostic for assessing whether your current AI system meets the accountability standard.

Download the Architecture of Proof Checklist

Ready to implement? Get the definitive checklist for building verifiable AI systems.