Governance Operating Model: Translating AI Policy Into System Behavior

Most organizations have an AI governance policy. A smaller number have one that connects to anything running in production.

The gap between policy and production is where most AI governance failures originate. A policy that sits in a document does not change system behavior. A risk appetite statement that has never been translated into a rule, a threshold, or a contract is not operational governance. It is aspiration.

The governance operating model is the structure that closes that gap.

What a governance operating model is

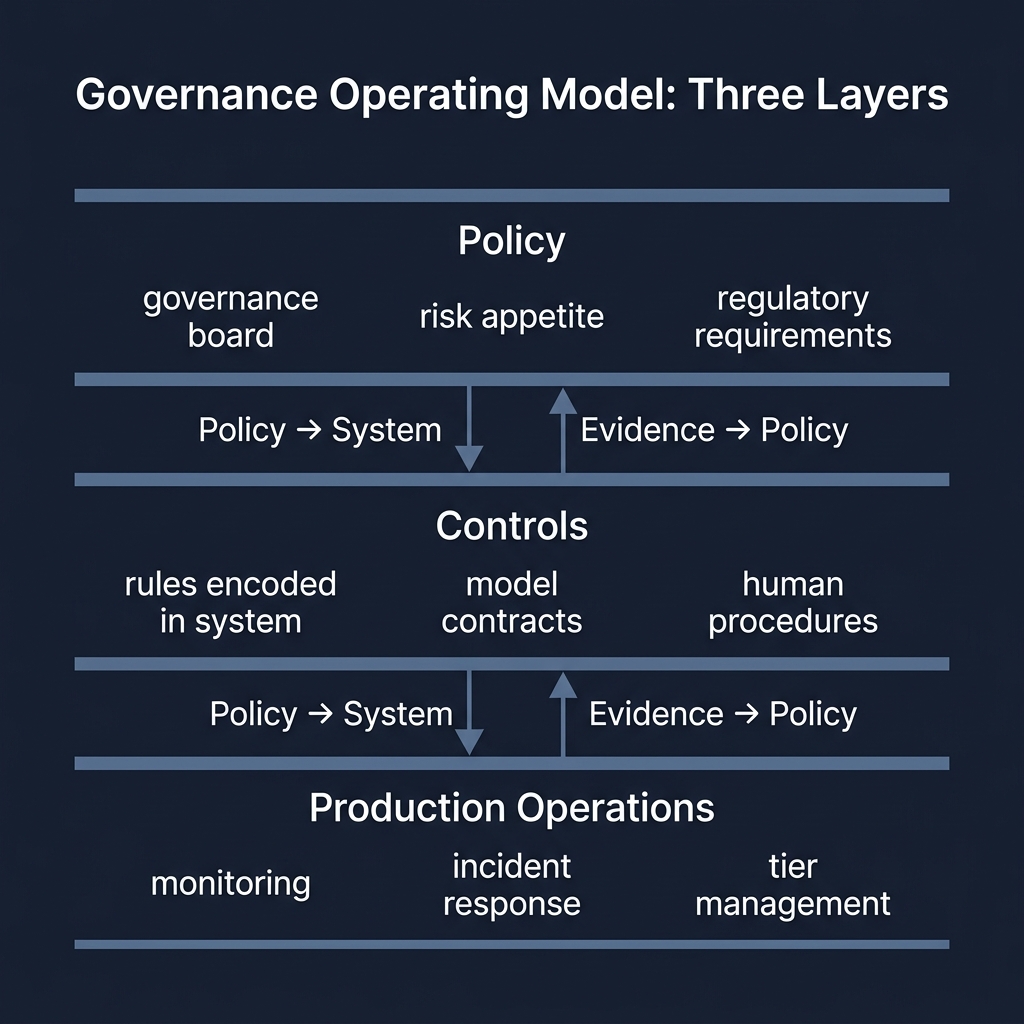

An AI governance operating model is the operational structure that translates governance policy into measurable system controls — and routes production evidence back to inform policy decisions.

It has three layers.

The first is policy: risk appetite, regulatory requirements, ethical boundaries, and board-level governance principles. This layer defines what is required and what is prohibited.

The second is controls: the rules encoded in the system, the contracts assigned to each model, and the procedures that govern human decisions. This layer implements the policy as operational behavior.

The third is production operations: monitoring, incident response, tier management, and audit functions. This layer generates the evidence that tells the organization whether the controls are working.

The model works only if all three layers are connected and information flows in both directions: policy shapes controls, and production evidence shapes policy.

Why most operating models fail

The most common failure is not missing policy. It is missing translation.

A policy says: "Models must not discriminate on protected characteristics." That is a principle. For it to be operational, someone must translate it into a specific rule — a list of prohibited inputs, a fairness metric with a defined threshold, a human review procedure for borderline cases, and a monitoring process that catches violations before they appear in an audit.

If that translation step is missing, the policy exists and the violation exists simultaneously.
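As a rough illustration of what that translation might look like in code, the sketch below encodes the non-discrimination principle as a prohibited-input list, a fairness metric with a threshold, and a borderline band that routes to human review. Every name and number here is an assumption made for illustration (`PROHIBITED_INPUTS`, `FairnessControl`, the demographic parity gap as the chosen metric, the 0.10 limit); a real control would take these from the organization's own policy.

```python
from dataclasses import dataclass

# Hypothetical list of prohibited model inputs; the real list comes from policy.
PROHIBITED_INPUTS = {"race", "religion", "gender"}


@dataclass(frozen=True)
class FairnessControl:
    max_parity_gap: float  # a gap above this limit is a violation
    review_margin: float   # a gap within this margin below the limit goes to human review


def check_inputs(feature_names):
    """Return the prohibited features present in a model's input list."""
    return sorted(PROHIBITED_INPUTS.intersection(feature_names))


def parity_gap(approval_rates):
    """Demographic parity gap: highest group approval rate minus the lowest."""
    rates = list(approval_rates.values())
    return max(rates) - min(rates)


def evaluate(control, approval_rates):
    """Classify the observed gap as pass, human_review, or violation."""
    gap = parity_gap(approval_rates)
    if gap > control.max_parity_gap:
        return "violation"
    if gap > control.max_parity_gap - control.review_margin:
        return "human_review"
    return "pass"


# Illustrative values only.
control = FairnessControl(max_parity_gap=0.10, review_margin=0.03)
print(check_inputs(["income", "race", "tenure"]))
print(evaluate(control, {"group_a": 0.50, "group_b": 0.42}))
```

The point of the sketch is that each element of the principle becomes something a system can evaluate and a monitor can alert on, rather than a sentence in a document.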

The second common failure is unidirectional flow. Policy is written and pushed into the system. Production evidence is collected but never reviewed by the people who write policy. This creates governance theater: documents that are updated annually, systems that have drifted from them, and audits that discover the gap.

A functioning operating model routes evidence back. Production anomalies update thresholds. Incident postmortems change rules. Model review findings revise contracts. The loop closes.
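One way to make the return path concrete is to require that every change to a control records the evidence that motivated it and the person who approved it. The sketch below is a minimal version of that idea; the `Control` class, the `apply_finding` function, and the example control name and values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    name: str
    threshold: float
    history: list = field(default_factory=list)  # audit trail of changes


def apply_finding(control, new_threshold, source, approved_by):
    """Change a threshold and record which evidence and approver drove the change."""
    if not approved_by:
        raise ValueError("threshold changes need a named approver")
    control.history.append(
        {
            "from": control.threshold,
            "to": new_threshold,
            "source": source,
            "approved_by": approved_by,
        }
    )
    control.threshold = new_threshold
    return control


# Illustrative values only: a postmortem finding tightens a drift threshold.
drift = Control(name="feature_drift_limit", threshold=0.25)
apply_finding(drift, 0.20, source="incident postmortem", approved_by="policy owner")
print(drift.threshold, len(drift.history))
```

A history like this gives policy owners a direct view of which production findings have changed the controls, and which have not.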

| Activity | Typical candidates |
|---|---|
| Model performance monitoring | ML Engineering, Model Risk, Operations |
| Rule updates | Compliance, Engineering, Product |
| Tier change decisions | Risk, Engineering, Leadership |
| Incident response | Engineering, Operations, Compliance |
| Audit preparation | Compliance, Model Risk, Engineering |

The RACI does not need to be elaborate. It needs to answer one question for each activity: who is responsible for ensuring this happens?
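A simple automated check can confirm that the question has an answer for every activity. The sketch below assumes the RACI is stored as a mapping from activity to team roles, with "R" marking the responsible team; the `missing_responsible` function and the sample assignments are hypothetical, and the activity names are the ones from the table above.

```python
ACTIVITIES = [
    "Model performance monitoring",
    "Rule updates",
    "Tier change decisions",
    "Incident response",
    "Audit preparation",
]


def missing_responsible(raci):
    """Return the activities that have no team marked 'R' (responsible)."""
    gaps = []
    for activity in ACTIVITIES:
        roles = raci.get(activity, {})
        if "R" not in roles.values():
            gaps.append(activity)
    return gaps


# Hypothetical assignments; a real RACI reflects the organization's own structure.
raci = {
    "Model performance monitoring": {"ML Engineering": "R", "Model Risk": "C"},
    "Rule updates": {"Compliance": "R", "Engineering": "C"},
    "Tier change decisions": {"Risk": "A", "Engineering": "C"},
    "Incident response": {"Engineering": "R", "Compliance": "I"},
}
print(missing_responsible(raci))
```

In this sample, "Tier change decisions" has participants but no responsible team, and "Audit preparation" has no entry at all, so both are reported.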

3. Evidence routing

Production evidence must reach the people who can act on it.

Model risk reviewers need access to validation reports and drift metrics — not just model performance dashboards.

Policy owners need access to incident postmortems and violation logs — not just aggregate outcome metrics.

Governance boards need periodic evidence that controls are functioning — not just assurance that processes exist.

Without structured evidence routing, governance becomes a documentation exercise rather than an operational function.
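Structured routing can start as an explicit table from evidence type to audience, with a check for evidence that nobody receives. The sketch below follows the three audiences described above; the evidence type identifiers, role names, and function names are assumptions for illustration.

```python
# Hypothetical routing table: evidence type -> roles that must receive it.
ROUTES = {
    "validation_report": ["model_risk_reviewers"],
    "drift_metrics": ["model_risk_reviewers"],
    "incident_postmortem": ["policy_owners"],
    "violation_log": ["policy_owners"],
    "control_effectiveness_summary": ["governance_board"],
}


def route(evidence_type):
    """Return the recipients for an evidence type, failing loudly if none is defined."""
    if evidence_type not in ROUTES:
        raise ValueError(f"no route defined for evidence type {evidence_type!r}")
    return ROUTES[evidence_type]


def unrouted(evidence_types):
    """Return the evidence types being produced that have no defined recipient."""
    return sorted(set(evidence_types) - set(ROUTES))


produced = ["drift_metrics", "violation_log", "customer_complaints"]
print(route("incident_postmortem"))
print(unrouted(produced))
```

Failing loudly on an undefined route is a deliberate choice in this sketch: evidence that is collected but reaches nobody is the pattern the operating model is meant to prevent.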

Common failure modes

Governance by compliance. The operating model exists to satisfy auditors, not to improve system behavior. Evidence is collected for reports, not for decisions. Postmortems produce documentation rather than system changes.

Missing translation layer. Policy and controls exist in parallel but are not connected. The policy says one thing; the system does another; nobody is responsible for closing the gap.

Single point of governance ownership. A single compliance or risk function owns all governance activities. Engineering teams treat governance as someone else's problem. The result is that governance activities happen in documents and monitoring dashboards — not in system design.

Stale RACI. The ownership model was defined at deployment and has not been updated as the system has changed, new models have been added, and team structures have shifted.

Related posts in this pillar

- Governance Operating Model: From Policy to Execution: How to turn abstract governance principles into operational system behavior.

- The AI Incident Golden Hour: How governance operating models are tested under pressure — the mechanics of rapid incident diagnosis and containment.

- The AI Governance Playbook: The implementation guide for moving from a governance policy document to a functioning operational structure.

- The AI Maturity Model: How to assess whether your current governance operating model is running at a statistical pilot level or a causal traceability level.
