Autonomy and Escalation: Designing AI Systems That Know When to Stop | AI Governance

"Keep a human in the loop" is not a design specification. It is a sentiment.

It does not tell you which decisions require human review. It does not say what threshold triggers escalation. It does not specify what happens when the human queue overflows, or when the system is performing well and human review is creating more errors than it prevents.

Autonomy and escalation design replaces that sentiment with structure: explicit tiers, explicit preconditions, and explicit pathways for moving between them — up and down.

The core problem with undesigned autonomy

AI systems do not arrive with a fixed level of autonomy. They are deployed at one level, and then their autonomy creeps — through shortcuts, through informal approvals, through "we'll add the review step back later" that never happens.

The result is a system operating at a tier of autonomy that was never formally approved, with circuit breakers that were never designed, and escalation paths that were never tested.

This is not a hypothetical. It is the most common governance failure mode in production AI.

The Architecture of Proof approach is to make autonomy a first-class design decision — assigned deliberately, changed only with evidence, and bounded by automatic downgrade paths.

The four tiers of autonomy

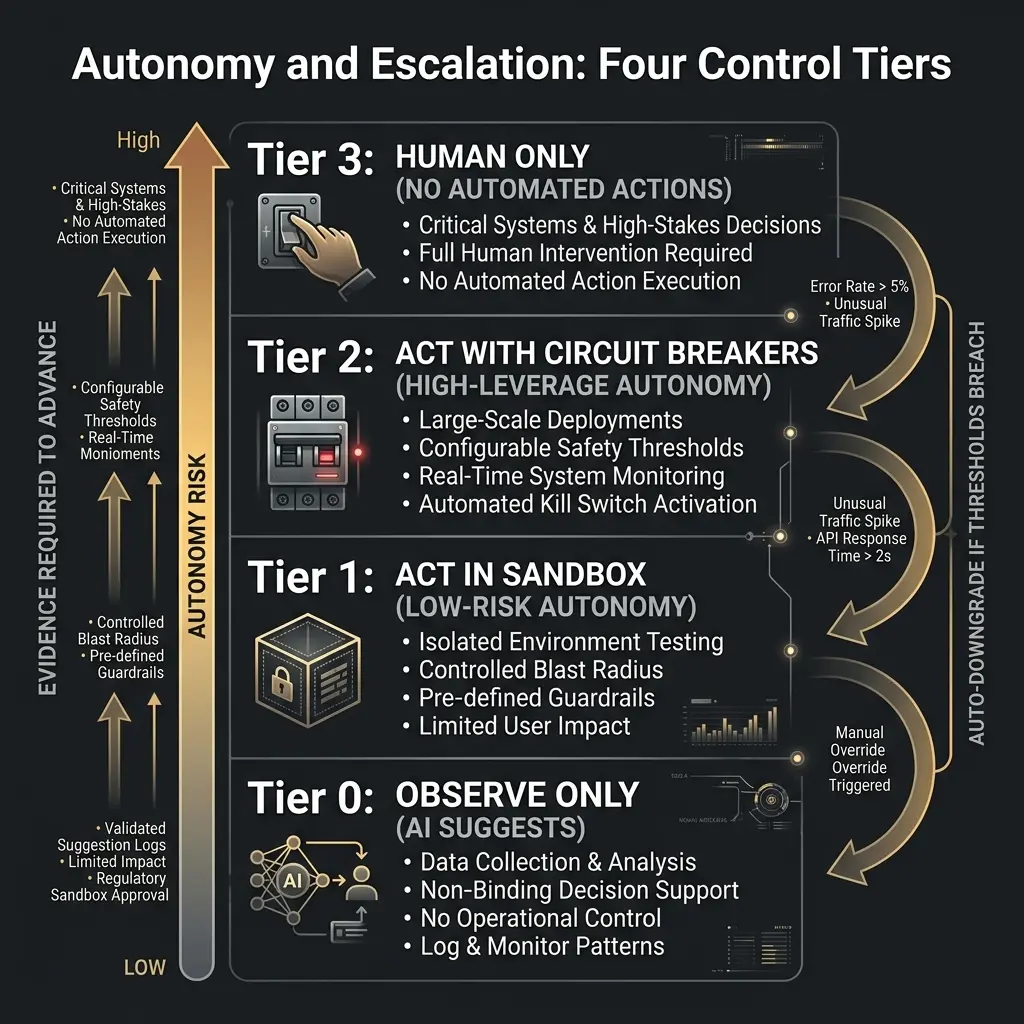

The Architecture of Proof uses four control tiers. Every AI-influenced workflow should be assigned to one of them.

Tier 0 — Observe Only

The system analyzes, scores, and suggests. Humans make every consequential decision.

This tier is appropriate for: new deployments before sufficient production data exists; domains where regulatory requirements mandate human approval; situations where human judgment genuinely cannot be replicated by the system.

Tier 1 — Act in the Sandbox

The system takes low-risk, reversible actions autonomously. Humans review aggregate outcomes, not individual decisions.

This tier requires: demonstrated stable performance in a pilot; fully reversible actions within a defined scope; automatic revert conditions that the system enforces itself.

Tier 2 — Act with Circuit Breakers

The system takes high-leverage, bounded actions autonomously. Humans handle escalations and own incident response.

This tier requires: strong calibration and low error rates demonstrated in production; tested circuit breakers with documented auto-downgrade behavior; signed-off risk appetite and incident playbooks.

Tier 3 — Human Only

The system provides analysis and recommendations. All consequential decisions require human sign-off.

This tier applies to: clinical decisions with direct patient impact; large financial transactions above defined thresholds; any domain where the regulatory framework prohibits automated decisions.
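To make the tier assignment concrete, here is a minimal sketch of how the four tiers might be encoded so that every workflow carries an explicit tier. The names `ControlTier`, `Workflow`, and `may_act_autonomously` are hypothetical and not part of any published Architecture of Proof code. The reversibility rule for Tier 1 is taken from the preconditions listed above.

```python
from dataclasses import dataclass
from enum import Enum


class ControlTier(Enum):
    """The four control tiers described above (illustrative names)."""
    OBSERVE_ONLY = 0
    ACT_IN_SANDBOX = 1
    ACT_WITH_CIRCUIT_BREAKERS = 2
    HUMAN_ONLY = 3


# Tiers in which the system may act without per-decision human sign-off.
AUTONOMOUS_TIERS = {
    ControlTier.ACT_IN_SANDBOX,
    ControlTier.ACT_WITH_CIRCUIT_BREAKERS,
}


@dataclass(frozen=True)
class Workflow:
    name: str
    tier: ControlTier
    reversible: bool  # whether the workflow's actions can be undone


def may_act_autonomously(workflow: Workflow) -> bool:
    """Return True only if the assigned tier permits autonomous action."""
    if workflow.tier not in AUTONOMOUS_TIERS:
        return False
    # Tier 1 is limited to fully reversible actions.
    if workflow.tier is ControlTier.ACT_IN_SANDBOX and not workflow.reversible:
        return False
    return True
```

Keeping the tier as a required field means a workflow cannot exist without one, so a missing assignment shows up as a construction error instead of an unexamined default.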

Advancing tiers requires evidence, not confidence

Tier upgrades are governance events — not configuration changes.

The principle is simple: no evidence, no tier upgrade. Moving from Tier 0 to Tier 1 requires evidence that the system performs as designed on a held-out data set, that the pilot produced no unexpected behavior, and that the revert conditions have been tested. Moving from Tier 1 to Tier 2 requires more: demonstrated production calibration, tested circuit breakers, and formal risk sign-off.

Evidence requirements should be written down in advance, agreed across stakeholders, and evaluated at scheduled intervals — not assessed informally by the team that built the system.

| Transition | Evidence Required |
|---|---|
| Tier 0 → Tier 1 | Stable pilot performance; reversibility tested; no policy violations |
| Tier 1 → Tier 2 | Production calibration; circuit breakers tested; risk sign-off |
| Tier 2 → Tier 3 | (Scale back) regulatory mandate, incident, or loss of confidence |
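The "no evidence, no tier upgrade" rule can be sketched as a simple gate. This is an illustrative example only: the evidence keys mirror the two upgrade rows of the table, while the identifiers (`REQUIRED_EVIDENCE`, `missing_evidence`, `approve_upgrade`) are assumptions made for this sketch. In practice each evidence item would be backed by a signed-off artifact, not a string.

```python
from typing import Set

# Evidence required per upgrade path, taken from the transition table.
REQUIRED_EVIDENCE = {
    (0, 1): {"stable_pilot_performance", "reversibility_tested", "no_policy_violations"},
    (1, 2): {"production_calibration", "circuit_breakers_tested", "risk_sign_off"},
}


def missing_evidence(current_tier: int, target_tier: int, evidence: Set[str]) -> Set[str]:
    """Return the evidence items still missing for the requested upgrade."""
    key = (current_tier, target_tier)
    if key not in REQUIRED_EVIDENCE:
        # Skipping a tier, or any path not written down in advance, is rejected.
        raise ValueError(f"No upgrade path defined from tier {current_tier} to tier {target_tier}")
    return REQUIRED_EVIDENCE[key] - evidence


def approve_upgrade(current_tier: int, target_tier: int, evidence: Set[str]) -> bool:
    """Approve only when every required evidence item is present."""
    return not missing_evidence(current_tier, target_tier, evidence)
```

For example, `missing_evidence(1, 2, {"production_calibration"})` reports that the circuit breaker test and the risk sign-off are still outstanding, which gives reviewers a concrete list instead of a yes or no.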

Escalation thresholds: the three types

Escalation is triggered by one of three signal types.

Performance thresholds. When a model's error rate, calibration error, or drift exceeds a defined limit, the system escalates automatically. The threshold is set in advance, not in response to an incident.

Confidence signals. When the model's confidence score falls below a defined level, which often indicates that the case is ambiguous or outside the distribution the model was trained on, the system routes to human review rather than producing a low-confidence automated output.

Policy conditions. When a rule fires that defines a mandatory human touchpoint — for example, any transaction above a certain value, or any case in a specific regulatory category — the escalation is deterministic, not probabilistic.

All three types should be explicitly designed, not discovered in production.
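The three signal types can be combined into a single routing check, sketched below. All limit values are placeholders chosen for illustration, and the names `EscalationLimits` and `escalation_reason` are hypothetical. The policy rule is evaluated first because it is deterministic and should fire regardless of how well the model is performing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EscalationLimits:
    # Placeholder values; real limits should be agreed in advance.
    max_error_rate: float = 0.05
    min_confidence: float = 0.80
    max_transaction_value: float = 10_000.0


def escalation_reason(
    *,
    rolling_error_rate: float,
    confidence: float,
    transaction_value: float,
    limits: EscalationLimits = EscalationLimits(),
) -> Optional[str]:
    """Return which signal type triggers escalation, or None to proceed."""
    # Policy condition: a mandatory human touchpoint, independent of the model.
    if transaction_value > limits.max_transaction_value:
        return "policy"
    # Performance threshold: the system as a whole is outside its agreed limit.
    if rolling_error_rate > limits.max_error_rate:
        return "performance"
    # Confidence signal: this particular case is one the model is unsure about.
    if confidence < limits.min_confidence:
        return "confidence"
    return None
```

Returning the reason, not just a boolean, lets the escalation log record why a case reached a human, which is useful when tuning thresholds later.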

Downgrade paths: the other direction

Tier advancement is only half the design. The other half is the pathway back down.

A circuit breaker is an automatic downgrade trigger: a hard-coded condition that moves the system to a lower tier when a metric breaches a threshold. Circuit breakers are what separate a system that degrades gracefully from one that fails silently.

Every circuit breaker should be:

- Defined in advance with explicit thresholds
- Tested before going live
- Logged when triggered
- Reviewed after each trigger to decide whether the downgrade should become permanent

A system that has never triggered a circuit breaker is either operating flawlessly or has circuit breakers that are set wrong.
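A circuit breaker of this kind can be sketched in a few lines. The example below is a simplified illustration under stated assumptions: it covers only Tiers 0 through 2 (where a lower number means less autonomy), it watches a single metric where higher is worse, and the class name `CircuitBreaker` and its fields are hypothetical.

```python
import logging
from dataclasses import dataclass, field
from typing import List, Tuple

logger = logging.getLogger("circuit_breaker")


@dataclass
class CircuitBreaker:
    metric_name: str
    threshold: float   # defined in advance, not after an incident
    downgrade_to: int  # tier the system falls back to when tripped
    trip_log: List[Tuple[str, float, int, int]] = field(default_factory=list)

    def check(self, current_tier: int, metric_value: float) -> int:
        """Return the tier the system should operate at after this reading."""
        breached = metric_value > self.threshold
        if breached and current_tier > self.downgrade_to:
            # Record every trip so it can be reviewed afterwards.
            self.trip_log.append(
                (self.metric_name, metric_value, current_tier, self.downgrade_to)
            )
            logger.warning(
                "%s=%.4f breached %.4f: tier %d -> %d",
                self.metric_name, metric_value, self.threshold,
                current_tier, self.downgrade_to,
            )
            return self.downgrade_to
        return current_tier
```

Because `check` is a pure function of the current tier and the metric reading, it can be exercised in a test suite with synthetic readings long before a real incident occurs.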

Common failure modes

Tier drift. Autonomy increases informally — through "temporary" workarounds that become permanent — without the evidence requirements being met.

Escalation queue overflow. The system escalates more volume than humans can review, creating a backlog that effectively bypasses the escalation path.
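One way to guard against overflow is to give the review queue a hard capacity and decide in advance what happens when it is full. The sketch below holds the case instead of letting it proceed automatically; that fail-closed choice, the class name `ReviewQueue`, and the return values are all assumptions made for illustration, and other policies (such as downgrading the tier) may fit better in a given domain.

```python
from collections import deque
from typing import Deque, Optional


class ReviewQueue:
    """A bounded human-review queue that never silently drops escalations."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self._pending: Deque[str] = deque()
        self.overflow_count = 0  # how often the queue was full on submit

    def submit(self, case_id: str) -> str:
        """Queue a case for review, or hold it if reviewers are saturated."""
        if len(self._pending) >= self.capacity:
            # Fail closed: the action is held, not auto-approved.
            self.overflow_count += 1
            return "held"
        self._pending.append(case_id)
        return "queued"

    def next_case(self) -> Optional[str]:
        """Hand the oldest pending case to a reviewer, if any."""
        return self._pending.popleft() if self._pending else None
```

Tracking `overflow_count` turns queue saturation into a measurable signal, which could itself feed a performance threshold.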

Untested circuit breakers. Downgrade conditions exist on paper but have never been tested in a realistic scenario. The first real test is the first real incident.

Tier assignment by confidence, not evidence. Teams move to higher tiers because they feel good about model performance, not because they have measured it against a defined bar.

Related posts in this pillar

- Control Tiers for AI-enabled Processes: The detailed guide to designing each tier — what permissions it grants, what preconditions it requires, and how to implement automatic downgrade logic.

- AI Escalation Protocols: How systems ask for help cleanly — the mechanics of escalation at runtime, including handoff design and queue management.

- Stage 4 Maturity: Causal Traces and the 4-Minute RCA: How mature systems diagnose escalation failures in under five minutes using causal trace logs.

Downloadable resource

The Architecture of Proof Readiness Checklist — Includes the autonomy tier assignment criteria and circuit breaker design sections.
