Decision Traceability: Building the Evidence Chain for AI Systems

Most AI systems are good at making decisions. Very few are good at proving them.

The difference matters the moment something goes wrong: a customer files a complaint, a regulator asks for an explanation, an internal audit begins, or a model behavior shifts in production without a visible cause. At that point, a system that can reconstruct what happened is worth more than one that was simply accurate.

Decision traceability is the design discipline for building the first kind of system.

What decision traceability means

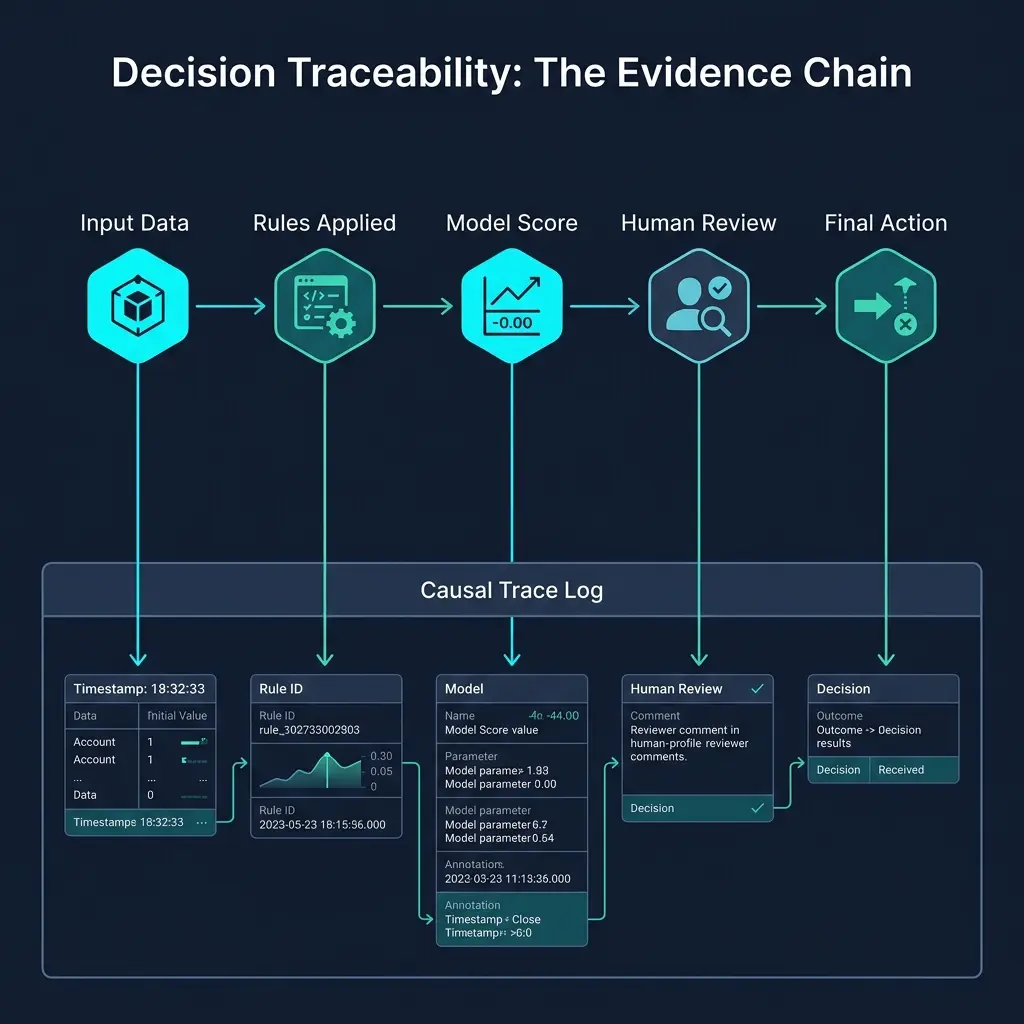

Decision traceability is the ability to reconstruct a specific AI decision after the fact — including which inputs were used, which rules fired, which model produced which output, and whether any human intervened.

It is not the same as logging. A log records that something happened. A trace records what the system knew, what it did, and why — in enough detail that an engineer, auditor, or model risk reviewer can replay the decision independently, without access to the original runtime state.

Traceability can be the difference between an incident that takes four minutes to diagnose and one that takes four weeks.

What counts as evidence

Not every log entry constitutes evidence. Evidence, in the governance sense, must be:

Complete. The record must capture every component that contributed to the decision — not just the model output, but the inputs, the rules that ran, and the human actions that followed.

Immutable. The record must not be alterable after the fact. If a trace can be modified, it is not evidence.

Linked. The trace must connect the decision to its eventual outcome. A decision record with no outcome linkage cannot answer the question that matters most: was this decision defensible given what happened next?

Structured. Unstructured logs can be searched, but they cannot be analyzed at scale or readily relied on as evidence in a regulatory context. A structured trace schema, defined in advance, is what separates a log from an audit trail.
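The four properties above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class names `DecisionTrace` and `OutcomeLink`, every field name, and the `"GENESIS"` starting value are assumptions made for the example. The hash chain makes tampering detectable rather than impossible, so a real system would still need write-once storage underneath it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class DecisionTrace:
    """Structured record of one decision. Field names are illustrative."""
    decision_id: str
    inputs: dict                 # what the system knew
    rules_fired: tuple           # which rules triggered
    model_version: str           # which model produced the output
    model_output: str
    human_action: Optional[str]  # override or approval, if any
    prev_hash: str               # digest of the previous record in the chain


@dataclass(frozen=True)
class OutcomeLink:
    """Appended later: ties a decision to what eventually happened."""
    decision_id: str
    outcome: str
    prev_hash: str


def digest(record) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes the same.
    body = {"type": type(record).__name__, **asdict(record)}
    payload = json.dumps(body, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify_chain(records, genesis="GENESIS") -> bool:
    """True if every record points at the digest of its predecessor."""
    prev = genesis
    for record in records:
        if record.prev_hash != prev:
            return False
        prev = digest(record)
    return True
```

Because the outcome is appended as its own record instead of being written back into the decision, the original trace never has to change. Note that the chain only protects records that have a successor; detecting a change to the newest record requires storing its digest somewhere outside the chain.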

Where decision traces should live

Decision traces should be treated as a separate, first-class data artifact — not derived from operational logs, not bundled into model telemetry, and not stored in the same system that serves the model.

Three principles govern storage:

Separation. The causal trace (the reasoning path) should be stored separately from the raw data that fed the decision. This allows the reasoning to be reviewed without re-running the model.

Retention alignment. The retention period for decision traces should match the regulatory and contractual requirements of the domain — typically 5–7 years for lending decisions, longer for clinical applications.

Access control. Not everyone who can query production data should be able to query decision traces. Access should be gated to incident review, audit, and model risk functions — not open to the full engineering organization.
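The retention and access principles can be expressed as small, testable policy functions. The sketch below is illustrative only: the role names, the `RETENTION_YEARS` table, and in particular the year counts in it are placeholder assumptions, not regulatory guidance. Real values have to come from the requirements of the domain.

```python
from datetime import date

# Placeholder retention periods in years (assumed for illustration only).
RETENTION_YEARS = {"lending": 7, "clinical": 10}

# Hypothetical roles permitted to query decision traces.
TRACE_READER_ROLES = frozenset({"incident_review", "audit", "model_risk"})


def can_read_traces(role: str) -> bool:
    """Access control: only review, audit, and model risk roles may read."""
    return role in TRACE_READER_ROLES


def retention_expiry(decided_on: date, domain: str) -> date:
    """Earliest date on which a trace may be purged."""
    target_year = decided_on.year + RETENTION_YEARS[domain]
    try:
        return decided_on.replace(year=target_year)
    except ValueError:
        # Feb 29 has no counterpart in a non-leap target year.
        return decided_on.replace(year=target_year, day=28)


def may_purge(decided_on: date, domain: str, today: date) -> bool:
    """Retention alignment: never purge before the period has elapsed."""
    return today >= retention_expiry(decided_on, domain)
```

Keeping these checks in code rather than in a wiki page means the policy itself can be versioned and reviewed alongside the trace schema.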

Which decisions need traceability

Not every automated action requires a full decision trace. A routing decision for a low-value, reversible action carries different requirements than a credit approval or a medical recommendation.

A practical framework for prioritization:

| Decision Type | Traceability Requirement |
|---|---|
| High-stakes, irreversible (credit, clinical, fraud block) | Full trace: inputs, rules, model, human, outcome |
| Medium-stakes, reviewable (routing, escalation, pricing) | Partial trace: decision path + outcome linkage |
| Low-stakes, reversible (UI personalization, suggestions) | Minimal trace: decision + outcome signal |
| Human-only decisions | Override record + reason code |

When in doubt, trace more than you think you need. Regulatory expectations tend to expand, and retroactive trace reconstruction is expensive or impossible.
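One way to make the framework enforceable is to encode each tier's required fields and check every trace against them at write time. The sketch below assumes a trace is a plain dictionary; the `Tier` enum and the field names are hypothetical, chosen to mirror the table above.

```python
from enum import Enum


class Tier(Enum):
    HIGH = "high-stakes, irreversible"
    MEDIUM = "medium-stakes, reviewable"
    LOW = "low-stakes, reversible"
    HUMAN_ONLY = "human-only"


# Required trace fields per tier (names are illustrative).
REQUIRED_FIELDS = {
    Tier.HIGH: {"inputs", "rules", "model", "human", "outcome"},
    Tier.MEDIUM: {"decision_path", "outcome"},
    Tier.LOW: {"decision", "outcome"},
    Tier.HUMAN_ONLY: {"override", "reason_code"},
}


def missing_fields(tier: Tier, trace: dict) -> set:
    """Return the fields the tier requires that are absent or empty.

    An empty value counts as missing, so "no human intervened" should be
    recorded explicitly (for example "none") rather than left blank.
    """
    empty = (None, "", [], {})
    return {f for f in REQUIRED_FIELDS[tier] if trace.get(f) in empty}
```

A writer that rejects any trace where `missing_fields` is non-empty turns the table from a guideline into a gate.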

How teams prove a decision was defensible

A defensible decision is not one that produced a good outcome. It is one that was made correctly, given what the system knew at the time and the rules it was operating under.

Three questions frame defensibility:

Was the input valid? The decision trace must show that inputs passed the validation and cleansing rules before the model was called. If the model produced an incorrect output, the first question is always whether the inputs were valid.

Were the rules followed? The trace must show which rules were evaluated, which fired, and which passed without firing. A rule that should have blocked a decision but did not is a different failure mode from a model that scored incorrectly.

Was the human process followed? Where humans were involved, the trace must show what they saw, what they chose, and what reason code or justification was recorded. An override without documentation is not defensible.

If all three are satisfied, the decision was defensible — regardless of outcome.
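The three questions translate directly into a check that can run over any stored trace. This is a sketch under stated assumptions: `TraceView` and its fields are hypothetical, and the rule check here only detects rules that were expected but never evaluated, which is one of several ways a rule can fail.

```python
from dataclasses import dataclass


@dataclass
class TraceView:
    """The parts of a trace needed to assess defensibility (illustrative)."""
    inputs_validated: bool
    rules_expected: set       # rules that should have been evaluated
    rules_evaluated: set      # rules the trace shows were evaluated
    human_involved: bool
    human_reason_code: str = ""


def defensibility_gaps(t: TraceView) -> list:
    """Return one message per unanswered question; empty means defensible."""
    gaps = []
    # 1. Was the input valid?
    if not t.inputs_validated:
        gaps.append("inputs not validated")
    # 2. Were the rules followed?
    skipped = t.rules_expected - t.rules_evaluated
    if skipped:
        gaps.append("rules not evaluated: " + ", ".join(sorted(skipped)))
    # 3. Was the human process followed?
    if t.human_involved and not t.human_reason_code:
        gaps.append("human action without reason code")
    return gaps
```

Notice that the outcome of the decision appears nowhere in the check, which matches the definition above: defensibility is about the process, not the result.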

Common failure modes

Logging only the output. Many systems log the final decision but not the path that led to it. This makes postmortems impossible and audits painful.

Tracing the model but not the rules. Rule behavior is often assumed to be correct and left unlogged. In practice, missing or misconfigured rules are one of the most common sources of incorrect decisions.

Recording the decision but not linking it to its outcome. A decision marked "approved" with no link to what happened after approval cannot answer the question that matters most: was the decision defensible given what happened next?

Using unstructured logs as a substitute for traces. Log search is not evidence reconstruction. Structured traces are.

Related posts in this pillar

- AI Audit Trails: The detailed guide to building replayable audit logs for high-stakes AI systems.

- The Cost of Proof: Why moving to defensible ROI requires traceability as a design requirement, not an afterthought.

- Benford's Law as a Security Perimeter: How statistical validation at the input layer protects the evidence chain from garbage-in failures.

Downloadable resource

The Architecture of Proof Readiness Checklist — Includes a section on decision traceability requirements and the four questions your system must be able to answer.
