Harvey Teardown: The Case for Verifiable Judgment

What Harvey seems to be betting on

Most AI companies are trying to automate work. Harvey seems to be making a different bet: legal work becomes more valuable when the reasoning behind it is easier to verify, scale, and defend.

The distinction matters. Harvey is not trying to replace lawyers with a fully autonomous AI attorney. It is trying to reduce the manual reasoning work lawyers must do before they can exercise professional judgment. The product is less an “AI lawyer” than a judgment-support system for legal workflows.

That is a stronger position in a profession where the answer only matters if it can be defended later.

What Harvey is actually selling

At first glance, Harvey looks like a legal AI assistant. But the deeper product is not generic intelligence. It is workflow compression for high-volume legal reasoning.

Legal work is full of expensive cognitive overhead: reviewing documents, extracting clauses, comparing terms, identifying inconsistencies, surfacing precedent, and organizing evidence before a lawyer can make a decision. Harvey reduces the cost of that reasoning layer.

The value is not just faster answers. It is turning fragmented legal work into a structured, citation-backed workflow where lawyers spend less time gathering information and more time applying professional judgment.

This matters because legal services are not purely informational. They are accountability systems. A lawyer is expected not just to produce an answer but to explain how that answer was reached.

Why “verifiable judgment” is the right frame

The phrase works because it captures the difference between output and trust.

In most consumer AI products, usefulness is enough. If the answer is directionally correct, the user moves on. Legal workflows operate differently. A useful answer without traceability can still be unusable.

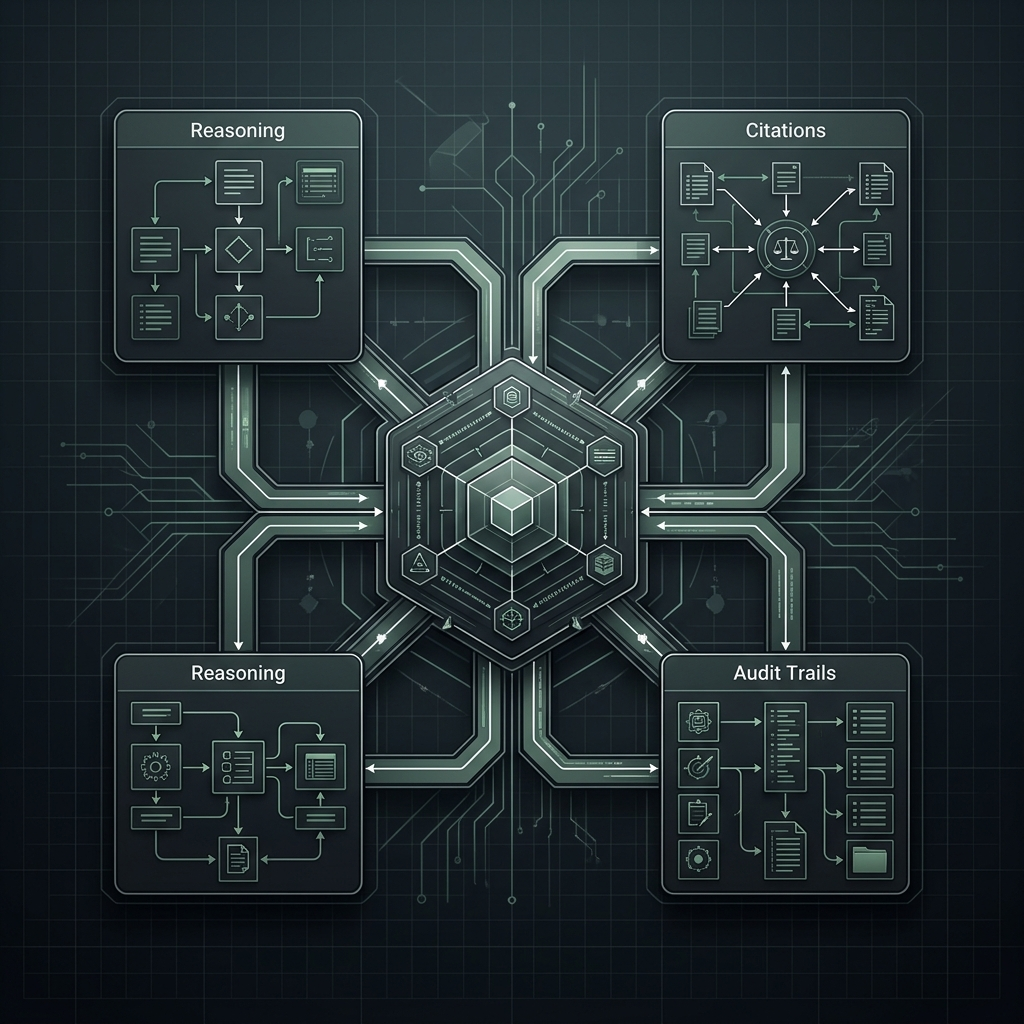

That is why Harvey’s emphasis on citations, source grounding, isolated customer data, review workflows, and auditability matters so much. These are not just UX features. They are trust infrastructure.

The product is built around a simple idea: AI becomes valuable in legal work when it can show its work.

That changes the role of the model. The system is not asking users to trust raw output blindly. It is trying to make reasoning inspectable enough that professionals can validate, refine, and defend the result themselves.
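To make "show its work" concrete, here is a minimal sketch of a citation grounding check. It is not Harvey's implementation. The `Citation` type, the `ungrounded` function, and the sample documents are all hypothetical, and the check assumes the simplest possible rule: a quoted passage must appear verbatim (ignoring case and whitespace) in the source it cites.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    source_id: str  # hypothetical document identifier
    quote: str      # text the answer claims appears in that document


def normalize(text: str) -> str:
    """Collapse whitespace and case so trivial formatting differences do not matter."""
    return " ".join(text.lower().split())


def ungrounded(citations: list[Citation], sources: dict[str, str]) -> list[Citation]:
    """Return the citations whose quote cannot be found in the cited source."""
    failures = []
    for c in citations:
        quote = normalize(c.quote)
        source_text = sources.get(c.source_id)
        # An empty quote, a missing source, or a quote absent from the source all fail.
        if not quote or source_text is None or quote not in normalize(source_text):
            failures.append(c)
    return failures


# Hypothetical sample data for illustration only.
sources = {
    "msa-2021": "Either party may terminate this Agreement\non thirty (30) days written notice."
}
citations = [
    Citation("msa-2021", "terminate this Agreement on thirty (30) days written notice"),
    Citation("msa-2021", "terminate on ten (10) days notice"),
    Citation("nda-2019", "confidential information"),
]

for c in ungrounded(citations, sources):
    print(f"needs review: {c.source_id}: {c.quote!r}")
```

A real system would likely need fuzzier matching and paragraph-level anchors, but even this strict version illustrates the point: the check flags what a professional must inspect rather than asking them to trust the output.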

The reasoning vs judgment divide

The deeper insight is that Harvey is not trying to automate legal judgment completely. It is trying to separate reasoning from judgment.

That distinction matters because the two carry very different levels of liability.

Reasoning includes extracting clauses, summarizing contracts, surfacing precedent, identifying inconsistencies, and organizing information into usable structure. These are computational tasks. They benefit from scale, speed, and pattern recognition.

Judgment is different. Judgment means deciding what matters, weighing tradeoffs, interpreting ambiguity, and ultimately accepting responsibility for the outcome. That layer carries professional accountability.

Harvey’s architecture appears designed to keep AI primarily inside the reasoning layer while preserving human ownership of judgment. Seen this way, citations, review workflows, source grounding, and auditability are more than trust features. They are liability boundaries.

The strategic bet is that AI becomes acceptable in legal workflows not when it replaces lawyers, but when it makes legal reasoning dramatically faster while keeping judgment attributable, reviewable, and defensible.

The real control point

The control point is not the model itself. It is the workflow layer that determines how outputs are grounded, how evidence is surfaced, how review happens, and where human approval enters the process.

The model proposes. The workflow governs.

That distinction is strategically important because the workflow layer determines where reasoning ends and professional judgment begins.

In practical terms, Harvey is not simply selling access to a powerful model. It is selling a system that converts probabilistic model output into something a legal professional can reasonably rely on.

That is a very different product category from generic AI chat.
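The "model proposes, workflow governs" split can be sketched as a small state machine. This is an illustration under stated assumptions, not a description of Harvey's system: the `WorkProduct` type, the `Status` values, and the `submit` and `approve` functions are hypothetical names. The point it shows is that model output cannot reach an approved state without passing a grounding check and a named human reviewer, and that every transition is logged.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    NEEDS_REVIEW = "needs_review"
    APPROVED = "approved"


@dataclass
class WorkProduct:
    text: str
    citations_grounded: bool  # assumed to come from an upstream grounding check
    status: Status = Status.DRAFT
    audit_log: list[str] = field(default_factory=list)


def submit(draft: WorkProduct) -> WorkProduct:
    """Workflow step: model output is queued for review, never finalized directly."""
    if not draft.citations_grounded:
        draft.audit_log.append("rejected: ungrounded citations")
        return draft  # status stays DRAFT
    draft.status = Status.NEEDS_REVIEW
    draft.audit_log.append("queued for review")
    return draft


def approve(draft: WorkProduct, reviewer: str) -> WorkProduct:
    """Human step: only a named reviewer can move an item to APPROVED."""
    if draft.status is not Status.NEEDS_REVIEW:
        raise ValueError("only items queued for review can be approved")
    draft.status = Status.APPROVED
    draft.audit_log.append(f"approved by {reviewer}")
    return draft


memo = submit(WorkProduct("Clause 7 permits termination on 30 days notice.", citations_grounded=True))
memo = approve(memo, reviewer="a.lawyer")
print(memo.status.name, memo.audit_log)
```

The design choice worth noticing is that the model appears nowhere in the approval path. Where reasoning ends and judgment begins is encoded in the workflow, not in the model.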

The monetization tension

The product has to be fast and powerful enough to eliminate large amounts of repetitive legal work, yet conservative enough to operate safely inside a high-liability profession.

Push too far toward speed and automation, and the product risks creating shallow confidence: outputs that appear authoritative but are difficult to verify rigorously. Push too far toward caution, and the product risks becoming an expensive workflow assistant rather than a transformative legal platform.

This balance is difficult because legal professionals are not paying only for productivity. They are paying for defensibility.

What breaks at scale

The biggest risk is not hallucination as such. It is unverifiable confidence.

Failure can appear in several ways: citations fail to ground correctly, review workflows become too weak, outputs appear persuasive but are hard to validate, workflow complexity increases cognitive burden, or the system creates more review work instead of reducing it.

That last failure mode matters a lot. If lawyers begin spending excessive time validating AI-generated reasoning, the system stops compressing legal work and starts redistributing it.
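The compress-versus-redistribute distinction comes down to simple arithmetic. The sketch below uses a hypothetical `net_minutes_saved` function, and the numbers are purely illustrative, not measurements from any product.

```python
def net_minutes_saved(manual: float, drafting: float, validation: float) -> float:
    """Minutes saved per task once validation time is counted.

    A negative result means the work was redistributed, not compressed.
    """
    return manual - (drafting + validation)


# Illustrative numbers only (hypothetical, not measured).
print(net_minutes_saved(manual=120, drafting=5, validation=30))   # 85
print(net_minutes_saved(manual=120, drafting=5, validation=130))  # -15
```

In the second case the AI draft arrives in five minutes, yet the lawyer ends up spending more total time than doing the task by hand.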

At scale, trust erosion becomes the real product risk.

The moat Harvey is building

Harvey’s moat is not just legal-domain knowledge.

It is the combination of workflow integration, source grounding, review infrastructure, professional trust, and defensible reasoning patterns.

If the platform becomes the default system lawyers use to move from document review to professional judgment, then the moat becomes embedded inside the workflow itself.

That is much harder to displace than model capability alone. Models improve quickly. Workflow trust compounds slowly.

Bottom line

Harvey’s positioning is compelling because it avoids the most dangerous framing in legal AI: replacing lawyers entirely.

Instead, it focuses on a more defensible and strategically intelligent layer: making legal reasoning faster, more scalable, and more auditable while preserving human accountability for judgment.

That distinction may ultimately define which AI products survive in high-liability industries.

Harvey is not selling automated judgment. It is selling defensible reasoning at scale.
