From Output to Proof: Managing AI-Driven Teams

The age of synthetic labor is here. AI doesn’t just accelerate work—it changes what counts as work. As machine agents handle boilerplate tasks, the familiar signals of progress—tickets closed, commits merged, velocity charts climbing—start to distort. We’re producing more, but proving less. For product managers, the real challenge has shifted: from shipping features to verifying truths.

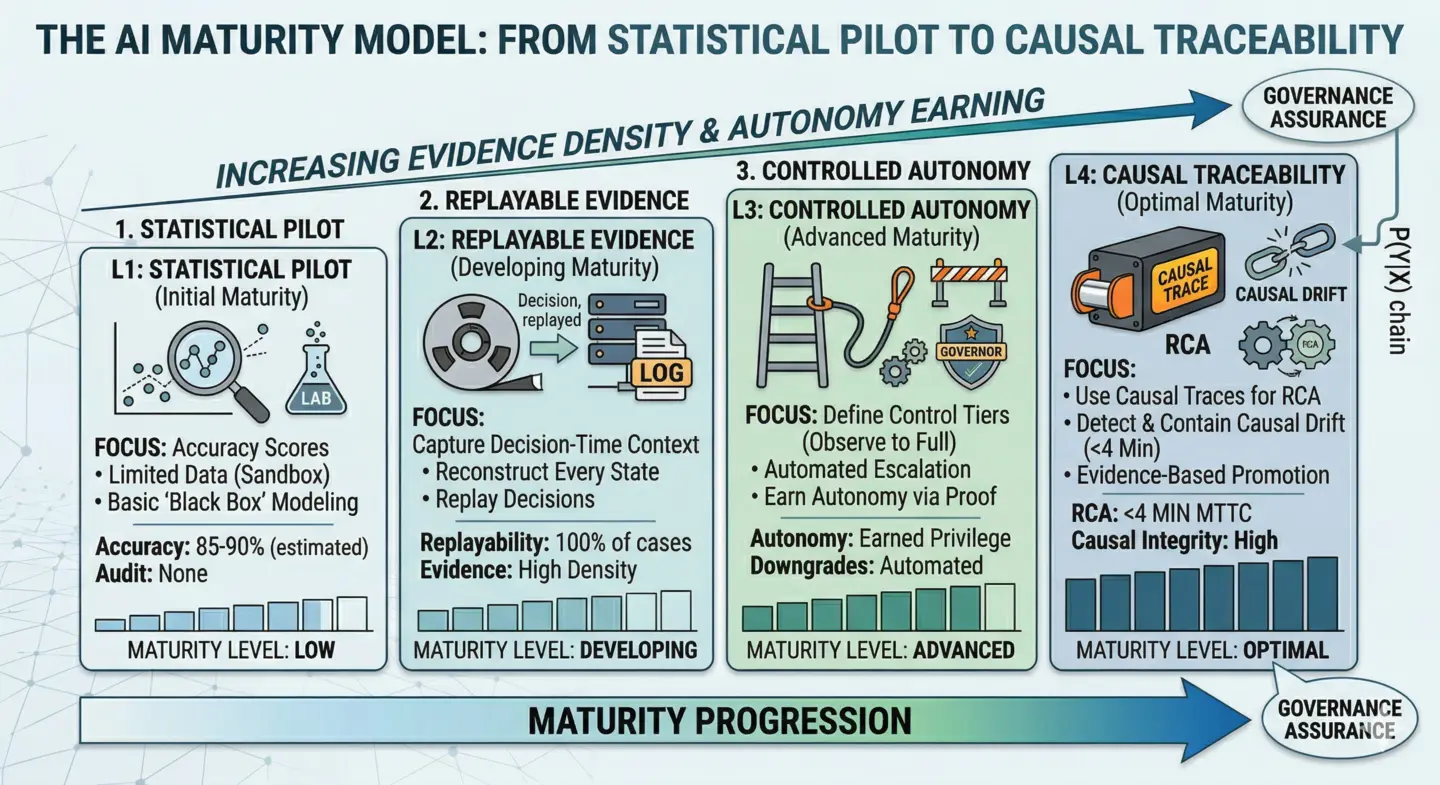

Product management used to reward momentum. A well-run sprint meant code merged. A productive team meant pipelines clear. But when AI can write a thousand lines in seconds, speed stops being the bottleneck—verification does. Teams now spend more time confirming whether the generated work aligns with policy, safety, and intent than producing the next iteration. In this world, “done” means something new: not that the code runs, but that it can be proven to be correct.

How Do We Redefine “Done”?

In the old model, deliverables were tangible—features, interfaces, release notes. In the Architecture of Proof model, deliverables include the Evidence of Correctness. A feature isn’t done until its Proof Package—the reasoning, validation results, and edge-case coverage—is verified.
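One way to picture a Proof Package is as a small record that travels with the feature and can be checked mechanically. The sketch below is illustrative only: the class name `ProofPackage`, its fields, and the `missing_evidence` and `is_done` helpers are assumptions made for this example, not part of any published Architecture of Proof specification.

```python
from dataclasses import dataclass, field


@dataclass
class ProofPackage:
    """Hypothetical evidence bundle attached to one feature."""
    feature: str
    reasoning: str = ""  # why the team believes the behavior is correct
    validation_results: dict[str, bool] = field(default_factory=dict)  # check name -> passed
    edge_cases_required: set[str] = field(default_factory=set)
    edge_cases_covered: set[str] = field(default_factory=set)

    def missing_evidence(self) -> list[str]:
        """List every gap that keeps the feature from counting as done."""
        gaps = []
        if not self.reasoning.strip():
            gaps.append("reasoning is empty")
        if not self.validation_results:
            gaps.append("no validation results recorded")
        for name, passed in sorted(self.validation_results.items()):
            if not passed:
                gaps.append(f"validation failed: {name}")
        for case in sorted(self.edge_cases_required - self.edge_cases_covered):
            gaps.append(f"edge case not covered: {case}")
        return gaps

    def is_done(self) -> bool:
        """Done means the evidence is complete, not merely that the code runs."""
        return not self.missing_evidence()


# Example: the code may run, but one required edge case has no evidence yet.
package = ProofPackage(
    feature="bulk export",
    reasoning="Export respects row-level permissions by construction.",
    validation_results={"unit tests": True, "policy scan": True},
    edge_cases_required={"empty dataset", "revoked user"},
    edge_cases_covered={"empty dataset"},
)
print(package.is_done())
print(package.missing_evidence())
```

The point of the design is that “done” becomes a query over evidence rather than a status someone sets by hand.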

This demands a new mindset from PMs. Stop asking, “Did we build what I asked for?” Start asking, “Can we prove what we built behaves safely under uncertainty?” The PM’s artifact becomes the audit trail—the proof—not just the output.

The Audit Note: In the age of synthetic labor, a product manager’s primary duty is no longer the delivery of features, but the certification of system behavior through deterministic proof chains.

Measuring the Right Things

Velocity is obsolete as a measure of progress. AI inflates productivity metrics while masking defects. The new KPIs for the AI-powered team emphasize inspection, not speed:

- Audit Depth: How rigorously humans probe the AI’s output for edge cases.

- Recovery Time: How quickly a synthetic bug is detected and fixed.

- Policy Compliance Rate: What fraction of outputs pass through the “Sovereign Zone” without violating data or logic boundaries.

- Trust-to-Output Ratio: How much human correction is required per unit of AI labor. The less correction needed, the more the output can be trusted.

These metrics transform PM dashboards from speedometers into truth thermometers.
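Three of these KPIs reduce to simple arithmetic over review records, which a rough sketch can make concrete. Everything here is an assumption made for illustration: the `OutputRecord` and `Incident` shapes, the choice to count “units of AI labor” as generated items, and the convention of returning zero when there is no data. Audit Depth is left out because it depends on how a team chooses to score the rigor of a review.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class OutputRecord:
    """One AI-generated output, as a hypothetical review log might record it."""
    policy_violations: int   # violations found when the output was reviewed
    units_produced: int      # assumed unit of AI labor, e.g. generated tasks
    human_corrections: int   # corrections a human had to make


@dataclass
class Incident:
    """One synthetic bug, from introduction to fix (hypothetical shape)."""
    introduced_at: datetime
    fixed_at: datetime


def policy_compliance_rate(records: list[OutputRecord]) -> float:
    """Fraction of outputs with zero policy violations (0.0 if no records)."""
    if not records:
        return 0.0
    passed = sum(1 for r in records if r.policy_violations == 0)
    return passed / len(records)


def corrections_per_unit(records: list[OutputRecord]) -> float:
    """Human corrections per unit of AI labor; lower suggests more trust."""
    total_units = sum(r.units_produced for r in records)
    if total_units == 0:
        return 0.0
    return sum(r.human_corrections for r in records) / total_units


def mean_recovery_time(incidents: list[Incident]) -> timedelta:
    """Average time from a bug being introduced to it being fixed."""
    if not incidents:
        return timedelta(0)
    total = sum((i.fixed_at - i.introduced_at for i in incidents), timedelta(0))
    return total / len(incidents)


records = [
    OutputRecord(policy_violations=0, units_produced=100, human_corrections=5),
    OutputRecord(policy_violations=2, units_produced=300, human_corrections=15),
    OutputRecord(policy_violations=0, units_produced=50, human_corrections=0),
    OutputRecord(policy_violations=0, units_produced=50, human_corrections=0),
]
print(policy_compliance_rate(records))  # 3 of 4 outputs pass
print(corrections_per_unit(records))    # 20 corrections over 500 units
```

A real dashboard would pull these records from review tooling; the sketch only shows that each metric needs an explicit definition before it can be tracked.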

Managing Synthetic Labor

As AI assumes the role of the rapid producer, humans evolve into Checkers—and PMs become Proof Architects. A Proof Architect spends 20% of their effort defining “what to build” and 80% ensuring “how we prove it’s right.” This transition mirrors the shift in engineering from creation to certification.

For junior PMs, the learning curve flips. Instead of writing the spec themselves, they’ll analyze why the AI-written spec fails. This new apprenticeship—spec stress-testing—builds deeper comprehension of the business “why,” not just procedural “how.”

Culture of Adversarial Review

In teams embracing Architecture of Proof, PMs lead adversarial product reviews, where the goal isn’t harmony—it’s challenge. These sessions probe for failure modes, policy leaks, and misaligned assumptions before release. The ethos: break the logic before it breaks the customer.

The shift from output to proof isn’t just a managerial technique—it’s a governance revolution. As synthetic labor automates execution, leadership must automate verification. Product managers stand at that frontier: custodians of trust in increasingly opaque pipelines. Their measure of success isn’t velocity—it’s validity.
