Perplexity Teardown: The Search for Verification

Most AI products optimize for speed. Perplexity AI optimizes for speed you can check.

That difference changes the product.

When large language models can generate fluent answers instantly, producing an answer is no longer the bar. The real question is whether the system can produce an answer and show why it should be trusted. That shift turns citations from a feature into a requirement, and verification into the core user experience.

Why does verification matter?

The failure mode of most AI systems is not that they are slow. It is that they are confident without being grounded.

You see it in three common ways:

- Answers that sound authoritative but are partially or entirely incorrect

- Information that is outdated but presented as current

- Citations that exist, but don’t actually support the claim

These are not edge cases. They are structural outcomes of systems optimized for fluency over verification.

Perplexity AI is built around a different premise: answers should be verifiable by default. That means evidence is not buried or optional—it is inline, immediate, and inseparable from the answer itself.

Managing the trade-off: speed vs. citation integrity

The core tension in AI search is structural:

- Speed comes from generating answers quickly

- Trust comes from grounding those answers in real sources

Doing both at once is hard.

Most systems resolve this by prioritizing one side:

- Chatbots optimize for speed, then attach citations afterward (if at all)

- Traditional search optimizes for evidence, but pushes synthesis onto the user

Perplexity AI tries to collapse that trade-off. The goal is not speed or verification—it is speed with built-in verification.

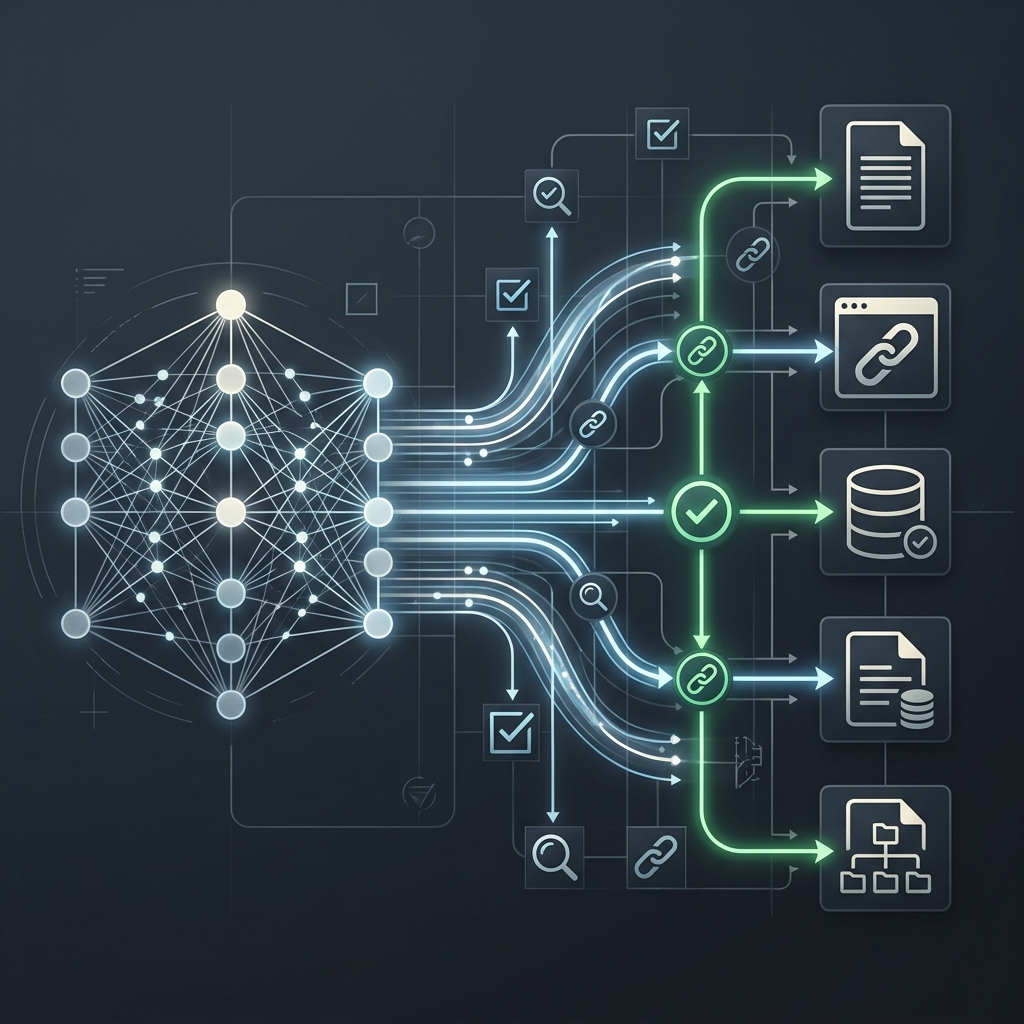

That only works if the system is designed so that retrieval and generation happen as a single loop, not as separate steps.

This is where Vespa.ai, the engine handling retrieval and ranking in this design, becomes essential.

Instead of:

- Generate an answer

- Go find sources to support it

Perplexity’s system effectively runs these steps in order:

- Retrieve high-quality, relevant sources in real time

- Rank and filter them aggressively for precision

- Generate the answer conditioned on those sources

- Attach citations that are already part of the reasoning process
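The retrieval-first ordering above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Perplexity's or Vespa's actual implementation: the `Source` type, the term-overlap scoring, the `min_score` and `top_k` values, and the prompt wording are all hypothetical, and the generation call itself is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    """A retrieved document (hypothetical shape, for illustration only)."""
    url: str
    text: str


def terms(text: str) -> set[str]:
    """Lowercase the text and split it into punctuation-free terms."""
    cleaned = (word.strip(".,;:!?()").lower() for word in text.split())
    return {word for word in cleaned if word}


def retrieve(query: str, corpus: list[Source]) -> list[tuple[float, Source]]:
    """Score every source by the fraction of query terms it contains."""
    query_terms = terms(query)
    if not query_terms:
        return []
    return [(len(query_terms & terms(src.text)) / len(query_terms), src) for src in corpus]


def rank_and_filter(scored, min_score: float = 0.5, top_k: int = 2) -> list[Source]:
    """Drop weak matches, then keep the few best: fewer, better sources."""
    kept = sorted((pair for pair in scored if pair[0] >= min_score),
                  key=lambda pair: pair[0], reverse=True)
    return [src for _, src in kept[:top_k]]


def build_grounded_prompt(query: str, sources: list[Source]) -> str:
    """Number the sources so the generated answer can cite them as [1], [2], ..."""
    numbered = [f"[{i}] {src.text} ({src.url})" for i, src in enumerate(sources, start=1)]
    return ("Answer using only the numbered sources and cite them by number.\n"
            + "\n".join(numbered)
            + f"\n\nQuestion: {query}")


# Toy corpus; the URLs are placeholders.
corpus = [
    Source("https://example.com/a", "Vespa supports hybrid search over text and vectors."),
    Source("https://example.com/b", "Bananas are rich in potassium."),
    Source("https://example.com/c", "Hybrid search combines text and vector retrieval."),
]
query = "hybrid search text vector"
selected = rank_and_filter(retrieve(query, corpus))
prompt = build_grounded_prompt(query, selected)
print(prompt)
```

The point of the sketch is the ordering: the citation numbers exist before any answer text does, so the generator can only cite sources it was actually given.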

This changes the economics of latency and trust:

- Latency is controlled upstream: fast retrieval + ranking ensures generation doesn’t stall

- Citation integrity is preserved downstream: the model is grounded during generation, so citations are not patched on afterward

- Precision is prioritized over breadth: fewer, better sources instead of many weak ones

The result is a system where:

- citations are not decorative—they are structural

- speed does not come at the cost of grounding

- and verification does not feel like extra work for the user

Another way to see it:

The faster the retrieval layer, the less the model has to “guess.” The less the model has to guess, the more the citations actually mean something.

That is the real balancing act. Speed is achieved not by skipping verification, but by making verification fast enough to be part of the answer itself.

The hidden architecture behind trust

Vespa.ai matters here not just as infrastructure, but as an enabler of product behavior.

Vespa allows retrieval, ranking, and inference to happen in the same loop, in real time, at scale. That matters because verification is not a UI problem. It is a systems problem.

For citations to be meaningful:

- The system must retrieve the right sources, not just any sources

- It must rank them with enough precision to support specific claims

- It must do this fast enough that the experience still feels immediate

If retrieval is weak, citations become cosmetic. If latency is too high, verification breaks the experience. Vespa helps resolve that tension by making real-time, high-precision retrieval feasible within the product’s latency budget.

In that sense, it doesn’t just “power search.” It enables a category of systems where evidence-backed answers do not have to sacrifice responsiveness.
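To make "cosmetic citation" concrete, here is a rough sketch of a check that flags claims whose cited source does not appear to support them. It assumes a crude lexical-overlap proxy for support; a production system would more plausibly use a trained entailment or relevance model. The function names, the stopword list, and the `threshold` value are all hypothetical.

```python
# Small illustrative stopword list (an assumption, not a standard set).
STOPWORDS = {"a", "an", "and", "are", "in", "is", "of", "on", "so", "the", "to"}


def content_terms(text: str) -> set[str]:
    """Lowercase, strip punctuation, and drop common stopwords."""
    cleaned = (word.strip(".,;:!?()").lower() for word in text.split())
    return {word for word in cleaned if word and word not in STOPWORDS}


def support_score(claim: str, source_text: str) -> float:
    """Fraction of the claim's content terms that also appear in the source."""
    claim_terms = content_terms(claim)
    if not claim_terms:
        return 0.0
    return len(claim_terms & content_terms(source_text)) / len(claim_terms)


def unsupported_citations(claims, sources, threshold: float = 0.6):
    """Return the (claim, source_id) pairs whose cited source scores below threshold."""
    flagged = []
    for claim, source_id in claims:
        source_text = sources.get(source_id, "")  # a missing source supports nothing
        if support_score(claim, source_text) < threshold:
            flagged.append((claim, source_id))
    return flagged


# Toy data: the second claim cites a source that says nothing about it.
sources = {
    "s1": "The index is updated continuously so new pages are searchable within minutes.",
    "s2": "Bananas are rich in potassium.",
}
claims = [
    ("The index is updated continuously.", "s1"),
    ("Ranking runs on GPUs.", "s2"),
]
flagged = unsupported_citations(claims, sources)
print(flagged)
```

Word overlap will miss paraphrases and can be fooled by shared vocabulary, so treat this as an illustration of where such a check sits, not of how well it works.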

Why this is hard to replicate

This approach is easy to describe and difficult to execute.

To make it work, the system needs:

- Continuously updated indexing (so sources are fresh)

- Hybrid search (text + vector) to find relevant evidence quickly

- Low-latency ranking and filtering to maintain precision

- Tight integration with generation so citations are intrinsic

That combination is what systems like Vespa.ai enable—and why this is not just a UX choice, but an architectural one.
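As one illustration of the hybrid search requirement, the sketch below merges a keyword ranking and a vector-similarity ranking with reciprocal rank fusion (RRF). RRF is a common fusion technique chosen here for the example; nothing in this article says it is what Perplexity or Vespa actually uses. The document IDs and the two input rankings are made up, and `k = 60` is just a commonly used default.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID to keep the order deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical outputs of a keyword ranker and an embedding-based ranker.
text_ranking = ["doc_a", "doc_b", "doc_c"]
vector_ranking = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([text_ranking, vector_ranking])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents found by both retrievers (`doc_a`, `doc_c`) outrank those found by only one, which is the behavior a precision-first hybrid retriever wants.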

The product lesson

The deeper lesson here is architectural: trust is not a UI feature—it is a system property.

If you want users to trust AI outputs, you need to design for verification from the ground up:

- Verification must be default, not optional

- Evidence must be inline, not buried

- Retrieval must optimize for precision, not just recall

- Ranking must be selective enough to support specific claims

- Latency budgets must include proof, not just generation

This is what distinguishes a chatbot from a retrieval-and-verification system. The model generates the answer, but the retrieval stack determines whether that answer is credible.
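The last item, a latency budget that includes proof, can be made concrete with some simple arithmetic. The sketch below is only an illustration: the stage names and every millisecond figure are invented for the example, not measurements of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """End-to-end budget split across stages (all values hypothetical)."""
    total_ms: float
    retrieval_ms: float
    ranking_ms: float
    citation_check_ms: float

    @property
    def generation_ms(self) -> float:
        """Time left for generation once the verification stages are paid for."""
        verification_ms = self.retrieval_ms + self.ranking_ms + self.citation_check_ms
        return self.total_ms - verification_ms

    def is_feasible(self, min_generation_ms: float) -> bool:
        """True if generation still gets at least the time it needs."""
        return self.generation_ms >= min_generation_ms


# Fast retrieval leaves most of the budget for generation.
fast = LatencyBudget(total_ms=1500, retrieval_ms=100, ranking_ms=50, citation_check_ms=50)
# Slow retrieval eats the budget before generation starts.
slow = LatencyBudget(total_ms=1500, retrieval_ms=600, ranking_ms=50, citation_check_ms=50)

print(fast.generation_ms, fast.is_feasible(min_generation_ms=1000))
print(slow.generation_ms, slow.is_feasible(min_generation_ms=1000))
```

Under these made-up numbers the same total budget is workable with fast retrieval and unworkable with slow retrieval, which is why retrieval speed decides whether verification can stay in the loop.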

The point of the product

Perplexity AI is not just trying to answer questions. It is trying to make answers evidence-backed rather than guessed, and to make that evidence visible to the user.

That requires more than a good model. It requires a system where:

- retrieval is fast

- ranking is precise

- and verification is built into the interaction itself

Vespa.ai is part of the machinery that makes that possible—not visible to users, but essential to whether the experience holds up under scrutiny.

What happens next

If this approach works, “answers with citations” will not remain a differentiator for long. It will become table stakes.

The competition will shift to harder questions:

- Whose sources are better?

- How well does the system synthesize across conflicting evidence?

- Can it show not just sources, but reasoning?

- Does it help users judge quality, not just access links?

In that world, verification is no longer enough. The next frontier is judgment.

The real insight

The core shift is simple but consequential:

AI answers are easy. Answers you can trust are engineered.

And that trust is not created by the answer alone. It is created by the system that proves the answer is worth trusting.
