Writing Proof-Oriented Product Requirements in a Multi-Agent World

The Shift Most PMs Have Not Internalized Yet

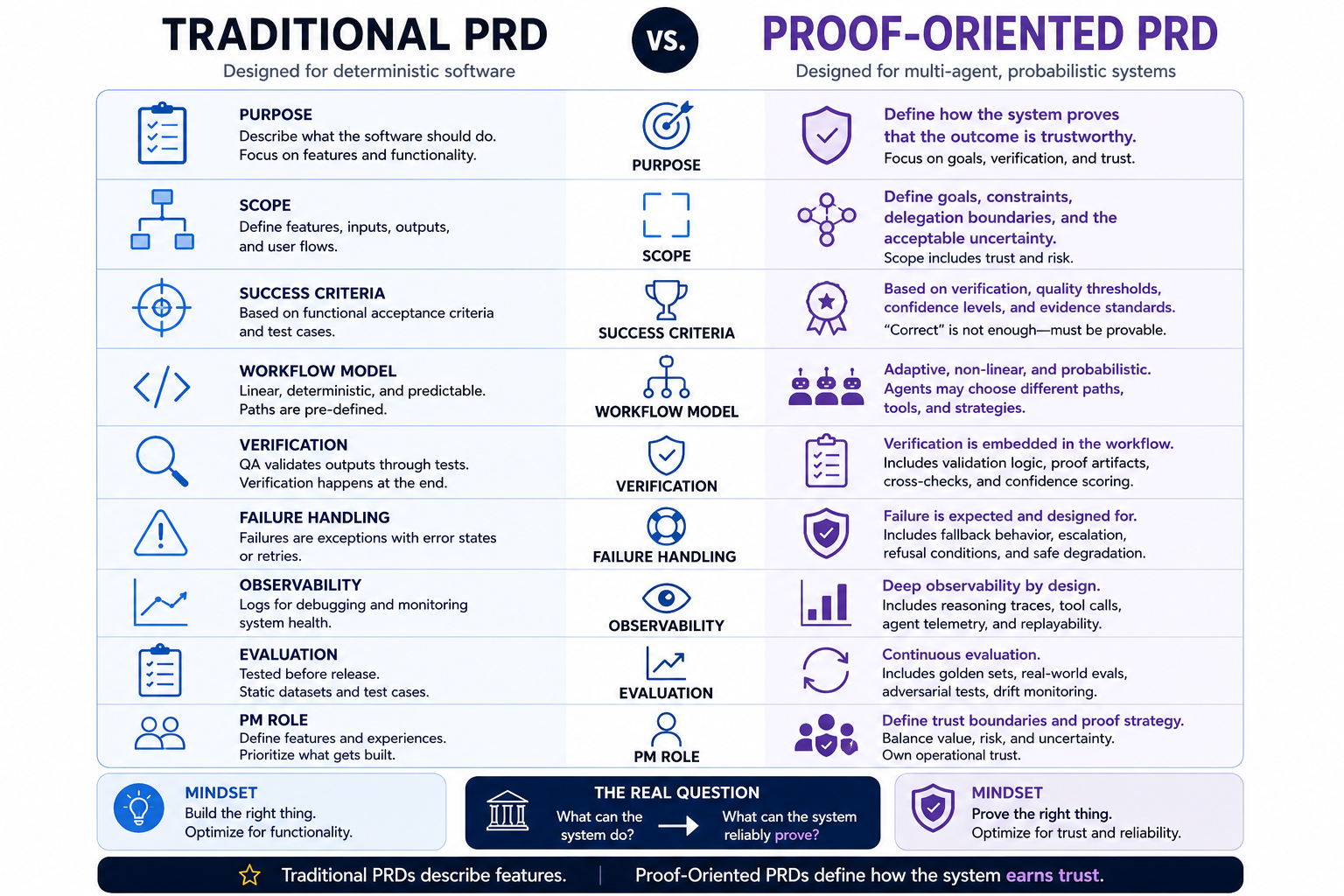

Traditional PRDs were designed for deterministic software. Agentic systems break that assumption completely.

In traditional software development, product requirements were written around a relatively stable mental model. A PM defined the inputs, expected outputs, acceptance criteria, and edge cases. Engineering implemented the logic, QA validated the behavior, and if the tests passed, the requirement was considered complete.

That model worked because the system itself was fundamentally deterministic. The same input produced the same output. Workflows were linear. Execution paths were predictable. Requirements could describe behavior with a high degree of precision.

That assumption no longer holds in agentic AI systems.

In a multi-agent world, a PM is no longer specifying a fixed sequence of behaviors. Instead, the PM is defining goals, constraints, delegation boundaries, verification rules, escalation logic, and acceptable uncertainty. The requirement document stops being only a feature specification and starts becoming something much deeper: a proof system.

The PM’s role shifts from asking:

“What should the software do?”

to asking:

“How does the system prove the outcome is trustworthy?”

That is not a small evolution in product management. It is a fundamentally different discipline.

Why Traditional PRDs Fail in Agentic Systems

Traditional PRDs assume workflows are linear, execution paths are predictable, components behave consistently, and outputs are reproducible. Multi-agent systems violate all of those assumptions simultaneously.

An agent may choose different tools, retrieve different context, delegate tasks dynamically, synthesize information in different ways, or recover from failure using alternative reasoning paths. Two executions of the same task may generate different intermediate steps and different reasoning traces while still arriving at acceptable outcomes.

The workflow itself becomes adaptive.

That changes the nature of product definition entirely. Behavior is no longer fully specifiable. Correctness becomes probabilistic. A requirement document can no longer focus only on describing features because the central problem is no longer execution alone. The real problem becomes verification.

A useful way to think about this is through a simple example. Imagine a multi-agent financial research workflow. One agent retrieves market data, another summarizes company performance, another validates regulatory disclosures, and a final orchestration layer synthesizes recommendations. Each layer introduces uncertainty. A retrieval issue in the first step may quietly distort the reasoning quality of every downstream agent.
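A rough back-of-the-envelope sketch makes the compounding visible. It assumes, purely for illustration, that each of the four stages is correct independently of the others; the 0.95 figures are hypothetical, not measurements, and real stages are rarely independent.

```python
import math

def end_to_end_reliability(stage_reliabilities):
    """Probability that every stage is correct, assuming independent stages."""
    return math.prod(stage_reliabilities)

# Hypothetical per-stage figures for retrieval, summarization,
# disclosure validation, and orchestration.
stages = [0.95, 0.95, 0.95, 0.95]
print(f"{end_to_end_reliability(stages):.3f}")  # 0.815
```

Under these assumptions, four stages that each look reliable in isolation produce a workflow that is right only about four times out of five.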

The challenge is no longer simply whether the agents complete the workflow. The challenge is proving that the reasoning chain remained trustworthy end to end.

That is the real shift.

Traditional PRDs describe features. Agentic systems require verification.

The Rise of Proof-Oriented Product Design

In deterministic software, the architecture often looked like this:

Requirements → Logic → Output

In agentic systems, the architecture changes fundamentally:

Goal → Agent reasoning → Verification → Controlled outcome

Verification becomes a first-class product layer.

Without it, agents hallucinate silently, workflows drift, delegation chains become opaque, and failures become extremely difficult to localize. This is one of the main reasons many early agentic products feel impressive in demos but unstable in production. They optimize execution before proof.

That distinction matters more than most teams realize.

A proof-oriented system is not a system that never fails. That is unrealistic in probabilistic environments. A proof-oriented system is one that can explain, validate, contain, and recover from failure when it occurs.

The requirement document therefore evolves from describing behavior to defining how trust is operationalized.

What a Proof-Oriented PRD Actually Contains

A modern AI PRD increasingly needs to define much more than feature behavior.

It starts with goal definition. Traditional requirements often describe tasks mechanically, such as “Generate financial report.” In agentic systems, that is insufficient. A stronger requirement might say:

“Generate a source-grounded financial risk summary with citation-backed claims, confidence scoring, and escalation for unsupported assertions.”

That single sentence changes the nature of the system. The goal no longer describes only the output. It embeds evidence expectations, verification requirements, and operational safeguards directly into the product definition.
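One way to see how such a goal becomes checkable is to express it as data. The sketch below is illustrative only: the `Claim` type, the `claims_to_escalate` helper, the 0.7 confidence floor, and the sample claims are all assumptions, not part of any particular product.

```python
from dataclasses import dataclass, field

@dataclass
class Claim:
    text: str
    citations: list = field(default_factory=list)  # source identifiers backing the claim
    confidence: float = 0.0                        # 0.0 to 1.0; scoring method is up to the team

def claims_to_escalate(claims, min_confidence=0.7):
    """Return claims that have no citation or fall below the confidence floor."""
    return [c for c in claims if not c.citations or c.confidence < min_confidence]

# Made-up claims, for illustration only.
summary = [
    Claim("Revenue grew year over year.", ["filing-001"], 0.92),
    Claim("Litigation exposure is immaterial.", [], 0.88),
]
for claim in claims_to_escalate(summary):
    print("ESCALATE:", claim.text)
```

Here the second claim is escalated even though its confidence is high, because the requirement asks for citation-backed claims, not merely confident ones.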

The PRD must also define delegation boundaries. Multi-agent systems require explicit autonomy limits because agents naturally expand into areas they were never originally intended to control. A research agent may summarize findings. A compliance agent may validate policy references. A recommendation engine may suggest actions. Final approval, however, may still remain human.

These distinctions are not implementation details. They are product decisions that define operational trust.
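A delegation boundary can be written down as an explicit permission table. The following is a minimal sketch under assumed names: the agent identifiers and action strings are hypothetical and simply mirror the roles described above.

```python
# Hypothetical permission table: which actor may perform which action.
ALLOWED_ACTIONS = {
    "research_agent": {"summarize_findings"},
    "compliance_agent": {"validate_policy_reference"},
    "recommendation_engine": {"suggest_action"},
    "human_reviewer": {"approve_final"},
}

def is_permitted(actor, action):
    """Deny by default: unknown actors and unlisted actions are refused."""
    return action in ALLOWED_ACTIONS.get(actor, set())

print(is_permitted("recommendation_engine", "suggest_action"))  # True
print(is_permitted("recommendation_engine", "approve_final"))   # False
```

The deny-by-default choice is the product decision here: an agent gains a capability only when someone deliberately adds it to the table.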

Verification requirements become even more critical. Every meaningful output increasingly needs validation logic, confidence thresholds, proof artifacts, and cross-check mechanisms. That may include citation enforcement, SQL cross-validation, source grounding, reasoning trace storage, structured outputs, or external fact verification.

The important shift is that evidence itself becomes part of the product contract.

Without verification requirements, agentic systems eventually degrade into sophisticated guess generators.

Failure containment becomes equally important. Traditional PRDs often treat failure as an exception. Agentic systems require explicit failure design because failure is guaranteed, not hypothetical.

The product requirement therefore needs to specify fallback behavior, retry limits, escalation logic, refusal conditions, uncertainty thresholds, and safe degradation paths. A single sentence such as:

“If confidence drops below the defined threshold, route to human review instead of autonomous execution.”

may ultimately matter more than entire pages of feature specification.
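As a sketch of how that sentence might translate into logic, the routing function below combines a confidence threshold with a retry limit. The `Route` names, the 0.8 threshold, and the two-retry limit are assumptions chosen for illustration.

```python
from enum import Enum

class Route(Enum):
    EXECUTE = "execute"
    RETRY = "retry"
    HUMAN_REVIEW = "human_review"

def route(confidence, retries_used, threshold=0.8, max_retries=2):
    """Decide the next step for one output, given its confidence score."""
    if confidence >= threshold:
        return Route.EXECUTE          # confident enough to act autonomously
    if retries_used < max_retries:
        return Route.RETRY            # try again, but only a bounded number of times
    return Route.HUMAN_REVIEW         # retries exhausted: escalate instead of acting

print(route(0.91, 0))  # Route.EXECUTE
print(route(0.55, 0))  # Route.RETRY
print(route(0.55, 2))  # Route.HUMAN_REVIEW
```

Every branch ends in a defined state, so a low-confidence output can never fall through to autonomous execution.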

This represents one of the biggest mindset shifts in AI product management. The goal is no longer preventing all failure. The goal is preventing uncontrolled failure.

Traditional PRD vs. Proof-Oriented PRD

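The shift in how product requirements must be structured for agentic systems comes down to a few contrasts. A traditional PRD describes features, assumes reproducible outputs, treats failure as an exception, and leaves validation to a final QA stage. A proof-oriented PRD defines verification, treats correctness as probabilistic, designs failure containment explicitly, and builds in continuous evaluation from the beginning.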

Why Observability Becomes a Product Requirement

One of the most underappreciated shifts in agentic systems is the importance of observability.

If teams cannot inspect agent behavior, they cannot improve it.

Multi-agent systems often fail diffusely rather than visibly. One agent may retrieve weak context. Another agent may summarize incorrectly. A third agent may execute against flawed assumptions. Externally, the workflow may still appear coherent while internally compounding error across layers.

Without strong observability, teams end up fixing symptoms instead of causes.

That is why modern AI PRDs increasingly need to define logging expectations, reasoning trace visibility, tool-call telemetry, replayability, auditability, and evaluation hooks as part of the product definition itself.

Debugging an agentic workflow without observability is like debugging a distributed system without logs.
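To make "tool-call telemetry" concrete, here is a minimal sketch of a structured trace record. The field names, the `trace_event` helper, and the example agent and tool names are assumptions; a production system would more likely write to a tracing or logging backend than to an in-memory list.

```python
import json
import time
import uuid

def trace_event(run_id, agent, tool, inputs, output, sink):
    """Append one structured tool-call record to `sink` (a plain list here)."""
    event = {
        "run_id": run_id,                # ties together every event in one workflow run
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "agent": agent,
        "tool": tool,
        "inputs": inputs,
        "output": output,
    }
    sink.append(json.dumps(event))
    return event

log = []
run_id = str(uuid.uuid4())
trace_event(run_id, "retrieval_agent", "market_data_lookup",
            {"ticker": "EXAMPLE"}, {"rows": 12}, log)
print(len(log))  # 1
```

Because every record carries the same `run_id`, a team can reconstruct the sequence of calls behind a single answer and see which agent contributed which step.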

Why Evaluation Can No Longer Be an Afterthought

Traditional testing models assume relatively stable outputs. Agentic systems invalidate that assumption because models drift, retrieval quality changes, prompts evolve, workflows mutate, and user behavior shifts over time.

This means evaluation can no longer be treated as a final QA stage.

A proof-oriented PRD increasingly needs to define continuous evaluation strategies from the beginning. That includes golden datasets, adversarial testing, real-world evaluation, drift monitoring, production telemetry, and longitudinal accuracy tracking.

The system is never truly finished. It must continuously prove reliability over time.

That is a fundamentally different operational model from traditional software delivery.
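A golden dataset and a drift check can be sketched in a few lines. Everything below is a toy: the questions, the stand-in `predict` function, the 0.9 baseline, and the 0.05 tolerance are hypothetical, and exact-match scoring is a simplification that real evaluations often replace with graded or rubric-based scoring.

```python
def accuracy(predict, golden):
    """Fraction of golden examples where predict(question) equals the expected answer."""
    correct = sum(1 for question, expected in golden if predict(question) == expected)
    return correct / len(golden)

def has_drifted(baseline, current, tolerance=0.05):
    """Flag an accuracy drop larger than `tolerance` below the recorded baseline."""
    return (baseline - current) > tolerance

# Toy golden set and a stand-in for the system under test.
golden = [("2+2", "4"), ("3*3", "9"), ("1+1", "2"), ("capital of France", "Paris")]
answers = {"2+2": "4", "3*3": "6", "1+1": "2", "capital of France": "Paris"}
predict = answers.get

current = accuracy(predict, golden)
print(current)                     # 0.75
print(has_drifted(0.9, current))   # True
```

Running a check like this on a schedule, rather than once before launch, is what turns evaluation from a QA stage into an ongoing proof of reliability.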

The Deeper Product Shift

This changes the role of the PM significantly.

PMs are no longer only defining features, workflows, prioritization, and UX behavior. Increasingly, they are defining acceptable uncertainty, operational trust boundaries, evidence requirements, escalation policies, and risk allocation.

That starts looking much closer to systems design, governance engineering, reliability architecture, and operational control theory.

This is why AI product management increasingly overlaps with architecture decisions.

What appears to be an architecture problem is often actually a product problem in disguise.

Should the model be allowed to make this decision autonomously? Should outputs require verification before execution? Should uncertain cases escalate to humans? Should the workflow fail open or fail closed?

Those are not purely engineering questions anymore. They define trust, liability, operational risk, and customer confidence. In other words, they define the product itself.
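The fail-open versus fail-closed question can be reduced to a single flag. This is a minimal sketch under assumed names: `guarded_execute`, `verify`, and `action` are hypothetical callables, not part of any specific framework.

```python
def guarded_execute(action, verify, fail_closed=True):
    """Run `action` only if `verify` approves.

    If the verifier itself errors, `fail_closed` decides the outcome:
    True blocks the action, False lets it proceed.
    """
    try:
        approved = verify()
    except Exception:
        approved = not fail_closed
    return action() if approved else None

def broken_verifier():
    raise RuntimeError("verification service unavailable")

print(guarded_execute(lambda: "executed", broken_verifier))                     # None
print(guarded_execute(lambda: "executed", broken_verifier, fail_closed=False))  # executed
```

The code is trivial; the choice of default is not. Who sets `fail_closed`, and for which workflows, is exactly the kind of decision that belongs in the requirement.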

You are no longer only designing experiences.

You are designing how the system earns trust.

Why Multi-Agent Systems Create Hidden Failure Cascades

Single-agent systems often fail visibly.

Multi-agent systems fail diffusely.

One agent retrieves weak context. Another synthesizes incomplete reasoning. A third acts on the flawed synthesis. The orchestration layer still produces an answer that sounds coherent and intelligent.

This creates a dangerous illusion. The system sounds confident. The reasoning chain appears sophisticated. The workflow looks operationally successful. But no layer has actually proven correctness.

The more advanced the orchestration becomes, the harder hidden failures become to localize.

That is one of the biggest emerging operational risks in agentic AI systems.

Proof-oriented requirements exist precisely to stop this type of failure cascade. They force teams to define where evidence must exist, where validation occurs, where uncertainty is tolerated, and where escalation becomes mandatory.

Without that structure, multi-agent systems become increasingly difficult to trust at scale.
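One way to picture "where validation occurs" is a pipeline with a gate after every stage. The sketch below is an assumption-laden illustration: `GateFailure`, `run_pipeline`, and the two toy stages are hypothetical, and the lambdas stand in for real agents.

```python
class GateFailure(Exception):
    """Raised when a stage's output fails its verification gate."""
    def __init__(self, stage, reason):
        super().__init__(f"{stage}: {reason}")
        self.stage = stage
        self.reason = reason

def run_pipeline(stages, payload):
    """Run (name, step, check) triples in order; stop at the first failed check."""
    for name, step, check in stages:
        payload = step(payload)
        reason = check(payload)  # None means the gate passed
        if reason is not None:
            raise GateFailure(name, reason)
    return payload

# Toy stages: retrieval returns nothing, so its gate should stop the run.
stages = [
    ("retrieve", lambda q: {"query": q, "docs": []},
     lambda p: None if p["docs"] else "no documents retrieved"),
    ("summarize", lambda p: {**p, "summary": "..."},
     lambda p: None),
]

try:
    run_pipeline(stages, "Q3 risk factors")
except GateFailure as failure:
    print("stopped at:", failure.stage)  # stopped at: retrieve
```

Because the run stops at the first failed gate and names the stage, the weak retrieval is caught where it happened instead of surfacing later as a coherent-sounding but unsupported answer.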

The Emerging PM Skill

The next generation of strong AI PMs will not simply be the people who write the best prompts.

They will be the people who can define trust boundaries, design verification systems, operationalize evaluation, structure fallback logic, allocate uncertainty intelligently, and build systems that remain reliable under probabilistic behavior.

The competitive advantage shifts from:

“Can the agent do the task?”

to:

“Can the system prove the task was done correctly?”

That is a much harder problem.

And a far more defensible one.

As models become increasingly commoditized, execution capability alone becomes less differentiated. Trust infrastructure does not.

Bottom Line

In a multi-agent world, product requirements can no longer describe behavior alone.

They must define verification, accountability, evidence, containment, observability, escalation, and trust.

The PRD is evolving from a feature document into a proof document.

Because once agents can reason, retrieve, synthesize, delegate, and act autonomously, the real product question is no longer:

“What can the system do?”

It becomes:

“What can the system reliably prove?”

And in the agent era, trust will not come from intelligence alone.

It will come from systems that can prove their intelligence deserves to be trusted.
