AI Governance RACI: Who Owns What in a Production AI System

This post is part of the Governance Operating Model pillar.

The most common governance failure in production AI is not missing policy. It is missing ownership.

A policy says: "Models must be reviewed when performance degrades." Who initiates the review? Who has authority to suspend the model? Who communicates the outcome to operations? Who signs off on the remediation?

If those questions are answered by informal convention — whoever notices, whoever shouts loudest — you do not have a governance operating model. You have an incident waiting to discover its first responders.

A RACI framework resolves this before the incident.

The five core governance activities

Every production AI system requires five governance activities to function safely. Each must be explicitly owned.

1. Model review — periodic assessment of whether a model continues to meet its performance contract.

2. Rule governance — the process for reviewing, updating, and retiring rules as business conditions and regulations change.

3. Tier management — decisions about autonomy tier changes, both upgrades and downgrades.

4. Incident response — the process for detecting, containing, investigating, and reporting AI-related incidents.

5. Audit preparation — assembling the evidence required to respond to regulatory inquiries, internal audits, or customer complaints.

The RACI framework for each activity

Model review

Model review is the governance activity most often owned by the team that built the model — which is precisely the wrong arrangement. The team that built the model has an incentive to find it acceptable.

Recommended ownership:

| Role | Function |
|---|---|
| Responsible | Model Risk or independent Data Science review |
| Accountable | Head of Model Risk or Chief Risk Officer |
| Consulted | ML Engineering (provides technical context), Business (provides outcome data) |
| Informed | Operations, Compliance, Product |

Triggers for unscheduled review: drift alerts, Population Stability Index (PSI) breaches, incident postmortems, and regulatory changes affecting the model's scope.
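A PSI breach is one trigger that can be checked mechanically. The sketch below computes the Population Stability Index between a baseline and a current binned distribution and flags a review when it crosses a threshold. The function names and the 0.2 threshold are assumptions for illustration, not values from this post; set the threshold in your own performance contract.

```python
import math

# Hypothetical threshold; choose the real value in the model's performance contract.
PSI_REVIEW_THRESHOLD = 0.2

def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two distributions given as lists of bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Floor each proportion so an empty bin does not produce log(0).
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi

def needs_unscheduled_review(expected, actual, threshold=PSI_REVIEW_THRESHOLD):
    """True when the shift between baseline and current exceeds the threshold."""
    return population_stability_index(expected, actual) > threshold

baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at approval time
current = [0.10, 0.20, 0.30, 0.40]   # score distribution observed this week
print(needs_unscheduled_review(baseline, current))  # True (PSI is about 0.228)
```

The point of wiring the check to a named threshold is that the review starts because a rule fired, not because someone happened to notice.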

Output required: approval, conditional approval with a remediation timeline, or suspension. Not "we reviewed it and it seems fine."
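One way to make the three permitted outcomes enforceable is to record the decision in a type that rejects anything else. This is a minimal sketch; `ReviewOutcome`, `ModelReviewDecision`, and their fields are hypothetical names, not part of any framework described here.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional

class ReviewOutcome(Enum):
    APPROVED = "approved"
    CONDITIONAL = "conditional_approval"
    SUSPENDED = "suspended"

@dataclass(frozen=True)
class ModelReviewDecision:
    model_id: str
    outcome: ReviewOutcome
    decided_by: str
    remediation_due: Optional[date] = None

    def __post_init__(self):
        # A conditional approval without a deadline is just "it seems fine".
        if self.outcome is ReviewOutcome.CONDITIONAL and self.remediation_due is None:
            raise ValueError("conditional approval requires a remediation deadline")

decision = ModelReviewDecision(
    model_id="credit-scoring-v3",
    outcome=ReviewOutcome.CONDITIONAL,
    decided_by="Head of Model Risk",
    remediation_due=date(2025, 3, 31),
)
```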

Rule governance

Rules change more often than models in high-stakes environments. Regulations change. Business conditions change. Fraud patterns change. A rule that was correct in January may be incorrect in June.

Rule governance is often nobody's job. It falls between engineering (who implements rules) and risk/compliance (who specified them originally). The result is stale rules that enforce yesterday's policy.

Recommended ownership:

| Role | Function |
|---|---|
| Responsible | Risk/Compliance (owns the policy) with Engineering (owns the implementation) |
| Accountable | Chief Risk Officer or Head of Compliance |
| Consulted | Legal, Product, Operations |
| Informed | ML Engineering, Model Risk, Leadership |

Required process: version-controlled rule changes with a documented rationale, a test plan, and a sign-off record. Not a Jira ticket.
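The required process can be sketched as a record that a rule change must satisfy before it ships. The `RuleChange` fields and the `validate_rule_change` helper below are assumptions for illustration; in practice the record would live alongside the rule in version control.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class RuleChange:
    rule_id: str
    version: int
    rationale: str      # why the rule is changing
    test_plan: str      # how the change was verified
    approved_by: str    # sign-off record: who
    approved_at: datetime  # sign-off record: when

def validate_rule_change(change, previous_version):
    """Return a list of problems; an empty list means the record is complete."""
    problems = []
    if change.version != previous_version + 1:
        problems.append("version must increment by exactly one")
    for name in ("rationale", "test_plan", "approved_by"):
        if not getattr(change, name).strip():
            problems.append(f"{name} is missing")
    return problems

change = RuleChange(
    rule_id="velocity-limit",
    version=4,
    rationale="Regulatory threshold changed",
    test_plan="Replay the last 30 days of traffic against the new rule",
    approved_by="Head of Compliance",
    approved_at=datetime(2025, 1, 15, tzinfo=timezone.utc),
)
print(validate_rule_change(change, previous_version=3))  # []
```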

Tier management

Tier changes — moving a workflow from one autonomy level to another — are governance events. They require evidence to justify, stakeholders to approve, and documentation to record.

In practice, tier changes often happen informally: an engineer flips a configuration, a product manager approves it in Slack, and nobody records what evidence justified the change.

Recommended ownership:

| Role | Function |
|---|---|
| Responsible | Engineering (proposes and implements) with Risk (validates evidence) |
| Accountable | Product or Operations leadership |
| Consulted | Compliance, Model Risk |
| Informed | Leadership, Audit |

Required evidence for a tier upgrade: documented performance metrics meeting the defined bar, circuit breaker test results, risk sign-off, and a record of who approved the change and when.
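The evidence list above can be turned into a gate that a configuration change has to pass. This is a sketch under assumed names (`TierUpgradeRequest`, `missing_evidence`, the `claims-triage` workflow); how metrics and circuit breaker results are actually verified is left out.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional

@dataclass
class TierUpgradeRequest:
    workflow: str
    from_tier: int
    to_tier: int
    metrics_meet_bar: bool = False
    circuit_breaker_tested: bool = False
    risk_signoff_by: Optional[str] = None
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None

def missing_evidence(req: TierUpgradeRequest) -> List[str]:
    """List the evidence items still missing; empty means the upgrade may proceed."""
    missing = []
    if not req.metrics_meet_bar:
        missing.append("performance metrics meeting the defined bar")
    if not req.circuit_breaker_tested:
        missing.append("circuit breaker test results")
    if not req.risk_signoff_by:
        missing.append("risk sign-off")
    if not (req.approved_by and req.approved_at):
        missing.append("approval record (who and when)")
    return missing

request = TierUpgradeRequest(
    workflow="claims-triage", from_tier=1, to_tier=2,
    metrics_meet_bar=True, circuit_breaker_tested=True,
)
print(missing_evidence(request))
# ['risk sign-off', 'approval record (who and when)']
```

A Slack approval fails this gate by construction: there is no sign-off name and no timestamp on record.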

Incident response

Incident response is the activity most likely to be owned by "everyone" — which means nobody is in charge at the moment it matters.

Recommended ownership:

| Role | Function |
|---|---|
| Responsible | Engineering (containment), Operations (customer impact), Risk (exposure assessment) |
| Accountable | Operations or Engineering leadership (overall response) |
| Consulted | Legal, Compliance, Product |
| Informed | Leadership, Audit, Regulator (if required) |

The key principle: the first responder must have authority to act — including authority to suspend the model or downgrade the control tier — without waiting for a committee. Escalation is for communication and post-incident decisions, not for permission to stop a running system.
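In code terms, the principle means the suspend path has no approval gate: it acts first, then records and notifies. The sketch below is illustrative only; `ModelControl`, its fields, and the in-memory `outbox` are hypothetical stand-ins for whatever control plane and paging system you run.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

# Parties informed after the fact, taken from the Informed row above.
INFORMED_PARTIES = ("Leadership", "Audit")

@dataclass
class ModelControl:
    model_id: str
    status: str = "active"
    audit_log: List[Dict[str, str]] = field(default_factory=list)
    outbox: List[str] = field(default_factory=list)

    def suspend(self, responder: str, reason: str) -> None:
        # Act first: no approval check stands between the responder and the stop.
        self.status = "suspended"
        # Record who acted, why, and when, for the postmortem.
        self.audit_log.append({
            "action": "suspend",
            "by": responder,
            "reason": reason,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        # Escalation is notification, not a request for permission.
        for party in INFORMED_PARTIES:
            self.outbox.append(
                f"to {party}: {self.model_id} suspended by {responder}: {reason}"
            )

control = ModelControl("credit-scoring-v3")
control.suspend(responder="on-call engineer", reason="drift alert")
print(control.status)  # suspended
```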

Audit preparation

Audit preparation is reactive in most organizations — triggered by an audit request. In well-governed systems, it is an ongoing operational function that maintains a continuously audit-ready evidence package.

Recommended ownership:

| Role | Function |
|---|---|
| Responsible | Compliance (coordinates), Engineering (assembles technical evidence), Model Risk (provides model documentation) |
| Accountable | Chief Compliance Officer or Head of Risk |
| Consulted | Legal, Product |
| Informed | Leadership |

What continuous audit readiness looks like: a maintained decision trace that can produce any record within hours, not days; up-to-date model documentation; a log of all rule changes and tier decisions; incident postmortems that are already documented and filed.
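Continuous readiness can be monitored like any other operational signal. The sketch below flags evidence items that are missing or older than a maximum age. The item names mirror the list above, but the age limits and the `stale_evidence` helper are assumptions to adapt, not recommendations from this post.

```python
from datetime import date

# Hypothetical freshness limits, in days, for each evidence item.
EVIDENCE_MAX_AGE_DAYS = {
    "decision_trace": 1,
    "model_documentation": 90,
    "rule_change_log": 30,
    "tier_decision_log": 30,
    "incident_postmortems": 30,
}

def stale_evidence(last_updated, today):
    """Return evidence items that are missing or older than their limit."""
    stale = []
    for item, max_age in EVIDENCE_MAX_AGE_DAYS.items():
        updated = last_updated.get(item)
        if updated is None or (today - updated).days > max_age:
            stale.append(item)
    return stale

last_updated = {
    "decision_trace": date(2024, 6, 30),
    "model_documentation": date(2024, 1, 1),
    "rule_change_log": date(2024, 6, 15),
    "tier_decision_log": date(2024, 6, 20),
}
print(stale_evidence(last_updated, today=date(2024, 6, 30)))
# ['model_documentation', 'incident_postmortems']
```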

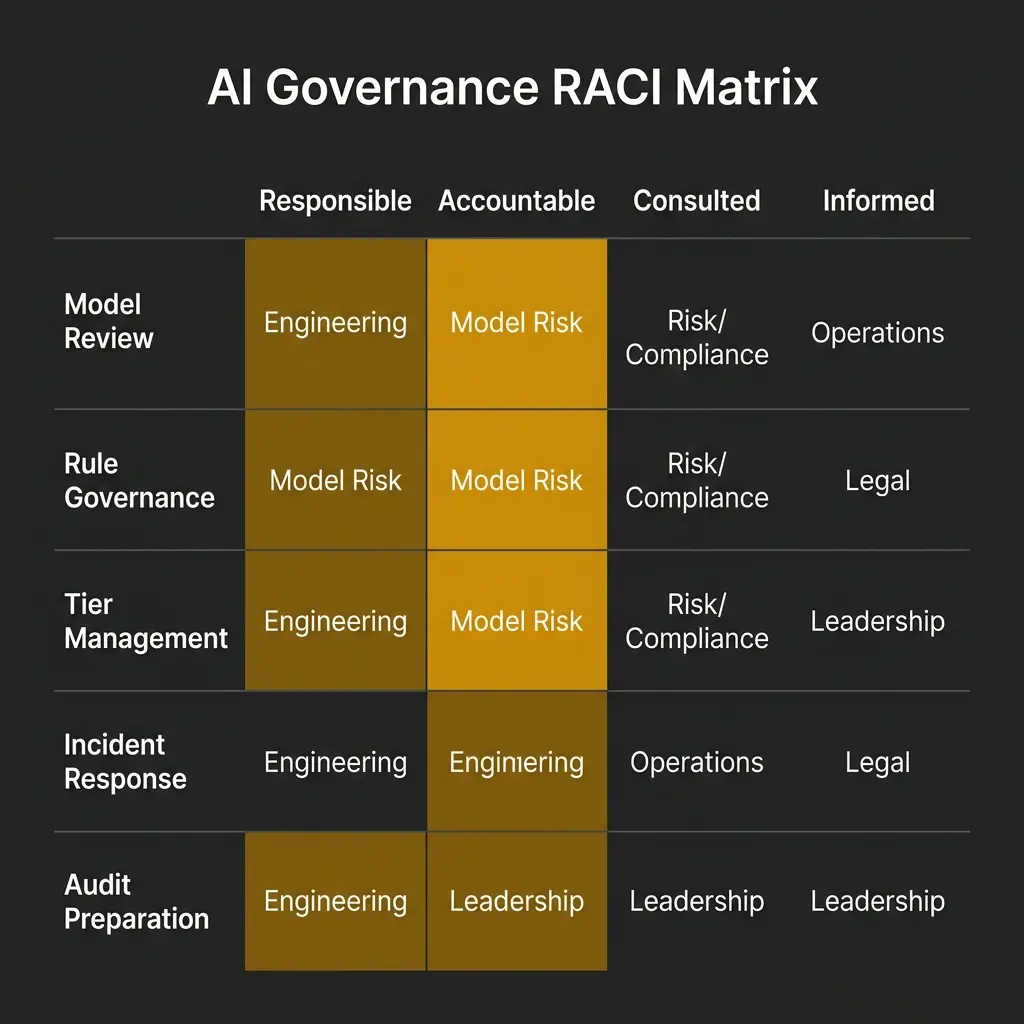

The full RACI at a glance

| Activity | Responsible | Accountable | Consulted | Informed |
|---|---|---|---|---|
| Model review | Model Risk | Head of Model Risk or CRO | ML Eng, Business | Ops, Compliance, Product |
| Rule governance | Risk/Compliance + Eng | CRO or Head of Compliance | Legal, Product, Ops | ML Eng, Model Risk, Leadership |
| Tier management | Eng + Risk | Product/Ops Leadership | Compliance, Model Risk | Leadership, Audit |
| Incident response | Eng + Ops + Risk | Ops/Eng Leadership | Legal, Compliance, Product | Leadership, Audit, Regulator (if required) |
| Audit preparation | Compliance + Eng + Model Risk | CCO or Head of Risk | Legal, Product | Leadership |
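A RACI that lives only in a document is easy to ignore. Encoding it as data lets tooling answer "who is accountable?" during an incident and lets a check fail when an activity has no clear owner. The sketch below uses the role assignments from the sections above. The dictionary layout, the helper names, and the rule of exactly one Accountable owner per activity (a common RACI convention) are assumptions, so where the post offers two candidates the sketch picks one.

```python
from typing import Dict, List

RACI: Dict[str, Dict[str, List[str]]] = {
    "model_review": {
        "R": ["Model Risk"], "A": ["CRO"],
        "C": ["ML Eng", "Business"], "I": ["Ops", "Compliance", "Product"],
    },
    "rule_governance": {
        "R": ["Risk/Compliance", "Eng"], "A": ["CRO"],
        "C": ["Legal", "Product", "Ops"], "I": ["ML Eng", "Model Risk", "Leadership"],
    },
    "tier_management": {
        "R": ["Eng", "Risk"], "A": ["Product/Ops Leadership"],
        "C": ["Compliance", "Model Risk"], "I": ["Leadership", "Audit"],
    },
    "incident_response": {
        "R": ["Eng", "Ops", "Risk"], "A": ["Ops/Eng Leadership"],
        "C": ["Legal", "Compliance", "Product"], "I": ["Leadership", "Audit", "Regulator"],
    },
    "audit_preparation": {
        "R": ["Compliance", "Eng", "Model Risk"], "A": ["CCO"],
        "C": ["Legal", "Product"], "I": ["Leadership"],
    },
}

def validate_raci(raci):
    """Return ownership gaps; an empty list means every activity is owned."""
    problems = []
    for activity, roles in raci.items():
        if len(roles.get("A", [])) != 1:
            problems.append(f"{activity}: needs exactly one Accountable owner")
        if not roles.get("R"):
            problems.append(f"{activity}: needs at least one Responsible owner")
    return problems

def accountable_for(activity):
    """Look up the single Accountable owner for an activity."""
    return RACI[activity]["A"][0]

print(validate_raci(RACI))                   # []
print(accountable_for("incident_response"))  # Ops/Eng Leadership
```

Running `validate_raci` in CI whenever the matrix changes is one way to catch an ownership gap before an incident does.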

Three signs your RACI is failing

1. The same incident produces a different response each time. First responders vary, communication paths vary, postmortem formats vary. The RACI is not functioning as an operational guide — it is a document that nobody references.

2. Governance activities happen later than they should. Model reviews are triggered by incidents rather than schedules. Rule updates are triggered by compliance findings rather than regular governance cycles. The RACI exists but is not enforced.

3. Disputes about ownership during incidents. "I thought Risk was responsible for this" is a sign that the RACI was not written, was not communicated, or has not been updated to reflect current team structures.

Related in this pillar

- Governance Operating Model: The full framework for translating AI policy into operational system behavior.

- The AI Governance Playbook: How to build the operational structure that the RACI governs.

- The AI Incident Golden Hour: How incident response functions when the RACI is running correctly.
