Autonomy Tier Assignment: A Practical Decision Guide for AI Teams | AI Governance

This post is part of the Autonomy and Escalation pillar.

Most teams assign autonomy tiers informally. A model goes live with full automation. Someone raises a concern. A review step is added. The review step becomes a bottleneck. The review step gets quietly removed.

This is tier drift. It is one of the most common governance failures in production AI, and it is almost always invisible until something goes wrong.

This post is a practical guide to assigning autonomy tiers correctly — using explicit criteria, documented evidence, and a defined governance process that applies equally to tier upgrades and tier downgrades.

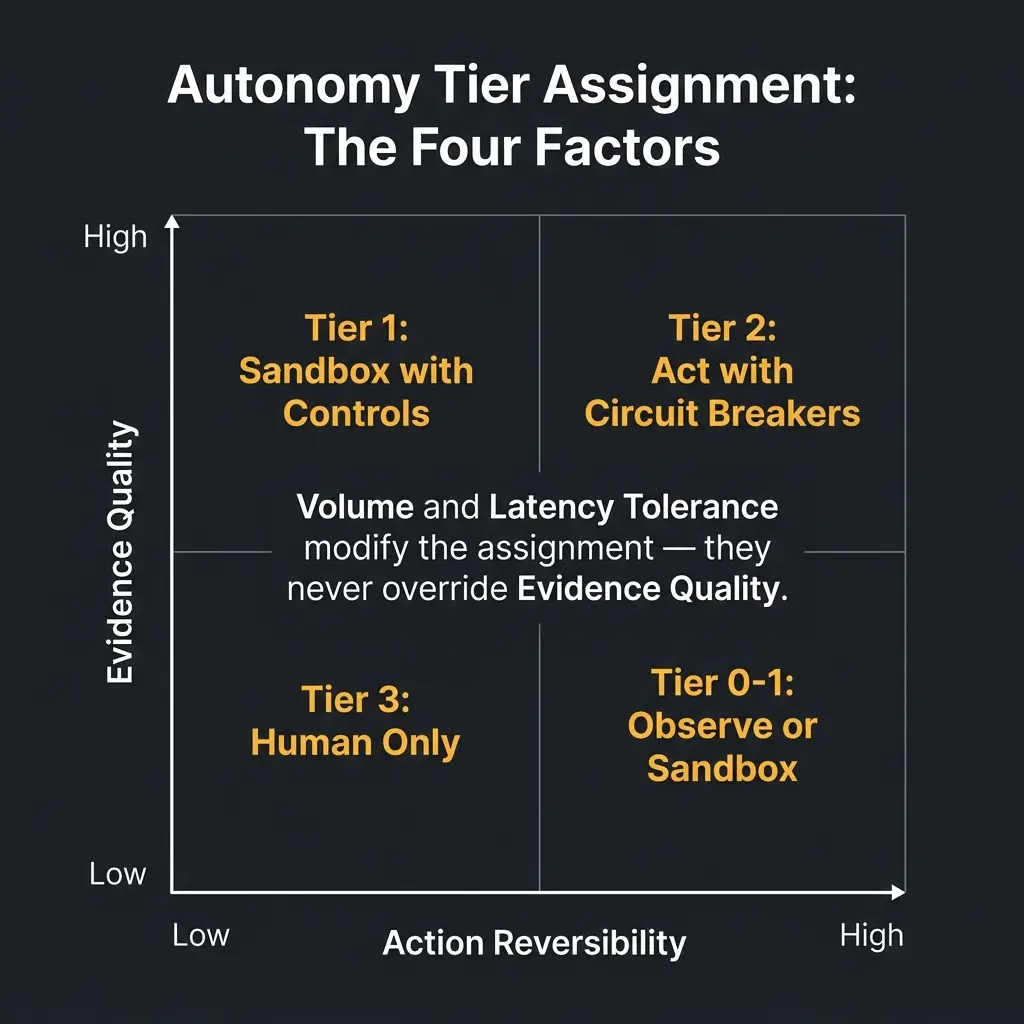

The four factors of tier assignment

Every autonomy tier decision rests on four factors.

1. Reversibility. If the system makes a wrong decision, what is the cost to undo it? A routing error that sends an email to the wrong queue is fully reversible. A credit denial that a customer acts on is not. A fraud block that prevents a legitimate purchase during a time-sensitive event cannot be fully undone.

Higher reversibility supports higher autonomy. Lower reversibility demands lower autonomy or stronger circuit breakers.

2. Volume and frequency. A high-volume, high-frequency workflow cannot rely on human review of individual decisions. Assigning Tier 0 (observe only) to a system that makes 500,000 decisions per day is governance in name only: no human team can keep up with that many recommendations, so most of them go unexamined.

High volume pushes toward higher tiers with automated controls. Lower volume supports human review at lower tiers.

3. Human review latency tolerance. If a decision must be made in 200 milliseconds, human-in-the-loop is not a viable design. If a decision can wait 48 hours, human review is practical.

Real-time decisions push toward higher tiers. Asynchronous workflows support lower tiers with higher human involvement.

4. Evidence quality. The most important factor — and the one most often ignored. Higher tiers require demonstrated performance in lower tiers, not confidence about expected performance. The only path to Tier 2 is through Tier 1. The only path to Tier 1 is through Tier 0.
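Some back-of-envelope arithmetic makes the volume factor concrete. The sketch below is illustrative only. The 30 seconds per review and 6 focused review hours per reviewer per day are hypothetical defaults, not figures from this post, and `reviewers_needed` is an illustrative helper name.

```python
# Rough reviewer-capacity check for the volume factor.
# Assumptions (hypothetical): each review takes a fixed number of seconds,
# and each reviewer spends a fixed number of focused hours per day reviewing.

def reviewers_needed(decisions_per_day: int,
                     seconds_per_review: float = 30.0,
                     review_hours_per_reviewer: float = 6.0) -> float:
    """Full-time reviewers needed to look at every decision once per day."""
    total_review_seconds = decisions_per_day * seconds_per_review
    seconds_per_reviewer = review_hours_per_reviewer * 3600
    return total_review_seconds / seconds_per_reviewer


if __name__ == "__main__":
    # 500,000 decisions/day at 30 s each, 6 review hours per person
    print(round(reviewers_needed(500_000)))  # prints 694
```

Under those assumptions, reviewing every one of 500,000 daily decisions would take roughly 694 full-time reviewers. That is why per-decision human review stops being a realistic control at this volume.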

The tier assignment decision guide

For any AI-influenced workflow, score each of the four factors:

| Factor | Low Score | High Score |
|---|---|---|
| Reversibility | Action is irreversible or costly to undo | Action is fully reversible within minutes |
| Volume | Low volume; human review is practical | High volume; human review per decision is infeasible |
| Latency tolerance | Decision must be made in milliseconds | Decision can wait hours or days |
| Evidence quality | No production performance data | Demonstrated stable performance in prior lower tier |

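Scoring guide (latency tolerance runs opposite to the other three factors: a Low score means decisions are too fast for per-decision human review, which pushes toward higher tiers):
- Reversibility, volume, and evidence quality score Low, and latency tolerance scores High → Tier 3 (Human Only)
- Reversibility and evidence quality score Low → Tier 0 or 1, regardless of volume
- Reversibility, volume, and evidence quality score High → Tier 2 eligible (subject to circuit breaker design and sign-off)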

The key constraint: no other factor can substitute for evidence quality. A high-volume workflow with fast decisions and full reversibility still cannot operate at Tier 2 without demonstrated performance data from Tier 1.
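The scoring rules can be encoded as a small, ordered rule set, which makes them reviewable and testable. This is a minimal sketch, not a complete policy engine. `Score`, `FactorScores`, and `recommend_tier` are hypothetical names, and the sketch makes two assumptions explicit: latency tolerance runs opposite to the other factors (so a High latency tolerance does not block Tier 2), and Tier 3 is only recommended when there is time for a human to decide.

```python
from dataclasses import dataclass
from enum import Enum


class Score(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class FactorScores:
    reversibility: Score
    volume: Score
    latency_tolerance: Score  # LOW = millisecond decisions, HIGH = can wait hours or days
    evidence_quality: Score


def recommend_tier(s: FactorScores) -> str:
    """Hypothetical encoding of the scoring guide; rules are checked in order."""
    low, high = Score.LOW, Score.HIGH
    # Nothing favors automation, and there is time for a person to decide.
    if (s.reversibility == low and s.volume == low
            and s.evidence_quality == low and s.latency_tolerance == high):
        return "Tier 3 (Human Only)"
    # Hard-to-undo actions without evidence stay low, whatever the volume.
    if s.reversibility == low and s.evidence_quality == low:
        return "Tier 0 or 1"
    # Evidence can never be substituted: no Tier 2 without prior-tier data.
    if s.evidence_quality == low:
        return "Not Tier 2: build evidence at a lower tier first"
    if s.reversibility == high and s.volume == high:
        return "Tier 2 eligible (subject to circuit breaker design and sign-off)"
    # Mixed profiles (e.g. low reversibility with good evidence) need a judgment call.
    return "No rule matched: decide through the governance review"
```

Rule order matters. The narrow Tier 3 rule is checked before the broader "Tier 0 or 1" rule, and any profile the guide does not cover falls through to an explicit governance decision instead of a silent default.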

Worked examples

Customer support message classification

The workflow: An NLP model classifies incoming customer messages into categories (billing, technical, complaint, general) and routes them to the appropriate queue.

Factor scoring:
- Reversibility: High; a misrouted message can be re-routed with minimal impact
- Volume: High; tens of thousands of messages daily make human review per message infeasible
- Latency tolerance: Medium; customers expect routing in seconds, not milliseconds
- Evidence quality: Medium; if this is a new deployment, only pilot data exists

Tier assignment: Tier 1. The system routes automatically. Spot-check sampling monitors routing accuracy, and misrouted messages are tracked as a quality metric. The system becomes eligible for a Tier 2 upgrade after 60 days of stable production performance that meets defined accuracy thresholds.
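Spot-check sampling and the 60-day upgrade bar can be sketched in a few lines. This is a minimal sketch under stated assumptions: the 2% sample rate and the 0.95 accuracy threshold are hypothetical placeholders (the post leaves the threshold to each team), "stable" is read here as every one of the last 60 days meeting the bar, and all function and class names are illustrative.

```python
import hashlib
from dataclasses import dataclass

SAMPLE_RATE = 0.02         # hypothetical: 2% of routed messages get a human spot-check
ACCURACY_THRESHOLD = 0.95  # hypothetical accuracy bar for the Tier 2 upgrade
REQUIRED_DAYS = 60         # from the post: 60 days of stable production performance


def selected_for_spot_check(message_id: str, rate: float = SAMPLE_RATE) -> bool:
    """Deterministic sampling: hash the message ID into a uniform bucket in [0, 1)."""
    digest = hashlib.sha256(message_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


@dataclass
class DailyAccuracy:
    day: int
    reviewed: int          # spot-checked messages that day
    correctly_routed: int  # of those, how many were routed correctly

    @property
    def accuracy(self) -> float:
        return self.correctly_routed / self.reviewed if self.reviewed else 0.0


def tier2_upgrade_eligible(history: list[DailyAccuracy]) -> bool:
    """Eligible only if each of the last REQUIRED_DAYS days met the accuracy bar."""
    if len(history) < REQUIRED_DAYS:
        return False
    recent = history[-REQUIRED_DAYS:]
    return all(d.reviewed > 0 and d.accuracy >= ACCURACY_THRESHOLD for d in recent)
```

Hash-based sampling is one reasonable design choice here: the same message is always in or out of the sample, so spot-check results can be audited and reproduced later.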

Loan application auto-approval

The workflow: A risk model auto-approves low-risk loan applications below a defined dollar threshold and declines applications below the minimum score.

Factor scoring:
- Reversibility: Low; an approved loan that is funded cannot be reversed, and a declined applicant may act on the decision
- Volume: Medium; hundreds per day make individual human review feasible but expensive
- Latency tolerance: High; applicants expect decisions in hours, not milliseconds
- Evidence quality: Depends on deployment stage

Tier assignment: Reversibility is the binding constraint. Even with good evidence quality, low reversibility caps auto-approval at Tier 2, and reaching Tier 2 requires:
- Tested circuit breakers (default rate, false positive rate (FPR) on declines)
- Regulatory compliance for adverse action documentation
- A full decision trace for every auto-approved and auto-declined application
- Explicit risk sign-off on the score thresholds

Starting point: Tier 0 (human reviews model recommendations) → Tier 1 (auto-approve only in a narrow, well-studied score band) → Tier 2 (expanded auto-approval with documented circuit breakers).
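The Tier 1 step ("auto-approve only in a narrow, well-studied score band") can be sketched as a routing function that emits a decision trace for every application. This is a minimal sketch, not a lending system. The score band, the dollar threshold, and the `Application` and `DecisionTrace` types are hypothetical, and the sketch assumes declines stay with human reviewers at Tier 1.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical Tier 1 parameters; real values need explicit risk sign-off.
AUTO_APPROVE_MIN_SCORE = 0.92     # lower edge of the narrow, well-studied score band
AUTO_APPROVE_MAX_AMOUNT = 10_000  # dollar threshold for auto-approval


@dataclass
class Application:
    application_id: str
    amount: float
    score: float  # hypothetical: higher means lower risk


@dataclass
class DecisionTrace:
    application_id: str
    outcome: str  # "auto_approve" or "human_review"
    reason: str
    model_score: float
    tier: int
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def route_tier1(app: Application) -> DecisionTrace:
    """Tier 1: auto-approve only inside the band; everything else goes to a human."""
    in_band = (app.score >= AUTO_APPROVE_MIN_SCORE
               and app.amount <= AUTO_APPROVE_MAX_AMOUNT)
    if in_band:
        return DecisionTrace(app.application_id, "auto_approve",
                             "score and amount inside Tier 1 band", app.score, tier=1)
    # In this sketch, declines are never automated at Tier 1.
    return DecisionTrace(app.application_id, "human_review",
                         "outside Tier 1 band", app.score, tier=1)
```

Returning a trace object from every call, rather than a bare approve/refer flag, keeps the "full decision trace" requirement built into the routing path instead of bolted on later.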

Real-time fraud blocking

The workflow: A fraud model blocks transactions above a high-confidence threshold in real time.

Factor scoring:
- Reversibility: Mixed; blocking a fraudulent transaction is the intended outcome, but blocking a legitimate transaction is a customer impact event (partially reversible via dispute, but with cost)
- Volume: Very High; millions of transactions per day make human review per transaction infeasible
- Latency tolerance: Very Low; transactions must be processed in milliseconds
- Evidence quality: Required before any blocking tier

Tier assignment: The volume and latency constraints rule out Tier 0 and Tier 3 for the primary path. Because legitimate transactions can be blocked and blocks are only partially reversible, circuit breakers are required. The correct assignment is Tier 2 for high-confidence blocks and Tier 1 for medium-confidence challenges, with the circuit breaker treated as a first-class governance requirement, not an afterthought.
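A circuit breaker for this workflow can be sketched as a rolling false-block rate that, once exceeded, downgrades high-confidence blocks to challenges. This is a minimal sketch under stated assumptions. The 0.95 and 0.80 thresholds, the 2% maximum false-block rate, and the window sizes are hypothetical, and it assumes you learn whether a block was legitimate from later signals such as disputes.

```python
from collections import deque

# Hypothetical score thresholds; real values come from the evidence package.
BLOCK_THRESHOLD = 0.95      # high confidence: block automatically (Tier 2 path)
CHALLENGE_THRESHOLD = 0.80  # medium confidence: challenge the customer (Tier 1 path)


class FalseBlockBreaker:
    """Trips when too many recent blocks turn out to be legitimate transactions."""

    def __init__(self, window: int = 1000, max_false_block_rate: float = 0.02,
                 min_samples: int = 100):
        self.outcomes = deque(maxlen=window)  # True = block later shown legitimate
        self.max_rate = max_false_block_rate
        self.min_samples = min_samples
        self.tripped = False

    def record_block_outcome(self, was_legitimate: bool) -> None:
        self.outcomes.append(was_legitimate)
        if len(self.outcomes) >= self.min_samples:
            rate = sum(self.outcomes) / len(self.outcomes)
            if rate > self.max_rate:
                self.tripped = True  # latches until reset() is called

    def reset(self) -> None:
        """Called only after a human review restores the Tier 2 path."""
        self.outcomes.clear()
        self.tripped = False


def decide(fraud_score: float, breaker: FalseBlockBreaker) -> str:
    if fraud_score >= BLOCK_THRESHOLD:
        # Auto-downgrade: while the breaker is tripped, blocks become challenges.
        return "challenge" if breaker.tripped else "block"
    if fraud_score >= CHALLENGE_THRESHOLD:
        return "challenge"
    return "allow"
```

The breaker latches rather than resetting itself when the rate improves, so restoring Tier 2 is a deliberate governance action instead of a side effect of traffic.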

The tier upgrade process

Tier upgrades must follow a defined process. They are not configuration changes.

Required steps for any tier upgrade:

- Evidence package: performance data from the current lower tier, covering at least 60 days of production operation, including the specific metrics that define the performance bar for the target tier.

- Circuit breaker design: documented auto-downgrade conditions for the target tier, tested in a staging environment, with the test results included in the evidence package.

- Risk sign-off: written approval from the designated risk owner (defined in the governance RACI), recording the rationale for the upgrade and the conditions under which it would be reversed.

- Documentation: a record in the system's governance log stating what tier was assigned, when, on what evidence, and who approved it.

Without these four steps, a tier "upgrade" is an undocumented assumption — not a governance decision.
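The four required steps, plus the rule that tiers are climbed one at a time, can be expressed as an upgrade gate that refuses to act until every item is present and then writes the governance log entry. This is a minimal sketch: `UpgradeRequest`, its boolean fields (which stand in for real artifacts such as test reports), and the log entry format are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

MIN_EVIDENCE_DAYS = 60
# Ladder from the post: the only path to Tier 2 is through Tier 1,
# and the only path to Tier 1 is through Tier 0.
NEXT_TIER = {0: 1, 1: 2}


@dataclass
class UpgradeRequest:
    system: str
    current_tier: int
    target_tier: int
    evidence_start: date
    evidence_end: date
    target_metrics_met: bool
    breaker_tested_in_staging: bool
    breaker_test_results_attached: bool
    approver: Optional[str]
    designated_risk_owner: str  # from the governance RACI
    rationale: str
    reversal_conditions: str


def missing_requirements(req: UpgradeRequest) -> list[str]:
    problems = []
    if NEXT_TIER.get(req.current_tier) != req.target_tier:
        problems.append("target tier is not the next step on the ladder")
    if (req.evidence_end - req.evidence_start).days < MIN_EVIDENCE_DAYS:
        problems.append(f"evidence covers fewer than {MIN_EVIDENCE_DAYS} days")
    if not req.target_metrics_met:
        problems.append("target-tier performance metrics not met")
    if not (req.breaker_tested_in_staging and req.breaker_test_results_attached):
        problems.append("circuit breaker not tested in staging with results attached")
    if req.approver != req.designated_risk_owner:
        problems.append("no sign-off from the designated risk owner")
    if not req.rationale or not req.reversal_conditions:
        problems.append("sign-off lacks rationale or reversal conditions")
    return problems


def apply_upgrade(req: UpgradeRequest, governance_log: list[dict]) -> bool:
    """Record the upgrade only if every requirement is satisfied."""
    if missing_requirements(req):
        return False
    governance_log.append({
        "system": req.system,
        "from_tier": req.current_tier,
        "to_tier": req.target_tier,
        "assigned_on": date.today().isoformat(),
        "evidence_window": f"{req.evidence_start.isoformat()}..{req.evidence_end.isoformat()}",
        "approved_by": req.approver,
        "rationale": req.rationale,
        "reversal_conditions": req.reversal_conditions,
    })
    return True
```

Returning the full list of missing items, rather than failing on the first one, gives the requester a complete picture of what the evidence package still lacks.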

Keeping tiers current

Tier assignments must be reviewed on a defined cadence — not just at deployment.

Review triggers:
- Quarterly scheduled review for all Tier 2 deployments
- After any incident that trips a circuit breaker
- After any significant model retraining
- After any regulatory change affecting the workflow's domain
- After any material change in volume or decision type composition

The question at each review is not "is the system performing well?" It is: "does the current evidence still justify this tier assignment, and is the circuit breaker threshold still correctly calibrated?"
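Review triggers are easy to forget when they live only in a policy document, so it can help to evaluate them automatically against deployment state. This is a minimal sketch: the 90-day approximation of "quarterly", the 25% threshold for a "material" volume change, and the `DeploymentState` fields are hypothetical choices, not definitions from this post.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

QUARTER = timedelta(days=90)   # approximation of "quarterly"
MATERIAL_VOLUME_CHANGE = 0.25  # hypothetical: a 25% shift counts as material


@dataclass
class DeploymentState:
    tier: int
    last_review: date
    breaker_tripped_since_review: bool
    retrained_significantly: bool
    regulatory_change: bool
    baseline_daily_volume: float
    current_daily_volume: float
    decision_mix_changed: bool


def review_triggers(s: DeploymentState, today: date) -> List[str]:
    """Return every review trigger that has fired since the last review."""
    fired = []
    if s.tier == 2 and today - s.last_review >= QUARTER:
        fired.append("scheduled quarterly review (Tier 2)")
    if s.breaker_tripped_since_review:
        fired.append("circuit breaker incident")
    if s.retrained_significantly:
        fired.append("significant model retraining")
    if s.regulatory_change:
        fired.append("regulatory change in domain")
    if s.baseline_daily_volume > 0:
        change = abs(s.current_daily_volume - s.baseline_daily_volume) / s.baseline_daily_volume
        if change >= MATERIAL_VOLUME_CHANGE:
            fired.append("material change in volume")
    if s.decision_mix_changed:
        fired.append("material change in decision type composition")
    return fired
```

Each fired trigger should open the same question: does the current evidence still justify the tier, and is the circuit breaker threshold still correctly calibrated?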

Related in this pillar

- Autonomy and Escalation: The full pillar on designing autonomy and escalation architecture.

- Control Tiers for AI-enabled Processes: The detailed guide to the four control tiers and their governance requirements.

- AI Escalation Protocols: What happens at runtime when a tier change is triggered.
