How to Write AI Component Contracts: A Practical Guide

This post is part of the AI Accountability Architecture pillar.

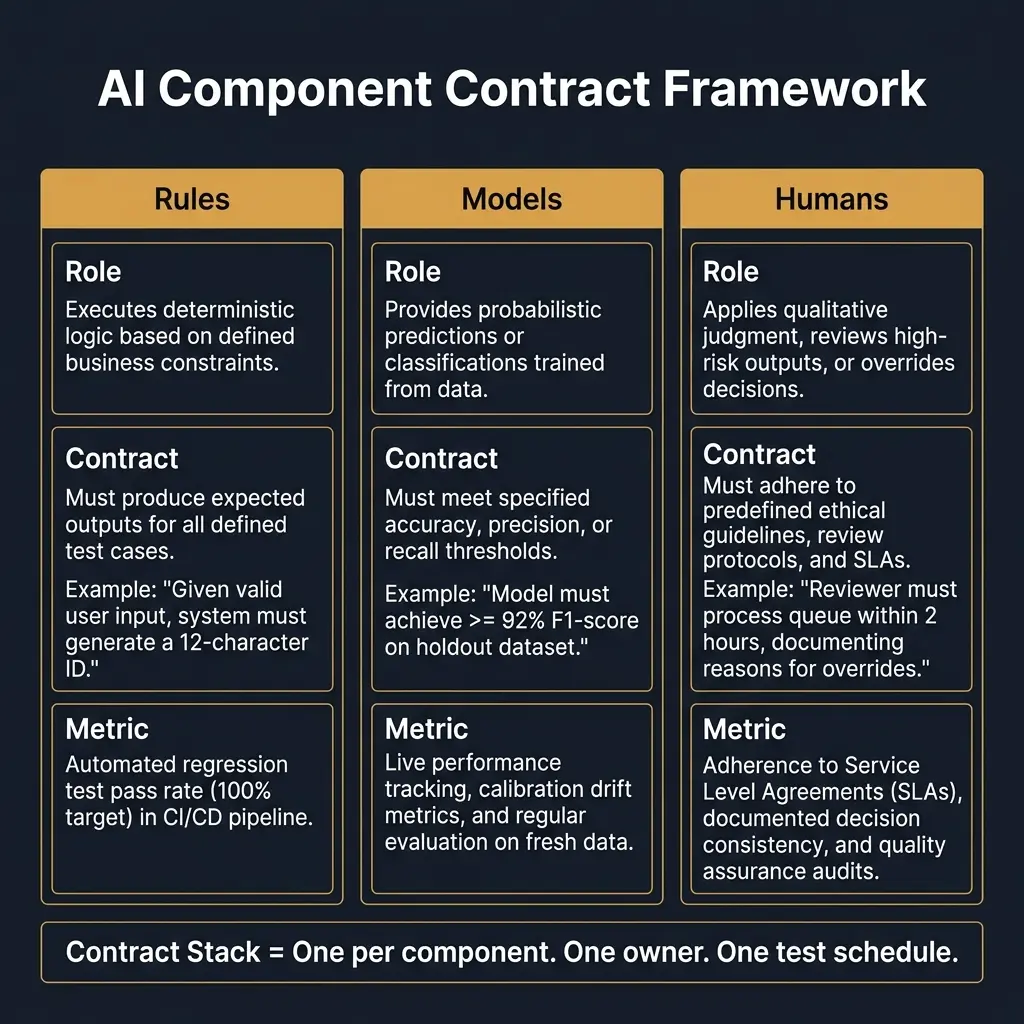

Writing an AI component contract sounds like an abstract governance exercise. It is not. It is the step that separates AI systems that can be fixed from AI systems that can only be rolled back.

When something goes wrong — and it will — the first question is always: which part of the system failed? Rules? The model? A human? The routing logic between them?

If no contracts exist, that question is answered by debate. If contracts exist, it is answered by evidence.

This post is a practical guide to writing contracts for each component of a composite AI system. You can start with one component today.

What makes a good component contract

A contract is not a specification. A specification describes how something is built. A contract describes what correct behavior looks like — and how you will know, in production, whether that behavior is occurring.

Three properties make a contract useful:

Testable. The contract must be evaluable with data, not with judgment. "The model should perform well" is not a contract. "The model must rank higher-risk cases above lower-risk cases at least 90% of the time on the monthly holdout set" is a contract.

Specific. The contract must name the component it applies to and the condition it governs. A contract that applies to "the system" is not a contract — it is an aspiration.

Owned. Every contract must have a function that is responsible for monitoring it and acting when it is violated. A contract with no owner is not enforced.
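One way to keep all three properties in view is to write each contract down as a small record. The sketch below is illustrative only: the `ComponentContract` class and its field names are assumptions made for this example, not an established schema. It encodes the ranking example from above.

```python
from dataclasses import dataclass
from typing import Callable
import operator


@dataclass(frozen=True)
class ComponentContract:
    """Hypothetical record for one contract; field names are illustrative."""
    component: str   # Specific: the one component this contract governs
    condition: str   # Specific: the condition or data it is evaluated on
    metric: str      # Testable: a number computed from data
    threshold: float  # Testable: the value the metric is compared against
    passes: Callable[[float, float], bool]  # comparison of (observed, threshold)
    owner: str       # Owned: the function that monitors and acts

    def evaluate(self, observed: float) -> bool:
        """Return True when the observed metric satisfies the contract."""
        return self.passes(observed, self.threshold)


ranking_contract = ComponentContract(
    component="risk model",
    condition="monthly holdout set",
    metric="share of pairs where the higher-risk case is ranked higher",
    threshold=0.90,
    passes=operator.ge,  # observed must be at least the threshold
    owner="Model Risk",
)

in_contract = ranking_contract.evaluate(0.93)
violated = not ranking_contract.evaluate(0.85)
```

If a field cannot be filled in, that usually points to the missing property: no metric means the contract is not testable, and no owner means it is not enforced.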

Writing contracts for rules

Rules are the easiest component to contract, because they are deterministic. Either the rule fired or it did not. Either the input satisfied the condition or it did not.

The structure of a rules contract:

"Given input condition [X], rule [R] must always [action]. This rule must fire for [Y]% of traffic that meets condition [X]. Violations must be detectable within [Z] minutes."

Worked examples:

Credit eligibility rule:

"Any applicant with fewer than 12 months in business must be declined before the model is called. This rule must fire for 100% of applications meeting this criterion. Violations must be detected in the next batch monitoring cycle."

Fraud blocking rule:

"Any transaction originating from an IP address on the active sanctions list must be blocked before scoring. Zero exceptions. Violations must trigger an immediate alert to the fraud operations team."

Tier routing rule:

"Any case with a model confidence score below 0.6 must be routed to human review, not auto-approved. This routing must apply consistently across all channels (web, API, partner). Violations must be logged as routing anomalies."

What to test:

- Coverage: does this rule see all the traffic it should see?
- Consistency: does it behave the same in all channels?
- Bypass detection: is there a path through the system that avoids this rule?
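The three checks above can be run against a decision log. The following is a minimal sketch for the credit eligibility example, assuming a hypothetical log in which each row records a `channel`, the applicant's `months_in_business`, and the list of `rules_fired`. The field names and the rule name `min_tenure` are invented for illustration.

```python
from collections import defaultdict


def rule_coverage_by_channel(records, meets_condition, rule_name):
    """Share of in-scope records, per channel, where the rule actually fired."""
    in_scope = defaultdict(int)
    fired = defaultdict(int)
    for rec in records:
        if meets_condition(rec):
            in_scope[rec["channel"]] += 1
            if rule_name in rec["rules_fired"]:
                fired[rec["channel"]] += 1
    return {channel: fired[channel] / n for channel, n in in_scope.items()}


def find_bypasses(records, meets_condition, rule_name):
    """IDs of in-scope records that got past the rule without it firing."""
    return [r["id"] for r in records
            if meets_condition(r) and rule_name not in r["rules_fired"]]


def too_new(rec):
    """Contract condition: fewer than 12 months in business."""
    return rec["months_in_business"] < 12


# Hypothetical decision-log rows.
log = [
    {"id": "a1", "channel": "web", "months_in_business": 6, "rules_fired": ["min_tenure"]},
    {"id": "a2", "channel": "api", "months_in_business": 8, "rules_fired": []},
    {"id": "a3", "channel": "web", "months_in_business": 30, "rules_fired": []},
    {"id": "a4", "channel": "partner", "months_in_business": 3, "rules_fired": ["min_tenure"]},
]

coverage = rule_coverage_by_channel(log, too_new, "min_tenure")
bypasses = find_bypasses(log, too_new, "min_tenure")
```

In this toy log the API channel shows 0% coverage and one bypass (`a2`), which is the kind of evidence a 100% coverage contract is meant to surface. A channel with no in-scope traffic does not appear in the result at all, so an absent channel should be read as "no data", not as "passing".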

Writing contracts for models

Model contracts are harder than rule contracts, because models are probabilistic. The contract must specify performance thresholds, not exact behaviors.

The structure of a model contract:

"Given valid, normalized inputs in [scope], model [M] must produce outputs that [performance criterion] when evaluated on [test set] at frequency [F]. The model must not be used for decisions outside its defined scope. The model must be reviewed when [drift condition] is detected."

Worked examples:

Risk scoring model:

"Given valid lending applications from the defined eligibility population, the risk model must rank higher-risk cases above lower-risk cases with an AUC of at least 0.72 on the monthly holdout set. The model must not be used for applications from segments excluded from the training population. Review is triggered when AUC drops below 0.70 or when PSI exceeds 0.2 on any key input segment."

Fraud detection model:

"The fraud model must maintain a false positive rate below 1.5% on non-fraudulent transactions in any 7-day rolling window. If the false positive rate exceeds 2%, auto-block behavior must be automatically downgraded to challenge-only mode until the model is reviewed."

Document classification model:

"Given documents in the defined format and language categories, the classifier must achieve at least 94% precision on the high-confidence output tier. Documents falling below the confidence threshold must be routed to human review and must not receive an automated classification output."

What to test:

- Discriminative power on fresh data (not the training set)
- Calibration: do scores reflect actual probabilities?
- Segment-level drift: does performance degrade faster in specific subgroups?
- Scope violations: is the model being called on inputs outside its defined population?
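The numeric thresholds in the risk scoring and fraud detection examples can be checked with a few small functions. This is a sketch under stated assumptions, not a production monitor: AUC is computed by brute-force pairwise comparison, PSI takes bin shares that have already been computed, and the event fields `ts`, `is_fraud`, and `flagged` are hypothetical names. It also assumes fraud labels are available for the window, which in practice often arrive with a delay.

```python
import math
from datetime import datetime, timedelta


def auc(scores, labels):
    """Chance that a random positive case outscores a random negative one (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def psi(expected_share, actual_share, eps=1e-6):
    """Population stability index over pre-binned shares that each sum to 1."""
    total = 0.0
    for e, a in zip(expected_share, actual_share):
        e, a = max(e, eps), max(a, eps)  # guard against empty bins
        total += (a - e) * math.log(a / e)
    return total


def risk_model_review_needed(auc_value, psi_by_segment, auc_floor=0.70, psi_ceiling=0.2):
    """Review triggers from the risk scoring example."""
    return auc_value < auc_floor or any(v > psi_ceiling for v in psi_by_segment.values())


def rolling_fpr(events, window_end, window=timedelta(days=7)):
    """False positive rate over non-fraudulent transactions in the rolling window."""
    start = window_end - window
    legit = [e for e in events if start < e["ts"] <= window_end and not e["is_fraud"]]
    if not legit:
        return 0.0
    return sum(1 for e in legit if e["flagged"]) / len(legit)


def fraud_enforcement_mode(fpr, downgrade_above=0.02):
    """Downgrade trigger from the fraud detection example."""
    return "challenge_only" if fpr > downgrade_above else "auto_block"


holdout_auc = auc([0.9, 0.8, 0.4, 0.3], [1, 0, 1, 0])
segment_psi = {"new_customers": psi([0.5, 0.5], [0.8, 0.2])}
needs_review = risk_model_review_needed(holdout_auc, segment_psi)
```

Calibration and scope violations are not covered here. They need their own metrics, such as predicted versus observed rates per score band, and a count of calls made on inputs outside the defined population.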

Writing contracts for humans

Humans are the component most often left without a contract. The common assumption is that humans are flexible and can adapt. That is true for individual decisions. It is not true for a governance architecture that needs to measure and attribute behavior.

The structure of a human contract:

"When presented with [trigger], human reviewer [role] must [action] within [time limit]. Overrides must be documented using [reason code schema]. Override patterns must be reviewed at [frequency]."

Worked examples:

Underwriting review contract:

"When a loan application is routed for manual review, the reviewing underwriter must select a decision and a reason code from the approved list within 4 business hours. All overrides of model recommendations must be documented with a reason code. Override rates by underwriter must be reported monthly to the risk function."

Fraud escalation contract:

"When a fraud alert at Tier 2 severity is escalated, the fraud analyst must acknowledge within 30 minutes and resolve within 2 hours. The analyst must document findings and select a case disposition code. Cases resolved without a disposition code must be flagged as a process violation."

AI review board contract:

"The model risk committee must review any model that triggers a drift alert within 5 business days. Reviews must produce one of three outputs: approved for continued operation, conditional approval with a remediation timeline, or suspension pending remediation."

What to test:

- Compliance rate: what percentage of overrides have valid reason codes?
- Latency: are SLA requirements being met?
- Override direction: are human reviewers systematically more or less conservative than the model, and why?
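These three measures can be computed from a review log. The sketch below assumes a hypothetical log for the underwriting example in which each row holds the `model_recommendation`, the reviewer's `decision` (either `"approve"` or `"decline"`), a `reason_code` (empty when missing), and `hours_to_decision` already expressed in business hours. All field names are assumptions made for illustration.

```python
def review_metrics(reviews, sla_hours=4.0):
    """Compliance, latency, and override-direction figures for one reporting period."""
    overrides = [r for r in reviews if r["decision"] != r["model_recommendation"]]
    coded = [r for r in overrides if r["reason_code"]]
    within_sla = [r for r in reviews if r["hours_to_decision"] <= sla_hours]
    # With only two outcomes, an override that declines is stricter than the model.
    stricter = sum(1 for r in overrides if r["decision"] == "decline")
    return {
        "reason_code_compliance": len(coded) / len(overrides) if overrides else 1.0,
        "sla_compliance": len(within_sla) / len(reviews) if reviews else 1.0,
        "override_rate": len(overrides) / len(reviews) if reviews else 0.0,
        "overrides_stricter_than_model": stricter,
        "overrides_more_lenient_than_model": len(overrides) - stricter,
    }


# Hypothetical review-log rows.
reviews = [
    {"model_recommendation": "approve", "decision": "approve", "reason_code": "", "hours_to_decision": 2.0},
    {"model_recommendation": "approve", "decision": "decline", "reason_code": "DOC_MISSING", "hours_to_decision": 3.0},
    {"model_recommendation": "decline", "decision": "approve", "reason_code": "", "hours_to_decision": 5.0},
    {"model_recommendation": "decline", "decision": "decline", "reason_code": "", "hours_to_decision": 1.0},
]

metrics = review_metrics(reviews)
```

Grouping the same calculation by underwriter gives the per-reviewer override report the contract calls for. The numbers show direction and compliance; the "why" still has to come from the reason codes themselves.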

Assembling the contract stack

A contract stack is the full set of contracts for a single decision flow — one per component, linked to shared metrics and a shared test schedule.

| Component | Contract Summary | Owner | Monitoring Frequency |
|---|---|---|---|
| Eligibility rules | Catch all ineligible applicants before model | Risk/Compliance | Daily batch |
| Risk model | AUC ≥ 0.72, PSI < 0.2, review on breach | Model Risk | Monthly holdout |
| Underwriter review | Disposition within 4 business hours, reason code required | Operations | Weekly report |
| Routing logic | Low-confidence cases to human, not auto-approved | Engineering | Real-time monitor |

When a contract is violated, the stack tells you where to look first. When a metric drifts, the stack tells you which contract is at risk.
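The "where to look first" lookup can be sketched as data. The example below mirrors the table above; the `StackEntry` class and the metric names attached to each component are hypothetical, chosen only to show how a drifting metric maps back to a contract and its owner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackEntry:
    """One row of the contract stack; metric names are illustrative."""
    component: str
    owner: str
    frequency: str
    metrics: tuple


STACK = [
    StackEntry("Eligibility rules", "Risk/Compliance", "Daily batch", ("rule_coverage",)),
    StackEntry("Risk model", "Model Risk", "Monthly holdout", ("auc", "psi")),
    StackEntry("Underwriter review", "Operations", "Weekly report",
               ("disposition_latency", "reason_code_compliance")),
    StackEntry("Routing logic", "Engineering", "Real-time monitor",
               ("low_confidence_auto_approvals",)),
]


def contracts_at_risk(metric):
    """Return the stack entries whose contracts depend on the given metric."""
    return [entry for entry in STACK if metric in entry.metrics]


at_risk = contracts_at_risk("psi")
```

A metric that maps to no entry is worth noting too: it is being monitored without a contract behind it.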

Starting with one contract

You do not need to write all contracts at once. The fastest way to start is to pick the single most consequential component in your highest-risk workflow and write one contract for it this week.

Ask: if this component behaved incorrectly, would we know? If yes, how long would it take us to know? If it would take weeks, you do not have a functional contract — you have an assumption.

Write the contract. Attach a metric. Name an owner. Then do the next one.

Related in this pillar

- AI Accountability Architecture: The full framework for designing accountable AI systems.

- Composite Accountability: How to assign contracts across rules, models, and humans in a composite system.

- AI Audit Trails: How to instrument your system so contracts can be verified with evidence.
