The AI Product Risk Stack: Model, System, Workflow

For AI product managers who need to explain risk clearly to executives, engineers, and auditors.

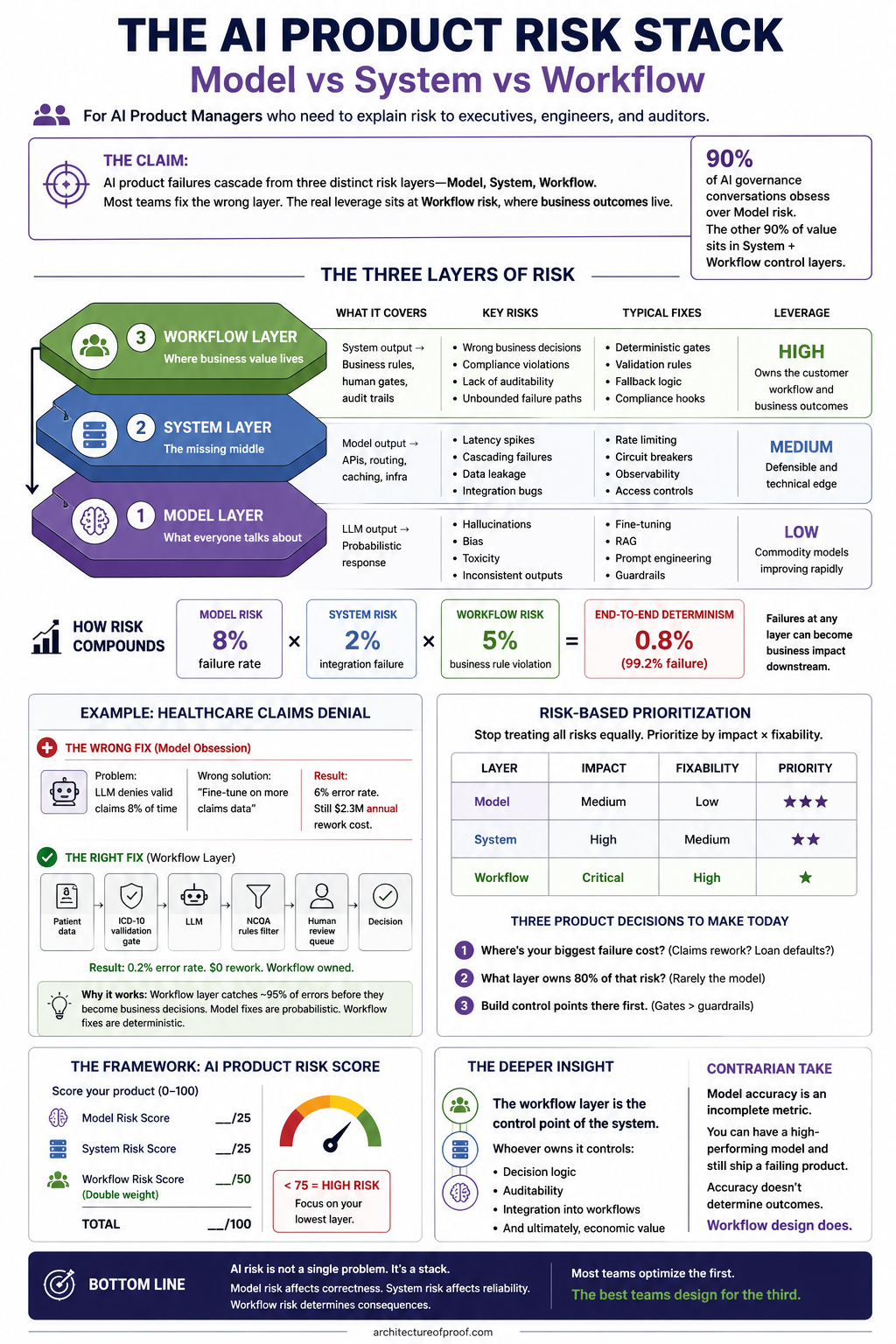

One of the easiest mistakes to make in AI product work is to assume that risk starts and ends with the model. It doesn’t. A model can be inaccurate, inconsistent, or biased, but that is only one part of the story. In practice, most AI failures show up across three layers: the model itself, the system that delivers it, and the workflow that turns its output into a business action.

That distinction matters because most AI conversations still focus almost entirely on the model layer. Teams ask how to reduce hallucinations, how to improve prompting, how to fine-tune, and how to make outputs more reliable. Those are important questions, but they are only part of the problem. Users do not experience “model error” in the abstract. They experience consequences: a claim gets denied, a payment gets blocked, a customer gets misclassified, or a decision is made incorrectly.

graph TD
    L3[<b>Layer 3: Workflow Risk</b><br/>Business Logic & Human Gates]
    L2[<b>Layer 2: System Risk</b><br/>APIs, Routing, & Infra]
    L1[<b>Layer 1: Model Risk</b><br/>LLM Output & Probability]
    L1 --> L2
    L2 --> L3
    L3 --> Outcome([Business Outcome])
    style L3 fill:#e8f5e9,stroke:#2e7d32,stroke-width:2px
    style L2 fill:#e3f2fd,stroke:#1565c0
    style L1 fill:#f3e5f5,stroke:#7b1fa2

Why this framing matters

This framing is useful because it shows teams where risk actually becomes real. A model can say something wrong, but if the system catches the error before it reaches the user, the mistake may never matter. If the system is weak, the model’s mistake moves downstream. And if the workflow is poorly designed, a small error can become a business decision with real consequences.

This is why AI teams need to stop treating every problem as a model problem. Sometimes the model really is the issue. Sometimes the system around it is the issue. But often, the biggest problem is that the workflow lets uncertainty turn into action too easily. That is where product thinking becomes crucial.

Model risk

Model risk is the layer everyone talks about first because it is the most visible. This is where hallucinations, bias, toxicity, and inconsistent outputs show up. It is the part of the system where the model says something wrong, says it confidently, or behaves unpredictably across similar inputs.

The usual responses are also familiar: fine-tuning, better prompts, retrieval, and better training data. Those fixes matter, and they often improve performance. But they are also becoming more commoditized. Many teams can now make the model better in similar ways, which means model improvement alone rarely creates lasting product advantage.

That is why model risk should be treated as necessary but not sufficient. It is real, but it is only the first layer.

System risk

System risk is the layer between the model and the business workflow. It is the part that determines whether the AI product behaves reliably in practice. Even if the model is good, the product can still fail if the surrounding system cannot deliver the output safely and consistently.

This layer includes issues like latency spikes, cascading failures, integration bugs, access problems, and data leakage. The fixes here are usually infrastructure-oriented: rate limiting, circuit breakers, observability, access controls, and guardrails around tool use and execution. These are important because they make the system more stable and more trustworthy.
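As one illustration of a system-layer control, here is a minimal circuit breaker sketch in Python. The class name, thresholds, and the `fallback` parameter are assumptions made for this example, not part of any particular library. The idea is that after repeated failures the product stops calling the model service for a cool-down period and returns a safe fallback instead of letting failures cascade.

```python
import time


class CircuitBreaker:
    """Stops calling a failing dependency until a cool-down has passed."""

    def __init__(self, max_failures=3, reset_after_s=30.0, clock=time.monotonic):
        self.max_failures = max_failures    # hypothetical threshold
        self.reset_after_s = reset_after_s  # hypothetical cool-down in seconds
        self.clock = clock                  # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed (calls allowed)

    def call(self, fn, *args, fallback=None):
        # While the circuit is open, skip the dependency until the cool-down expires.
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback
            self.opened_at = None  # cool-down over: allow one trial call

        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open the circuit
            return fallback

        self.failures = 0  # a success resets the failure count
        return result
```

A caller would wrap each model request, for example `breaker.call(call_model, prompt, fallback=None)`, where `call_model` is whatever client function the product already uses, and treat a `None` result as "route this case to the fallback path".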

System risk matters a lot, but it still isn’t the final layer of value. It protects the path. It does not determine the business outcome on its own.

Workflow risk

Workflow risk is where the business consequence actually happens. This is the layer that turns a model output into a real-world action: an approval, a denial, a recommendation, a payment, a case escalation, or a customer-facing decision.

This is also where the stakes become highest. A workflow can create compliance violations, audit failures, unbounded exception paths, or decisions that are difficult to explain later. At this point, a model mistake is no longer just a technical issue. It becomes a business outcome.

The fixes here are different from the model layer. You need deterministic gates, validation rules, fallback logic, and human-in-the-loop paths. In other words, you need structure around the decision, not just better predictions. This is where AI products become trustworthy in practice.
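A deterministic gate of this kind can be quite small. The sketch below is illustrative only: the label set, the 0.90 threshold, and the rule that every denial goes to a person are assumptions chosen for the example, not recommendations from a specific policy.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    FALLBACK = "fallback"


@dataclass
class ModelOutput:
    label: str         # what the model recommends, e.g. "approve"
    confidence: float  # model-reported score, expected in [0, 1]


ALLOWED_LABELS = {"approve", "deny"}  # hypothetical label set
REVIEW_THRESHOLD = 0.90               # hypothetical cut-off


def gate(output: ModelOutput) -> Route:
    # Validation rule: malformed output never reaches a decision.
    if output.label not in ALLOWED_LABELS or not 0.0 <= output.confidence <= 1.0:
        return Route.FALLBACK
    # Deterministic gate: adverse outcomes always get a human look.
    if output.label == "deny":
        return Route.HUMAN_REVIEW
    # Human-in-the-loop path for uncertain approvals.
    if output.confidence < REVIEW_THRESHOLD:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE
```

The point of the design is that the routing rules are plain code: the same output always takes the same path, which makes the decision easy to test and to explain later.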

How the stack compounds

The layers do not exist independently. They compound.

A model error that slips through the system layer does not remain a model error for long. It becomes part of a workflow. Once it reaches the workflow layer, it can turn into a decision that creates cost, rework, liability, or user harm.

A better way to think about this is as a series of filters. Each layer has a chance to catch, contain, or redirect an error. If the model layer fails, the system layer may still catch it. If the system layer fails, the workflow layer still has to prevent the mistake from turning into a harmful action. The earlier an issue is caught, the cheaper it is to fix.

graph TD
    U([User Intent]) --> M{1. Model Risk}
    M -- Probabilistic Error --> S
    M -- Success --> S{2. System Risk}
    S -- Reliability Failure --> W
    S -- Success --> W{3. Workflow Risk}
    W -- Consequence --> Impact([Business Failure])
    W -- Success --> Outcome([Safe Outcome])
    style M fill:#f3e5f5,stroke:#7b1fa2
    style S fill:#e3f2fd,stroke:#1565c0
    style W fill:#e8f5e9,stroke:#2e7d32,stroke-width:2px
    style Impact fill:#ffebee,stroke:#c62828
    style Outcome fill:#e8f5e9,stroke:#2e7d32

That means model improvements reduce the raw error rate, system design controls how errors move through the product, and workflow design determines how much those errors matter in the end.
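One rough way to write this down, assuming each layer catches errors independently of the others (a simplification, and the symbols are introduced here only for illustration):

```latex
r_{\text{harm}} = e_{m}\,(1 - c_{s})\,(1 - c_{w})
```

Here $e_{m}$ is the model's raw error rate, $c_{s}$ is the fraction of those errors the system layer catches, and $c_{w}$ is the fraction of the remainder the workflow layer catches. Model work lowers only $e_{m}$, while system and workflow design raise $c_{s}$ and $c_{w}$.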

Example: healthcare claims denial

Healthcare claims denial is a good example because it is both high volume and high stakes. A lot can go wrong, and the consequences are real.

If you take the model-first approach, you might focus on reducing the error rate from 8 percent to 6 percent. That sounds like progress, and technically it is. But the remaining errors can still create real problems. They can trigger rework, delay care, create liability, and frustrate both patients and providers.

In other words, a better model does not necessarily create a better product.

Now compare that to the workflow-first approach. In that version, invalid inputs are blocked before they reach the model. Outputs are checked against policy. Edge cases are routed to fallback paths. Uncertain cases are sent to human review. The model does not need to be perfect because the workflow is designed to contain its mistakes.

That is the more mature way to think about AI product design. You do not need perfection from the model. You need reliability from the system as a whole.
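To make the comparison concrete, here is a back-of-the-envelope sketch. The 8 percent and 6 percent error rates come from the example above. The claim volume and the 90 percent containment rate are hypothetical numbers picked only to show the arithmetic, not measurements from a real system.

```python
def harmful_decisions(claims: int, model_error_rate: float, containment_rate: float) -> float:
    """Expected number of wrong decisions that actually take effect.

    containment_rate is the share of model errors stopped downstream
    (validation, policy checks, human review) before they become actions.
    """
    return claims * model_error_rate * (1.0 - containment_rate)


CLAIMS = 100_000  # hypothetical monthly volume

# Model-first: a better model (6% wrong), nothing downstream to contain errors.
model_first = harmful_decisions(CLAIMS, 0.06, containment_rate=0.0)

# Workflow-first: the original model (8% wrong), with gates that stop an
# assumed 90% of its errors before they become decisions.
workflow_first = harmful_decisions(CLAIMS, 0.08, containment_rate=0.90)

print(round(model_first), round(workflow_first))  # prints: 6000 800
```

Under these assumed numbers, the weaker model inside a containing workflow produces far fewer harmful decisions than the stronger model with no containment. The exact ratio depends entirely on the containment rate a real workflow achieves.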

| Layer | Impact | Fixability | Priority |
|---|---|---|---|
| Model | Medium | Low | ★ |
| System | High | Medium | ★★ |
| Workflow | Critical | High | ★★★ |

What this means for product managers

For product managers, this changes how risk should be discussed and prioritized.

First, not all risks are equal. Model risk, system risk, and workflow risk differ in impact, controllability, and business relevance. A model hallucination may be annoying. A workflow failure may be expensive. Those are not the same problem.

Second, the most important question is not, “How do we improve the model?” It is, “Where does a model error become a real business outcome?” That is the point where product decisions matter most. That is where validation rules, fallback paths, approval gates, and review steps should be designed.

Third, product teams need to build control points where uncertainty becomes action. If a model output is going to trigger a payment, a denial, a routing decision, or a legal consequence, then the product needs a clear way to inspect, constrain, or stop that action before it happens. That is where product design becomes risk design.

A simple lens for evaluating AI products

One practical way to evaluate an AI product is to ask how strong it is across three dimensions:

- Model robustness.

- System reliability.

- Workflow determinism.

If any one of those is weak, the product can still fail. But if the workflow layer is weak, it often doesn’t matter how good the model is. You may still have a system that looks impressive in a demo but proves unreliable in production.
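One way to turn this lens into a review artifact is a weakest-link scorecard. The sketch below is an assumption about how a team might record it: the 0 to 5 scale and the field names are hypothetical, and taking the minimum is just one simple scoring rule.

```python
from dataclasses import dataclass


@dataclass
class RiskScorecard:
    # Each score is a hypothetical 0-5 rating agreed on in a product review.
    model_robustness: int
    system_reliability: int
    workflow_determinism: int

    def weakest_dimension(self) -> str:
        scores = vars(self)
        return min(scores, key=scores.get)

    def overall(self) -> int:
        # Weakest-link scoring: a strong model cannot offset a weak workflow.
        return min(vars(self).values())
```

For example, `RiskScorecard(5, 4, 1)` has an overall score of 1 and reports `workflow_determinism` as the dimension to fix first.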

That is why the workflow layer matters so much. It is not just another technical layer. It is the control point where decisions happen, where auditability is created, and where trust is either built or lost.

The deeper insight

The deeper insight is that the workflow layer usually owns the value.

Model providers improve the engine. Infrastructure teams improve delivery. Product teams decide how the product behaves when it matters. That means the real strategic leverage often sits in the place where model output becomes business action.

Whoever owns that layer controls the decisions, the audit trail, the user experience, and eventually the economics. That is why AI products are not won purely by better models. They are won by better system and workflow design.

Full Resource Map

The AI Product Risk Stack is a complex framework involving multiple layers of technical and operational control. We have provided a high-fidelity visual map of this stack for use in strategic planning and engineering reviews.

Bottom Line

AI risk is not one problem. It is a stack.

Model risk affects correctness.

System risk affects reliability.

Workflow risk determines consequences.

Most teams spend too much time optimizing the first layer. The best teams spend more time designing the third.

That is where AI products become durable, explainable, and useful in the real world.
