Risk Allocation as a Product Responsibility: The Forensic Audit

The Diagnosis

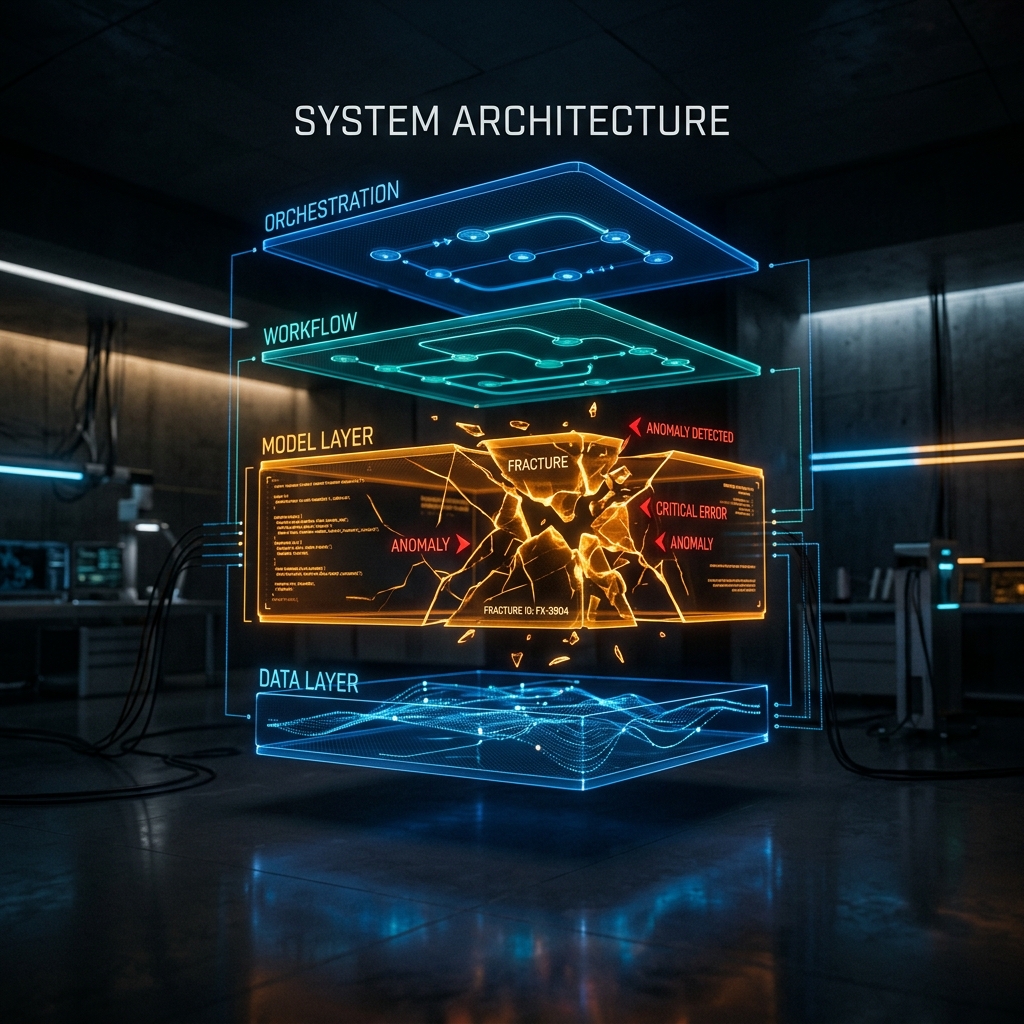

Most AI failures don’t come from a single “bad model” moment. They emerge from a chain of smaller breakdowns that compound across the system—the model, the orchestration layer, and the workflow itself. By the time a failure becomes visible, the root cause is often somewhere else entirely.

You need to localize the failure to fix the product.

The Core Claim

The model is often blamed for problems that actually occur one layer up or one layer down. That confusion is expensive. It leads teams to tune prompts when they should be fixing retrieval, swap models when the issue is routing, or redesign workflows when the real problem is brittle output handling.

You end up working on symptoms instead of fixing systems.

The Risk Stack

AI product failures can be understood across three distinct layers.

At the most visible level sits model error. This is what everyone notices first: hallucinations, inconsistent answers, weak extraction, and poor reasoning on edge cases. These failures are obvious, but they are also misleading. They tell you what the model did wrong, but not why the product failed.

Beneath that sits system error—the layer where many AI products quietly break. This includes bad retrieval, incorrect prompt assembly, context overload, and routing requests to the wrong model. It also includes retry loops that unintentionally amplify cost and latency, and missing timeout or fallback logic. In many cases, the model is actually capable, but the surrounding system turns a reasonable output into a broken experience. What looks like a model issue is often an orchestration issue.

At the top of the stack sits workflow error, where failures become expensive. Here, the model may produce a technically acceptable output, but the outcome still fails the business task. The system may accept an answer that should have been validated, or send a user down the wrong path because no control gate exists. In some cases, the product relies on the model to make decisions that should have been deterministic from the start.

The fact is: the model can be “correct,” and the product can still fail.

How Failures Cascade

A typical failure does not happen in isolation. It unfolds as a sequence:

graph TD

A[User Request] --> B[System: Weak Retrieval]

B --> C[Model: Incomplete Answer]

C --> D[Workflow: Accepted without Verification]

D --> E[Outcome: Visible Product Failure]

style B fill:#fff3e0,stroke:#ff9800

style C fill:#fff3e0,stroke:#ff9800

style D fill:#fff3e0,stroke:#ff9800

style E fill:#ffebee,stroke:#f44336,stroke-width:2px

The visible failure appears at the end of this chain, but the root cause often sits near the beginning. That is why forensic thinking matters. Without it, teams fix the last visible break instead of the first meaningful one.

A Practical Diagnostic Approach

When something breaks, the instinct is to blame the model. That instinct is usually wrong, or at least incomplete.

A better approach is to diagnose failures in sequence:

- Examine the model: Was the output factually incorrect, incomplete, or did it misunderstand the request?

- Examine the system: Was the right context provided? Was the prompt constructed correctly? Was the correct model selected? Did retries or truncation introduce unintended behavior?

- Examine the workflow: Should the model have been allowed to make that decision in the first place? Was a validation step missing? Was the downstream system too permissive in accepting outputs?

This order matters because it prevents teams from optimizing the wrong layer.

Why PMs Need This Lens

Product managers often hear that “the model is bad” and stop there. The real issue is rarely just the model; it’s how responsibility is distributed across the system.

Instead of accepting the "bad model" narrative, ask:

- Is this failure local or systemic?

- Is the fix at the prompt level or the architecture level?

- Most importantly: Should the system rely on the model here at all?

These are not just technical questions. They are decisions about where risk lives in your product. You are pulling what look like architecture decisions into product thinking. The most important question you can ask is: “Where in the stack does the failure originate—and which layer should absorb the risk?”

Architecture determines how the system is built. Product determines where the system is allowed to fail.

The Risk Allocation Matrix

Reliability is not about making every layer perfect; it is about knowing where to invest in control based on the nature of the task. We can map the layers of the risk stack across two axes: Determinism (how predictable the output is) and Failure Cost (the business consequence if it breaks).

quadrantChart

title Risk Allocation & Control Strategy

x-axis Low Determinism --> High Determinism

y-axis Low Failure Cost --> High Failure Cost

quadrant-1 High Control Needed

quadrant-2 Safeguards & Monitoring

quadrant-3 Low Risk Zone

quadrant-4 Process Efficiency

Base Model Inference: [0.2, 0.8]

System Routing: [0.5, 0.5]

Workflow Logic: [0.9, 0.9]

Navigating the Matrix

The matrix reveals a fundamental truth about AI product strategy: as you move toward the top-right, the need for explicit controls, validation, and risk management increases.

- Workflow Logic (Top-Right): This should be the most tightly controlled area. Because it is highly deterministic and carries high failure costs, it is where you should use hard rules, validation schemas, and deterministic gates.

- Base Model Inference (Top-Left): This is high stakes but inherently less deterministic. You cannot manage this with hard rules alone. Instead, it requires safeguards: evaluation sets, guardrails, and real-time monitoring to contain the variance.

- System Routing (Center): This layer acts as the bridge, managing medium levels of both risk and complexity.

By mapping your product features onto this matrix, you can decide whether to fix a problem by improving the model (moving left-to-right) or by adding workflow controls (moving bottom-to-top).

The Control Takeaway

Forensic diagnosis is all about identifying the control point. Once you locate where the system breaks, you can decide how to intervene. You might constrain the input, validate the output, reroute the system, introduce a fallback, or redesign the workflow entirely.

This is how AI systems become reliable—not by improving the model alone, but by structuring the system around its weaknesses.

Bottom Line

If you do not separate model error from system error from workflow error, you will continue treating symptoms instead of causes.

The forensic audit is the discipline of finding the real break. Separating model, system, and workflow errors is only the first step. The real work is deciding which layer owns the consequence of failure. That decision defines how reliable your system is, how expensive failures become, and whether your product can scale beyond a demo.

Risk allocation determines how your product behaves when the model is wrong. Everything else is just how you enforce that decision.

Download the Architecture of Proof Checklist

Ready to implement? Get the definitive checklist for building verifiable AI systems.